Introduction

As a DBA or SQL developer, you most probably encountered the tiresome issue of executing multiple SQL scripts one-by-one during your project release time or during a migration of data changes to a different database. Wouldn't it be much more efficient to point an executable to a specific directory with SQL script files, click "start' and voila – every script has been executed?

Multiple Solutions Available

Even though there are some excellent third-party solutions on the market such as RedGate SQL Multi Script, in my company (a major US bank) we could not use them as the database development environment continues to be very restrictive. The server machines may only have absolutely necessary third-party tools or packages installed. In this case, it was much easier for our team to build our own solution rather than to justify a purchase of the external third-party package.

Taking into account our background as SQL Developers, there were a few options to choose from: sqlcmd utility, PowerShell scripting and SSIS. The future solution should have come with the robust database logging as well as with accurate error output.

Our development environment consisted of MS Visual Studio 2013 (VS 2013), SQL Server 2014 and Windows Server 2012 R2.

The sqlcmd utility seemed to be the easiest to start with. Unfortunately we learned very quickly that sqlcmd was unable to interpret the errors coming from the failed executions of EXEC SP_EXECUTESQL, used to run dynamic SQL statements.

Then we turned our attention to PowerShell scripting, but the following problems were uncovered:

- PowerShell interpreted quotes differently from how in-line SQL ( SQL Server Management Studio) interpreted them. The scripts containing EXEC SP_EXECUTESQL with double quotes around dynamic SQL statements were generating errors of "missing quotes'.

- Script block size. The max size of SQL script that PowerShell was able to execute as a single file was about 32,000 characters. All scripts with a larger number of characters were split (in memory) into the smaller chunks. That led to multple errors, since some of our stored procedures and large batch scripts (e.g. during data migration with a lot of INSERT statements) were exceeding the 32,000 characters.

Finally, we decided to proceed with SSIS solution and its project deployment model.

SSIS Solution

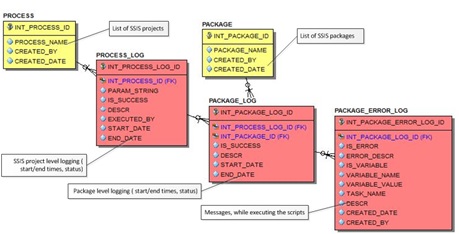

The SSIS project consists of a single package, five logging tables and five stored procedures which are used for logging.

Fig. 1 SSIS Data Model

All SSIS projects in our environment use the above set of tables. This simple set of logging tables was originally implemented in SQL Server 2008 R2, well before Microsoft came up with its own SSIS logging enhancements in SQL Server 2012.

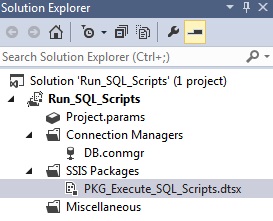

Fig. 2 SSIS project structure

Our SSIS project consists of a single package PKG_Execute_SQL_Scripts.dtsx and a single connection manager. Here is the packge:

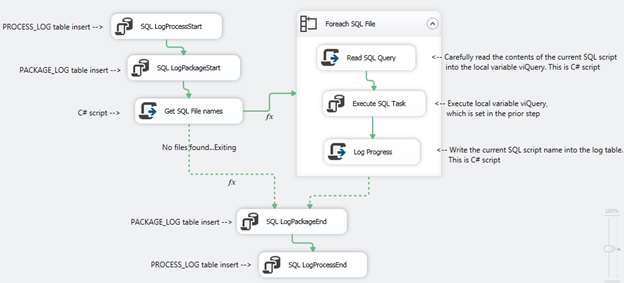

Fig. 3 PKG_Execute_SQL_Scripts.dtsx package control flow

And the connection manager:

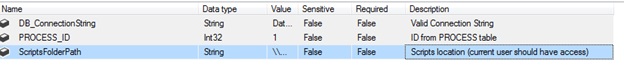

Fig. 4 SSIS project parameters

The scripts execution is a repeatable process. If you don't want certain scripts to be re-executed than you need either remove them or modify their names to include the "archive' key word. All scripts and folders containing "archive' word are ignored during execution.

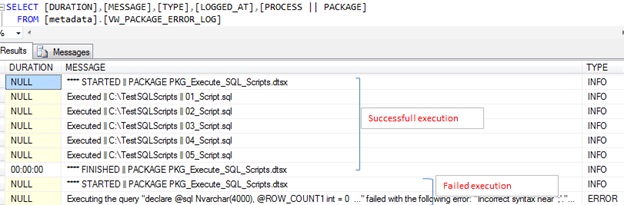

The execution stops immediately if any error is encountered. You can always correct the error (you know the error by looking into either VW_PACKAGE_ERROR_LOG view or PACKAGE_ERROR_LOG table) and restart the scripts executions from the beginning.

Fig. 5 Sample execution logs in SSIS.

The process can be executed either via VS 2013 or, once deployed to the server, via stored procedure metadata.[Run_SQL], which is included to the attached archive.

Conclusion

After comparing and testing several approaches for implementation of our database development use cases, we have decided to implement them with SSIS project solution.

Even though it initially appeared more labor intensive to build and test, we feel our project became a robust home-grown engine for SQL and data migrations. DBAs manual script executions during release times are no longer required!

Overall, our implementation provides

- Transparent logic, which can be easily customized or enhanced.

- Solid logging in case of errors

- Multiple possibilities of multiple script execution either from VS 2013 or from the server itself.

Special thanks to Vijay Jayakumar who was heavily involved in this project from the beginning.