Virtualization is one of the most disruptive and beneficial technologies to hit enterprise data centers in quite a long time. Businesses have been using virtualization technologies to consolidate their server infrastructures and save money in the data center for almost ten years now and these days, Virtualization is everywhere.

If you have not already virtualized your SQL Servers, it's only a matter of time before the subject arises within your organization. Unfortunately, my experience shows that organizations keep the hypervisor side solely visible to the infrastructure administrators, and DBAs are left in the dark without any insight or stake in the virtualization platform. As a result, the DBA is hesitant to move their critical servers to this new platform.

When their servers get virtualized, regardless, the DBA is usually not involved in the virtualization process, has and so has little insight into this new layer. It presents a 'black-box' where visibility is limited, and thus is surrounded in mystery.

Without an understanding how virtualization works, the DBA will have blind spots when attempting to resolving performance issues, such as reduce resource contention, or improve the backup and restore operations, and so on. They will not realize that they can often resolve a problematic process, such as the faltering backup strategy or long-running task, simply by adjusting it to use features and functions built into the virtualization layer.

It does not have to be this way. With a proper understanding of the virtualization platform, and the groups that manage it, DBAs can learn how to exploit it to their best advantage. The goal of this Stairway series is demystify each component of the system stack in virtualization, and explain how to use each one to improve SQL Server availability, management, and performance.

This first level will help you understand what virtualization is, why it is so important to understand the concepts behind it, and why you should cross some organizational silos to get more involved with the architecture and operations of the virtualized infrastructure on which your mission-critical SQL Servers run. As we progress into deeper topics, in later levels, we'll cover key hypervisor-specific tips and tricks that will help you maximize your virtualized SQL Server efficiency and flexibility.

From the Physical to the Virtual

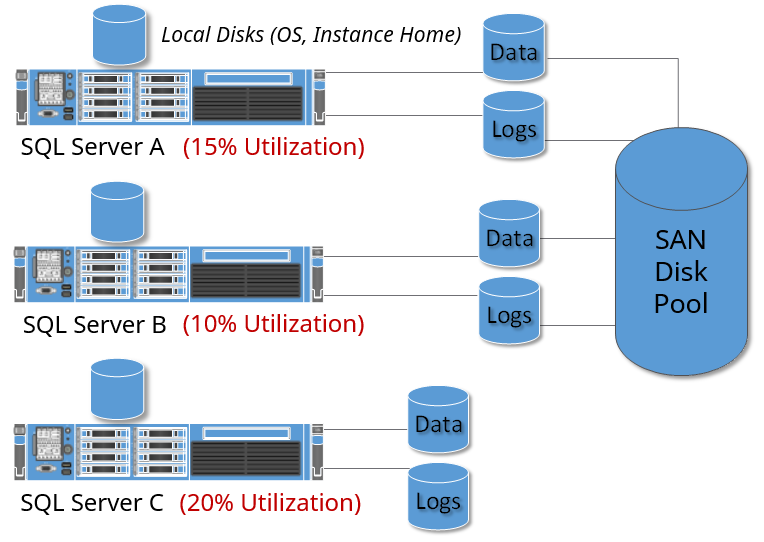

To define virtualization, first we should illustrate a traditional server infrastructure.

Figure 1

In a traditional server infrastructure, for every SQL Server request, we:

- Purchase and deploy a physical server.

- Configure local or attached (e.g. SAN) storage

- Install and configure the operating system

- Install and configure SQL Server

- Load the databases, and let applications connect to them

At this point, the physical resources of the server are fixed and finite. We will have allocated CPU, IO, memory resources to exceed the expected resource consumption for the expected workload. Therefore, for the vast majority of the life of this server, most of the raw aggregate computing power of this server is rarely used.

Virtualization helps to make more efficient use of the physical server. Functionally, virtualization is a new layer that sits on top of the hardware that allows us to run multiple independent operating systems on the same physical server. Although each of these operating systems share the physical resources available in that physical server, they are each fully independent and have no knowledge of the other operating systems running on the same physical machine.

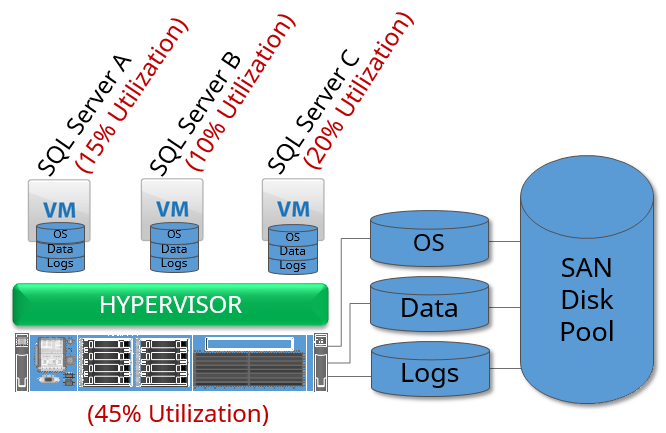

Figure 2

Multiple separate operating systems (referred to as virtual machines) can now independently run at the same time on one physical server, and an operating system is no longer dependent on that specific physical machine.

The virtualization layer adds queues that allow each of these virtual machines and the applications running inside them to request access to the same set of compute resources, such as CPU or memory. Each of these resource requests gets placed into the appropriate resource queue and eventually gets executed on the physical server, and the applications can continue to run.

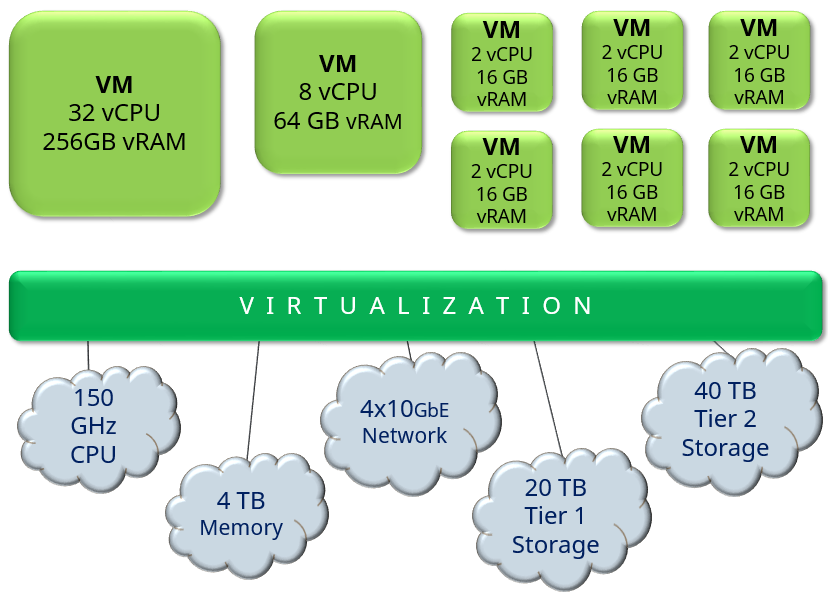

In the end, virtualization turns one or more physical servers into a collection of computer resources (such as 80GHz of CPU power, 1TB of available memory, or 25,000 operations per second of storage performance), which the VMs and their applications access via the queues. Once configured, the administrators are now able to divide up and allocate these resources by creating virtual servers, each with their own set of assigned resources.

Figure 3

Virtualization Terminology

System administrators tend to use some infrastructure-specific terminology to reference different parts of the stack, and it's best to speak the same language.

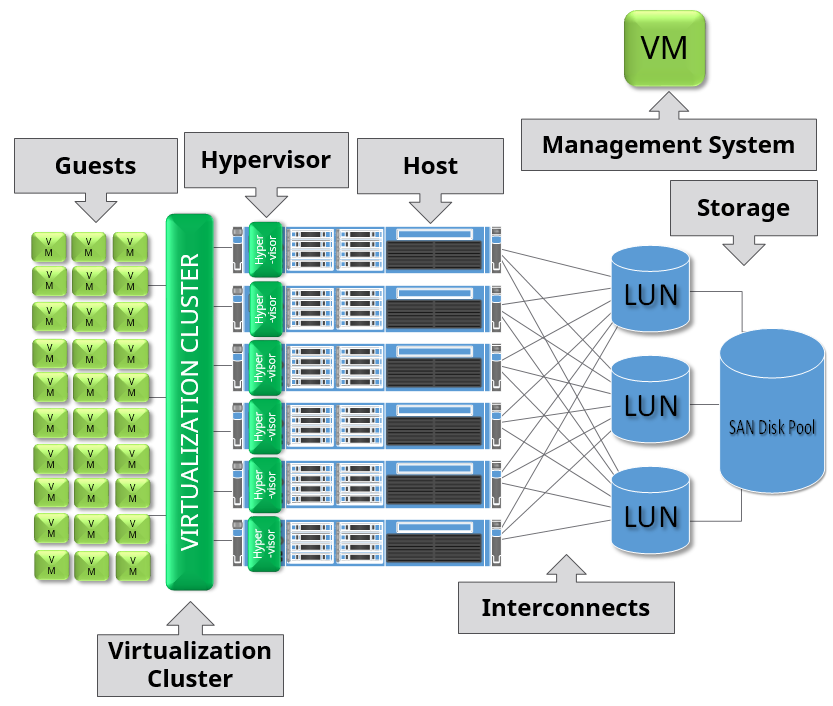

Figure 4

- A "hypervisor" is the virtualization layer that is installed and configured on each physical machine. The hypervisor handles the queuing of the virtual machine resource requests and their fulfillment with the appropriate resource delivery on the physical machine.

- A "host" refers to a single physical machine, located in a "cluster", or a set of hosts grouped together and governed by the same set of rules.

- An independent management system controls the actions and rules governing a group of hosts, watches all components of the infrastructure for outages, coordinates the automation of the environment, and provides a central location for administrators to manage the environment. It can be located within a virtual machine located in the same or different virtual environment, or a physical stand-alone server elsewhere in the datacenter. The hypervisor and the management system makes up the virtualization suite.

- A "guest" is a virtual machine that is located on this virtualization "cluster", and can reside on any one of the hosts in the cluster. It can only reside on one of the hosts at a time, so the virtual machine's scale is limited in size to the resources available in that single host.

- Interconnects reference the different networking and storage connectivity means to connect the hosts together and to the shared storage components.

A large number of virtualization hypervisors exist for the enterprise to select.

Each of these platforms is a major player in the virtualization space. Conceptually, all do the same thing: allow multiple virtual machines, each with their own operating system, to independently co-exist on the same physical server, and share the compute resources on one or more physical servers. Each have their own nuances, but most of the items you will see in this series is hypervisor agnostic. As we dive deeper into the architecture and performance, hypervisor-specific details will be highlighted.

This concept of resources and resource queues comes back over and over again throughout the Stairway series.

How does Virtualization help DBAs?

We all have heard the drivers behind virtualization before from a business standpoint: save money, energy, time, and so on. But, how can virtualization help the DBA? If properly utilized, virtualization can help the DBA in an almost endless list of ways.

Cost Reduction

The licensing costs of SQL Server can be pretty substantial, but can possibly be brought down (sometimes significantly) by properly consolidating SQL Server virtual machines with the appropriate virtualization licensing model.

Independent Consolidation

Normally, when a DBA thinks consolidation, they cringe with the memories of the challenges brought when multiple instances are consolidated to one, or multiple application databases are consolidated to the same instance. With virtualization, you are consolidating by improving the resource consumption, as a result of having multiple virtual machines co-exist on the same host. You do not have to consolidate instances or applications. Quite the contrary, the overhead from multiple operating systems is negligible from a resource consumption standpoint, so if the licensing allows for it, separating out the instances into independent guests is preferable.

High Availability

A virtualization layer can directly and indirectly add to the high availability (HA) and risk minimization strategy for your enterprise SQL Servers. Without virtualization, complex technologies must be used to create highly available SQL Servers. In some cases, virtualization high availability protection for a VM can replace a nontrivial HA solution with a very simple and transparent HA solution.

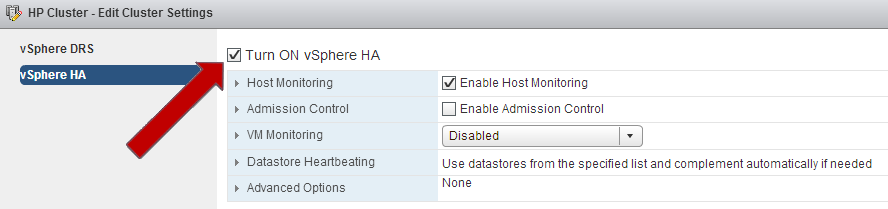

For example, a VMware high availability solution to provide resiliency against unplanned hardware outages is just a checkbox, and provides an approximately four-minute recovery for VMs impacted if a physical machine fails.

Figure 5

Even if a strictly VM-centric HA approach is not appropriate, VM-level HA can complement traditional SQL Server HA methods to reduce the risk introduced when a physical component fails.

Disaster Recovery

Disaster recovery gets much better with virtualized SQL Servers. Ordinarily, a SQL Server in the disaster recovery site should be almost identical to the source server, or else the organization risks hardware-dependent issues that can come up during the failover process. Performing a disaster recovery test can also be nontrivial in the physical world. With virtualized SQL Servers, the VM is no longer dependent on the nuances and intricacies of the physical machine. It can be restored and promoted to a live state without worrying about the dependencies of specific equipment. Virtualization technologies can used to simplify and complement, or even replace, traditional SQL Server disaster recovery techniques.

Agility and Flexibility

Due to the elimination of the dependence on the hardware, making system changes could not be easier. As long as your VM will fit on a single host in the cluster, you can dynamically adjust CPU, memory, networking, and disk configurations. If you exceed the limits of a single host, buy a bigger host and simply move the VM to the new host – while in use by the business. The same process applies for hardware upgrades. Add the new hosts to the cluster, migrate the VMs to the new equipment, and decommission the old hardware.

Standardization

How many combinations of server makes, models, BIOS and driver revisions, disk configurations, and file placement do you have in your environment? With a properly designed virtual infrastructure, start with a pre-configured and approved standard SQL Server VM template, and deploy from there. Your instances start from a standard configuration, and any differences from that point forward are approved and documented. You save time and effort, especially in an emergency.

Organizational Silos and Differences in Priorities

The history of virtualization is a relatively long and arduous one. Originally, virtualization was used for rapid and massive consolidation. The performance overhead of these early hypervisors was much higher than they are today, and the performance impact was definitely noticeable. However, early virtualization was used for simple preproduction workloads where performance did not matter as much.

Over time, the hypervisors constantly improved, became more efficient, and allowed for larger VMs. More and more production workloads were virtualized. Now, these platforms are ready for just about every production workload on the planet.

However, consider the original drive for virtualization – massive consolidation. Virtualization administrators were pressed to squeeze as many VMs into their environments as possible. Consolidation came first – performance came second. Fast-forward to today, and this directive still exists in most environments.

Now, DBAs have a different set of directives. Common DBA directives start with data availability, then integrity, and then performance. The first two align with the goals of the virtualization admin, but the core conflict – performance versus consolidation – still exists. Remember when I stated that virtualization is really just about resources and resource queues? The more activity that you have trying to access a resource, the more contention your critical virtual machines have getting to the resource. Contention to a physical resource means a silent performance penalty on the VM. The greater the contention, the higher the performance penalty becomes.

This conflict between consolidation and performance is at the root of most of production SQL Server virtualization's historical problems. SQL Server virtualization gets a bad name for itself because of how virtual machine admins create and manage the virtual SQL Servers. VM admins do not know the nuances of the applications that they are virtualizing, nor should they be expected to know. However, their best practices, which usually work fine for most workloads, can cause major trouble for the enterprise DBA. SQL Server is very latency sensitive to infrastructure delays, and what might cause a 1-2% performance impact on a file server might cause a 25% or greater performance penalty to a large SQL Server. It's up to you, the DBA, to demonstrate this. Knowledge of the environment is key to discussing these issues and concerns with the VM admins.

The easiest things that you can do to demonstrate a knowledge of a virtual infrastructure's impact on SQL Server is to conceptually understand the stack and use objective information to demonstrate the performance impacts due to the infrastructure underneath it. Having these objective metrics will make or break any virtualized application, and if you are prepared for total production virtualization in this manner, you will have less heartache maintaining the availability and performance of your mission-critical SQL Servers.

SQL Server Virtualization Monitoring

Monitoring, collecting, and trending key performance metrics in your environment will help DBAs trend and track performance before and after virtualization. How else can you provide feedback on performance if you cannot objectively prove how fast your systems were performing before they were virtualized? Setting up a process for ongoing performance statistic collection, analysis, and infrastructure visibility is critical for achieving the maximum efficiency in this virtualized environment.

Ongoing Performance Collection

Ongoing collection of the performance metrics in Windows Server and SQL Server are critical. Fortunately, it's relatively simple to configure and maintain this collection. If you have a fancy tool such as System Center or some other collector, make sure you get all of your key counters added. If you do not have access to such a tool, you have Windows Perfmon built into the operating system at your disposal! You can automatically collect Windows and SQL Server metrics without impacting your environment. Directions for setting up Perfmon for 24x7 collection of performance are available over at my blog site (http://www.davidklee.net/articles/sql-server-articles/perfmon/).

Benchmarks and Baselines

Outside of key system performance counters, you should also collect performance statistics from items of importance from within your SQL Server environment. Measure the ongoing performance of item such as long running queries, key report runtimes, backup times, ETL step timings, etc. – anything that matters to you.

Anything that you collect and measure here should be able to be repeated, and the results should be stored in something that you can use to trend the ongoing performance.

Insight in to the Virtualization Layer

You deserve the access into the virtual environment. The management systems behind enterprise virtualization collect and store key performance metrics from the hosts, the guests, and the infrastructure underneath, such as host-level CPU performance, aggregate storage consumption, and resource queue wait times. At a minimum, you should have read-only access to these systems for the virtual machines that you manage and the hosts that they reside on. Education is key to interpret these metrics, but getting access to this layer allows you to quickly see if any item in the infrastructure is not performing as expected.

Next Steps

In this introductory step in this Stairway series, we discussed the basics, key benefits, and inherent conflicts of SQL Server virtualization. In the next installments of this series, we will discuss how to construct the ideal SQL Server virtual machine. We will discuss how the choice of the hardware underneath SQL Server VMs is critically important, including the compute nodes, networking, and storage. Monitoring and alerting will be discussed in depth, and performance validation will be analyzed in depth. Stay tuned for more soon!