Multiphase Data Pump

Introduction

When transferring data using DTS, the multiphase data pump is a very flexible task that you can use, but it is not just one process; there are various processes attached to this DTS task. If your developers are allowed to code at each of the different phases that are available, they can add much flexibility to the DTS process. Among the functionalities that the above process provides are those listed below.

The ability to restart the data pump without having to reload large number of rows that were already processed. You can add functions to save processed raw data, batches, or partial batches, writing that data back to the source or a status table for later use.

Individual handling of different types of insert or transformation errors. For example, you could add special error handlers to customize problems handling NULL data or constraint violations.

Customizing data pump initialization or termination steps. For example, on data pump initialization you could write out a schema header to a file prior to writing XML data to the file.

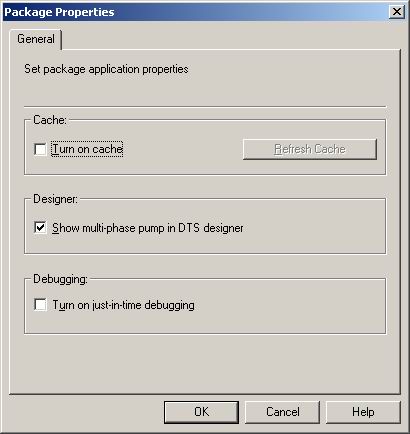

This option can be enabled from Enterprise Manager. Right click the Data Transformation Services folder and select Properties. Then enable the Show multi-phase pump in DTS

designer.

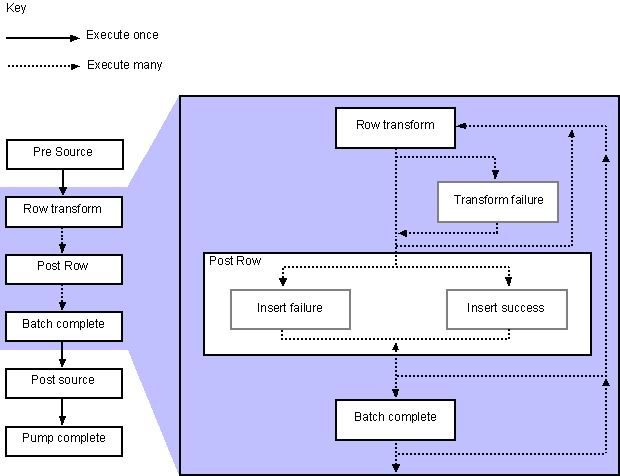

Data Pump Phases

The following phases are available for the use of the Data pumping process

1. Pre Source Phase. This is activated before the whole data pump and therefore only once for this task.

2. Row Transform Phase. This is the actual moving of the data. It's what you would normally see in a data pump.

3. Post Row Transform Phase (On Transform Failure). Caused by errors such as data type conversion errors.

4. Post Row Transform Phase (On Insert Failure). Caused by errors such as Primary key Violations.

5. Post Row Transform Phase (On Insert Success). This fires when the row is valid and moves to the destination.

6. Batch Complete Phase. This will fire for every batch insert you do, based on the Insert Batch Size.

7. Post Source Data Phase. Executes after the last row of data has been transformed (or not). This is also executed only once for the task.

8. Pump Complete Phase. As the name suggests, this fires after the data pump complete. This phase executes only once for the entire task as well.

Source : SQL Server 2000 , Books on line

How to Configure the Data Pump

Lets start with a simple example. We will use a simple DTS package, which will use to transfer data from Access to SQL Server. The package contains two tasks, as shown below.

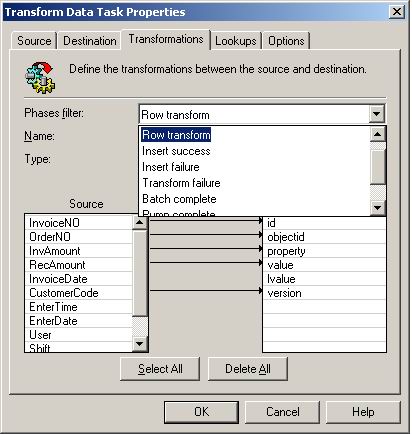

Right click the Transform Data Task and select Properties, then select the Transformations tab. You will see a dialog like the one below.

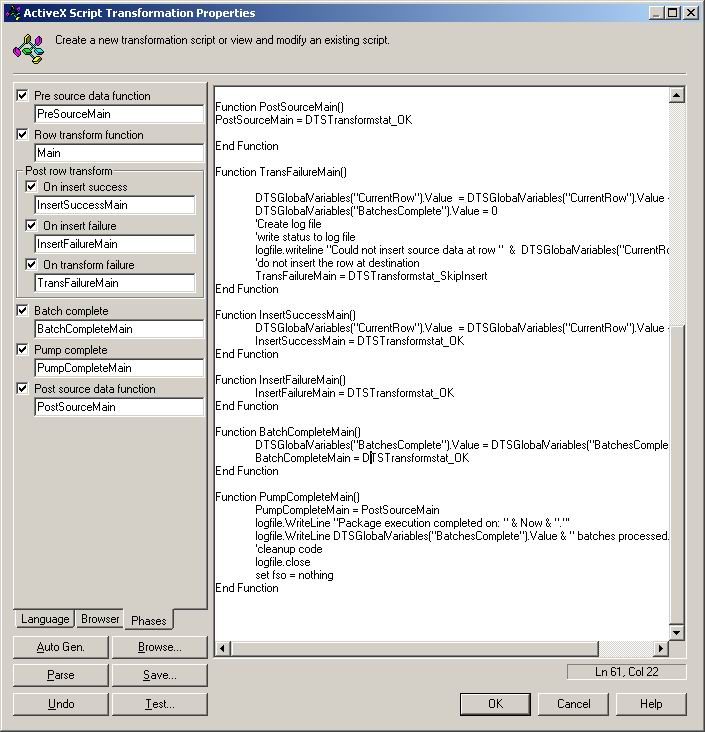

Remove all the existing predefined transformations which DTS gives you when creating the task. Then select New button and select ActiveX Script and press OK. You will get a dialog that has a tab called "Phases", which lists all the phases of the data pump as shown below.

You can now select the phases that you need. Then select

the General tab of the same dialog and press Properties

button. In that dialog select Phases tab. You will see code stubs for each of the phases that is enabled.

For the above ActiveX Script Transformation Properties dialog users are allowed to include those functions and coding that you need. For example you can log the errors to the test files or you can insert them to a table or else you can display them in a message box or a file. If you are a developer, you know how helpful those error messages are. The code to write these messages to a file is below.

'**********************************************************************

' Visual Basic Transformation Script

'************************************************************************

' Copy each source column to the destination column

strLogFile = "E:\DTSLOG.txt"

Set fso = CreateObject("Scripting.FileSystemObject")

Set logfile = fso.CreateTextFile(strLogFile)

DTSGlobalVariables("CurrentRow").Value = 0

Function Main()

DTSDestination("Fname") = DTSSource("Fname")

DTSDestination("LName") = DTSSource("LName")

'Check for NULL's and mark as an error so that the

TransformFailure phase gets called.

if isnull(DTSSource("Fname")) or isnull(DTSSource("LName"))

then

Main = DTSTransformStat_Error

else

Main = DTSTransformStat_OK

end if

End Function

Function PreSourceMain()

'Initialize global variables

DTSGlobalVariables("BatchesComplete").Value = 0

'Create log file

logfile.WriteLine "Package execution started On: " & Now &

"."

PreSourceMain = DTSTransformstat_OK

End Function

Function PostSourceMain()

PostSourceMain = DTSTransformstat_OK

End Function

Function TransFailureMain()

DTSGlobalVariables("CurrentRow").Value =

DTSGlobalVariables("CurrentRow").Value + 1

DTSGlobalVariables("BatchesComplete").Value = 0

'write status to log file

logfile.writeline "Could not insert source data at row " &

DTSGlobalVariables("CurrentRow").Value

'do not insert the row at destination

TransFailureMain = DTSTransformstat_SkipInsert

End Function

Function InsertSuccessMain()

DTSGlobalVariables("CurrentRow").Value =

DTSGlobalVariables("CurrentRow").Value + 1

InsertSuccessMain = DTSTransformstat_OK

End Function

Function InsertFailureMain()

InsertFailureMain = DTSTransformstat_OK

End Function

Function BatchCompleteMain()

DTSGlobalVariables("BatchesComplete").Value =

DTSGlobalVariables("BatchesComplete").Value + 1

BatchCompleteMain = DTSTransformstat_OK

End Function

Function PumpCompleteMain()

PumpCompleteMain = PostSourceMain

logfile.WriteLine "Package execution completed on: " & Now &

"."'

logfile.WriteLine DTSGlobalVariables("BatchesComplete").Value

& " batches processed."

'cleanup code

logfile.close

set fso = nothing

End Function

If you ran this for a package, the text file output would be something like this:

Package execution started On: 2005-03-09 3:31:51 PM. Could not insert source data at row 2 Could not insert source data at row 3 Package execution completed on: 2005-03-09 3:31:51 PM. 1 batches processed.

From the above output we can verify that errors occurred in the rows 2 and 3.

Below I have listed some of the constants, which will be helpful when programming this task in DTS.

| Constant | Description |

| DTSTransformStat_AbortPump | Processing is terminated with the current row |

| DTSTransformStat_Error | Indicates the transformation encountered an error. |

| DTSTransformStat_ErrorSkipRow | Terminate further processing of this row, for nonerror reasons. |

| DTSTransformStat_ExceptionRow | Terminate further processing of this row as an exception and call the error sink, and write this row to exception file. |

| DTSTransformStat_NoMoreRows | The current row is the last to be processed. The current row is processed as specified by other transformation status values. This value differs from DTSTransformStat_AbortPump in that no error is raised. |

| DTSTransformStat_OK | Default conversions (if any) succeeded. Write the row to destination if specified, without calling any error handlers. |

| DTSTransformStat_SkipFetch | Do not fetch the next row; reexecute all transforms against the current source and destination rows. |

| DTSTransformStat_SkipInsert | Do not write the current row to the destination. |

Source : SQL Server 2000 , Books on line

Insert Failure

An Insert failure function will be triggered when data fails to insert. A suitable example for this kind of failure is if you try to insert a Null value in a Non-Nullable column.

Transform Failure

A simple example for this kind of failure is inserting a different type of data than the column allows. For example if DTS is trying to transfer data from a char field to an int field, then this event is fired.

Conclusion

Most of the times DTS gives us headaches when errors occur. Like in other development tools, it is much easier if we can debug the package to find these errors. By using the multiphase data pump mechanism, developers will be able to tackle more complicated DTS packages while making their job much easier.