Introduction

The main purpose of this article is to show you how to build a SQL database on Azure blob storage. There are many reasons to why you would want to do this which are covered here: https://msdn.microsoft.com/en-us/library/dn385720.aspx.

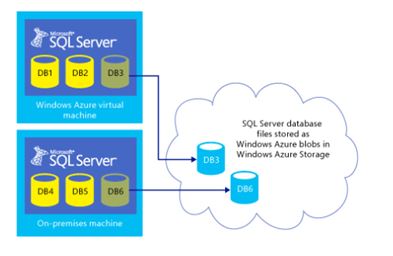

Below is a diagram taken from Books On Line (BOL) that shows the architecture. By the end of this article we will have built a SQL database whose database files reside on Azure storage from a SQL Server that is on-premise. This is similar to that of DB6, as shown within the image below.

High Level Requirements

You will need the following items to create your database on Azure Storage:

- An on premise SQL Server 2014 Express / Standard / Business Intelligence / Enterprise edition.

- An Azure Account.

- Access to your Azure storage account.

- Primary Storage Access Key (To connect to Azure storage).

- A policy and a Shared Access Signature (SAS) – Granular level key to a storage container level.

- Credentials on SQL Server with rights to create a database.

The Azure Storage Account

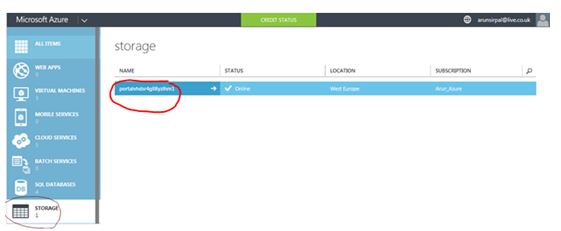

Once you have logged into the Azure portal you need to navigate to the STORAGE section as indicated by the black circle on the screen shot below. You will then need to create a storage account, (or if you have one already you can use that) then from here you should create a dedicated container to hold your SQL data files.

Clicking on the storage account name (red circle) gives you a global view of settings.

You can see from my screen shot below I am using locally redundant storage.

Geo-replication for the storage account is not supported for Azure database files, as stated in BOL: “If a storage account is geo-replicated and a geo-failover happened, database corruption could occur”.

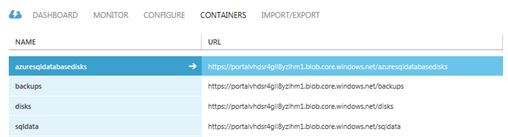

Now we need to drill into the container information. To do this you need to click on the containers button circled in red below:

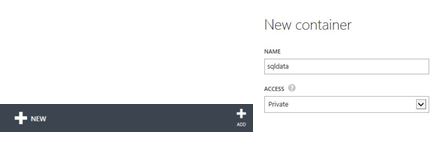

To build a new container you need to look for the add button which is at the bottom of the portal within a ribbon section:

The below screenshot shows the container overview with the newly created sqldata container. By default the container is set to private, which is a best practice setting.

Azure Storage Account

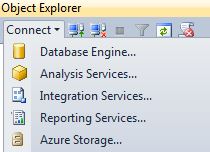

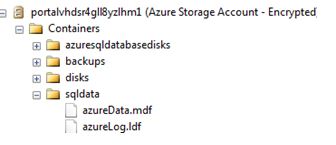

Via your on-premise SQL Server 2014 Management Studio (SSMS), we can connect to Azure storage. I like doing this so that I can view the account details locally rather than flipping over to the web based Azure portal.

Using Object Explorer you need to connect to Azure Storage.

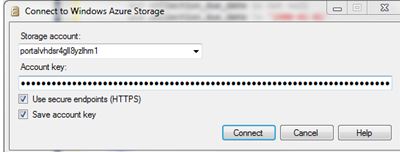

You will need your storage account name and primary storage key which you can get from Azure.

The details are obtained from your Azure storage account > manage access keys section.

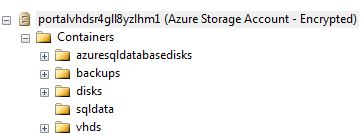

Once you have populated the form with the correct data you will then be connected to your storage account:

Shared Access Signature

The Shared Access Signature (SAS) key is absolutely critical; this is because by using a SAS key we can enable SQL Server to access resources in the storage account without sharing the Windows Azure primary storage account key.

Therefore we need to fulfil the following:

- We must create a policy on a container and also generate a SAS key.

- For the container used by the database files we must create a SQL Server Credential whose name matches the container path.

- We must store the information regarding Windows Azure Storage container and the SAS key in the SQL Server credential store.

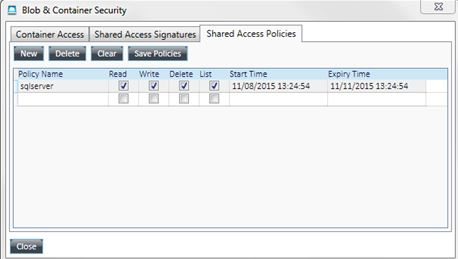

There are many ways to create the policy and the SAS key; you could use the Azure SDK for.NET or even PowerShell. I chose to use a codeplex tool called Azure Storage Explorer to help create a policy and generate a SAS key that will expire mid-November. The tool can be found here: https://azurestorageexplorer.codeplex.com/

Creating the policy

Once you have installed the Azure Storage Explorer tool, you then need to create a shared access policy.

Creating the SAS key

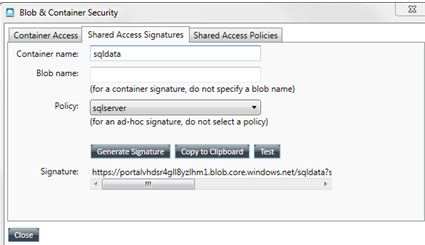

Then you will need to navigate to the Shared Access Signatures tab where you will then need to click on the Generate Signature button, as shown in the middle of the image below.

For the purpose of the article I have obfuscated the real SAS key.

The generated string will be similar to that of the following:

https://portalvhdsr4gll8yzlhm1.blob.core.windows.net/sqldata?sr=c&si=sqly3BlobyourSASkeygoeshereskills

The important concept here is to select the code block highlighted after the question mark (?), which becomes the SECRET for your SQL credential.

Creating the database

I am now ready to connect to my on-premise SQL Server and create a database. As mentioned before the SQL credential will be used to store authentication information to connect outside of SQL Server containing the path to the storage container and the previously generated SAS key.

USE Master

GO

-- Create a credential

CREATE CREDENTIAL [https://portalvhdsr4gll8yzlhm1.blob.core.windows.net/sqldata]

WITH IDENTITY='SHARED ACCESS SIGNATURE',

SECRET = 'sr=c&si=sqly3BlobyourSASkeygoeshereskills'

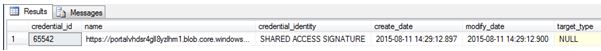

Under the context of the Master database we can confirm a newly created SQL credential.

USE Master GO -- Confirm credential created SELECT * FROM sys.credentials

Now the TSQL for the Azure based create database – notice the filename paths are the paths to my sqldata container.

USE Master

GO

CREATE DATABASE Azuredb1

ON

( NAME = azuredata,

FILENAME = 'https://portalvhdsr4gll8yzlhm1.blob.core.windows.net/sqldata/azureData.mdf' )

LOG ON

( NAME = azurelog,

FILENAME = 'https://portalvhdsr4gll8yzlhm1.blob.core.windows.net/sqldata/azureLog.ldf')

Confirmation

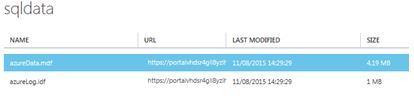

We now have a SQL database created and online with its files on Azure storage. We can see this in the Azure Portal.

We can also see this in the Azure Storage Viewer in SSMS.

We can also check this in T-SQL with the following code:

SELECT DB_NAME([database_id])AS [SQL Database Name], [file_id], name, physical_name, type_desc, state_desc, CONVERT( BIGINT, size/128.0) AS [Size in MB] FROM sys.master_files WHERE [database_id] = 13

This will give us results similar to the image below.

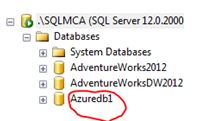

If we look in SSMS, we will also see this:

Summary

Hopefully this article has given you some insight regarding how to build an on premise SQL database whose data files reside on Azure Blob Storage. There are limitations to this approach such as lack of Filestream support and upper size limits on each individual database data and log files (1TB). I encourage you to read through the limitation list to determine whether or not this is a concept you would like to use. That list can be found here: https://msdn.microsoft.com/en-us/library/dn385720.aspx .