Introduction

In part 14, we learned how to run T-SQL queries using PolyBase to a CSV file stored in an Azure Storage Account. In this article, we will do the same, but instead of using a Storage Account we will read the CSV file stored in Azure Data Lake.

Azure Data Lake (ADL) is a lake of data. It is optimized to stored big data and big files. It is designed for large files and fast parallel reading. It is used for log files, streaming data, IoT data. The blob storage is for backups, binary data, texts, and more.

In this example, we will:

- Create a Data Lake Store from 0. If you do not have any experience in Data Lake, you are in the right place.

- Create an Azure Active Directory application. To connect to Azure, you need to create an application when you connect to Data Lake.

- Add permissions to the application to access to Data Lake.

- Create PolyBase access using T-SQL from our Azure SQL Data Warehouse to the Data Lake using the Azure Application registration.

Requirements

- We are assuming that you already have an ADWH database.

- An Azure Account.

- SSMS or Visual studio to run some queries in ADWH.

Getting Started

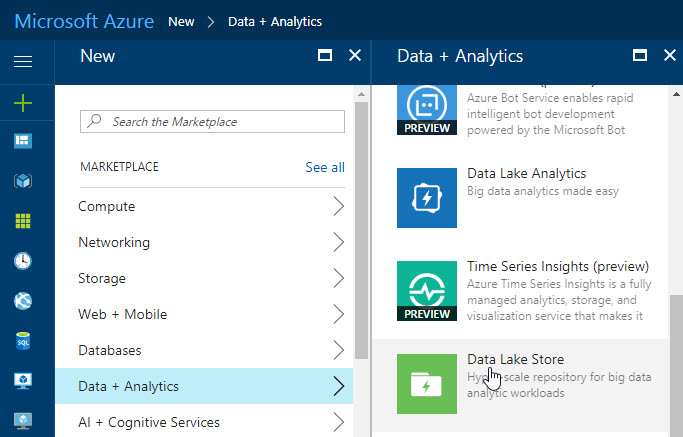

We will first create a Data Lake Repository. In the Azure Portal go to more services>Data Analytics>Data Lake Store

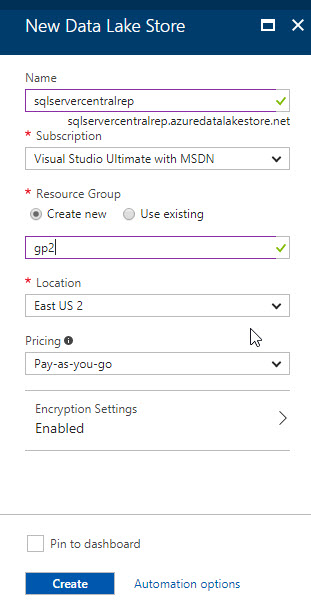

Add a name for the new Data Lake Store and create a resource group (or use an existing one). Select the Location and pricing. Pay-as-you-go will make you pay according to the space used. For example, the first 100 TB costs $3.9 and from 100 to 1000 TB costs between $3.8 and $38. The other option is a monthly commitment package. In this option 1 month of 1 TB costs $35 and 10 TB $320.

For more information about prices, please refer to this link: Azure Data Lake Storage Prices

Press the create button:

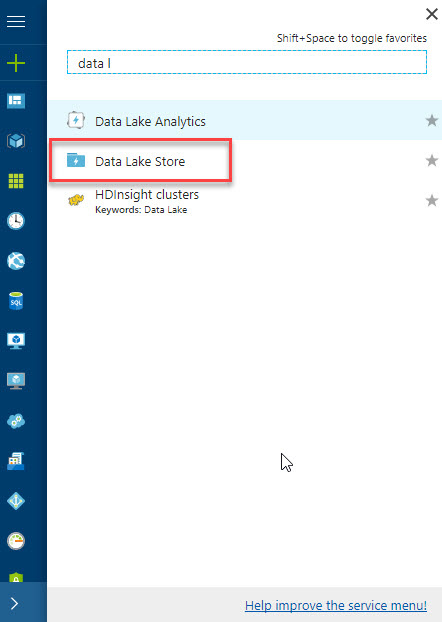

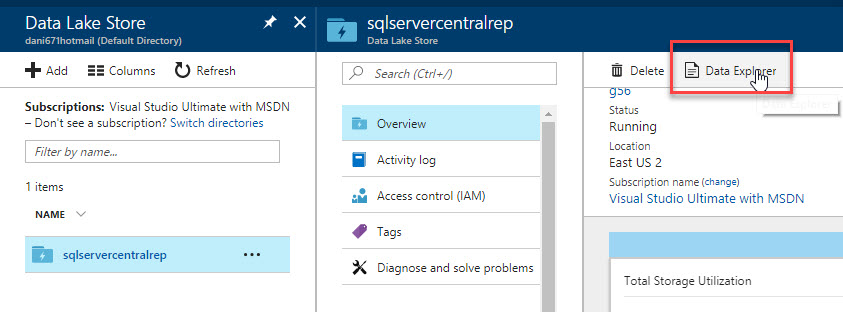

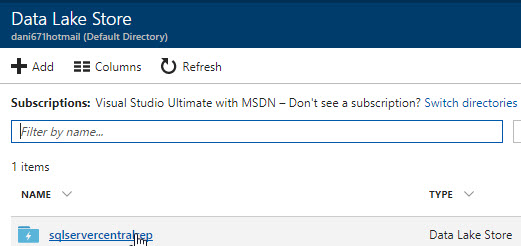

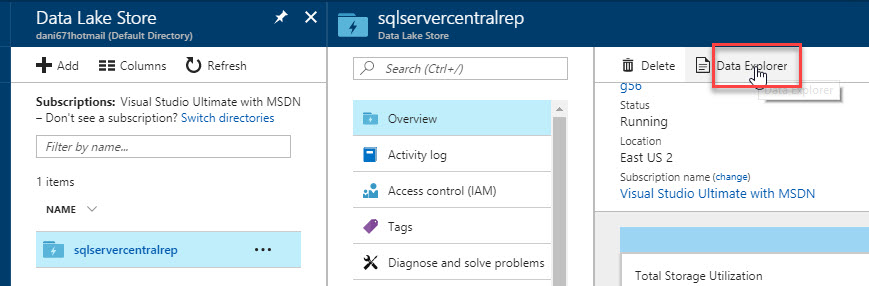

To verify the Data Lake Store created, go to More Services and look for Data Lake Store:

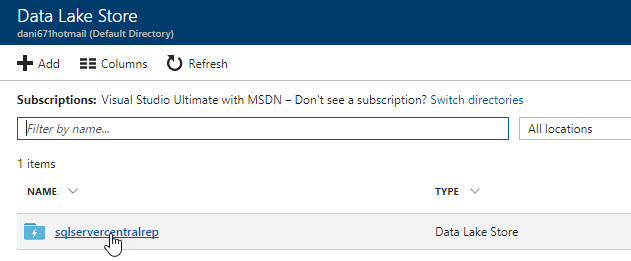

Click on the sqlservercentral Data Lake Store created:

Go to Data Explorer:

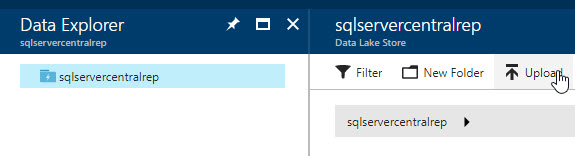

Press Upload to upload a CSV file:

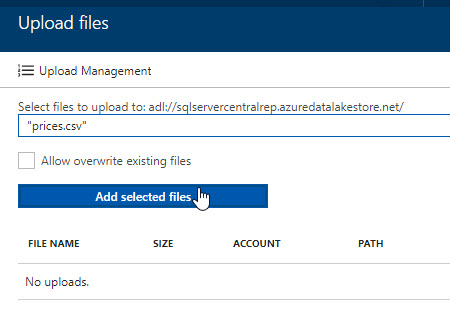

Select the prices.csv file. This file is in the resources section at the bottom of this article:

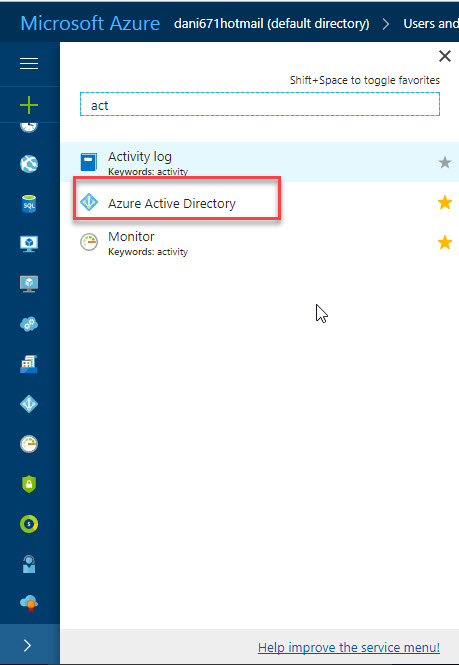

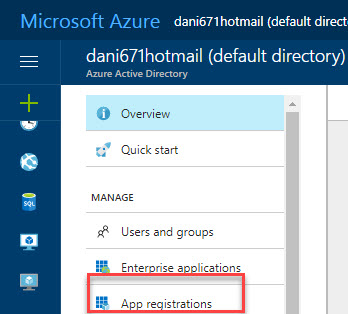

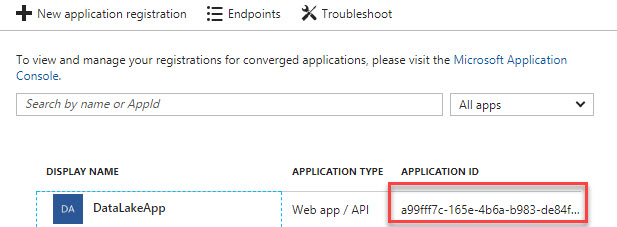

We will now create an application in Active Directory. To access to Data Lake, we will create an Active Directory App and assign permissions to it. In the Azure Portal, go to more services and search the Azure Active Directory:

Go to App registrations:

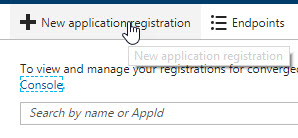

Select the option + New application registration:

Enter a name, select Web app/API and assign a URL:

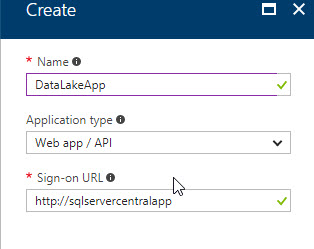

The Application ID will be used to connect to Data Lake using PolyBase. Copy that information:

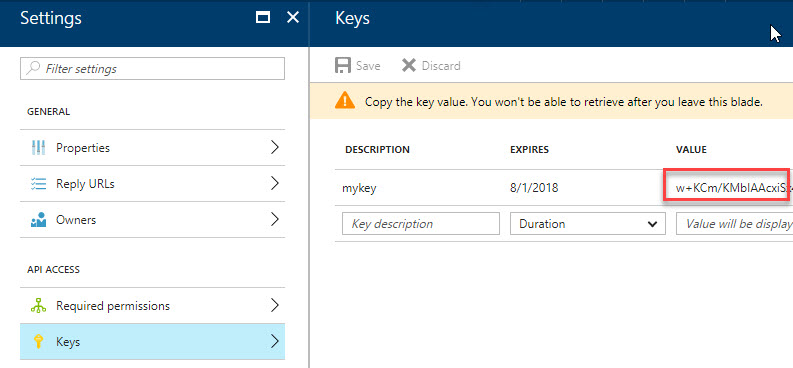

In Keys in the application created, add a new key. You will need a description and duration. The value will be created. Copy that key because it is also necessary to connect using PolyBase:

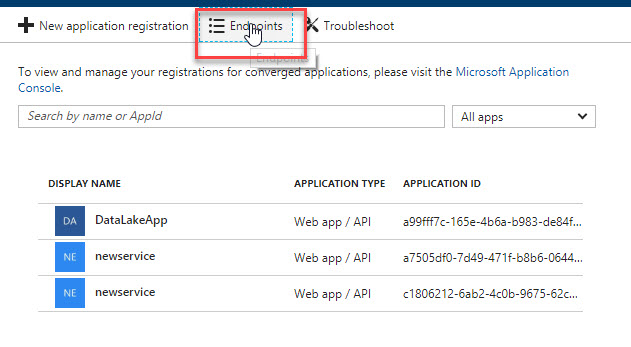

In the Application go to Endpoints:

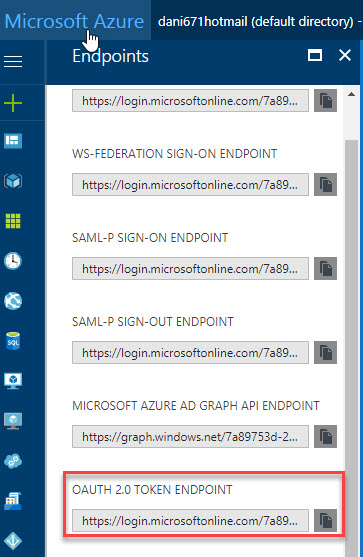

In Endpoints, copy and paste the EndPoint URL of the OAUTH 2.0 Token EndPoint. This information will also be used by PolyBase:

Go to the Data Lake Store again and go to sqlservercentralrep:

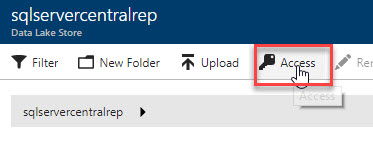

Go to Data Explorer:

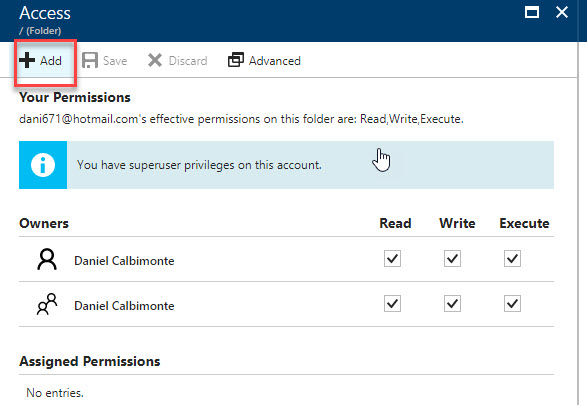

Go to Access:

Press +Add:

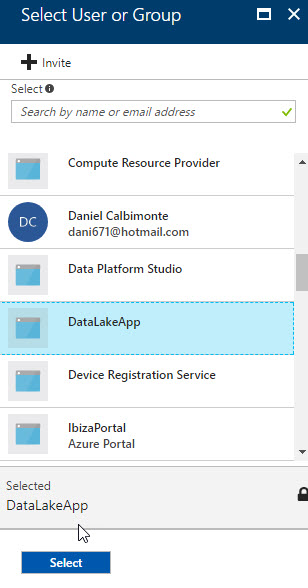

Add the DataLakeApp just created:

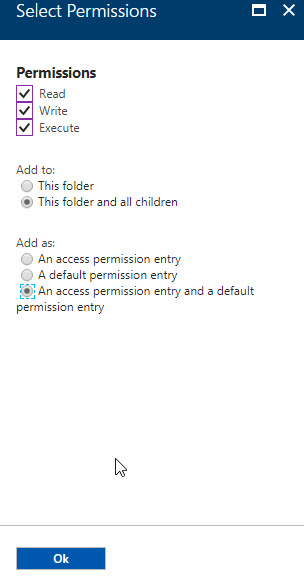

Activate Read, Write and execute permissions. Select the option Add to this folder and all children and select the option Add as an access permission entry and a default permission entry:

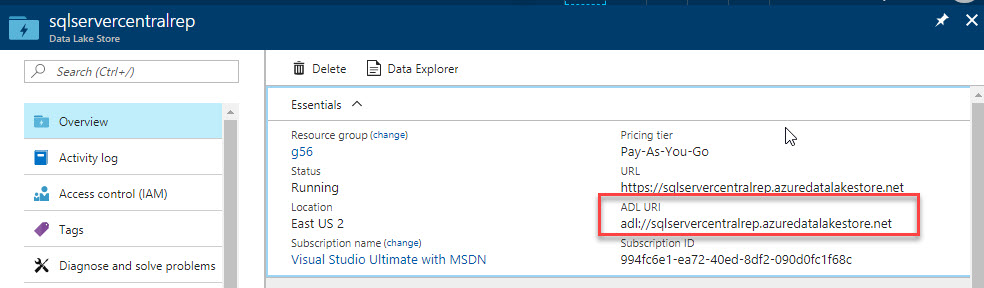

In the Data Lake Store created, go to overview and copy the ADL URI. This information is useful for the PolyBase connection. Copy this information:

PolyBase connection

OK, we now have a Data Lake Store with a CSV file named prices.csv. We also have an AD application with permissions to access to the Lake Store. Now, we will connect to our ASDW using SSMS and run the following T-SQL sentences:

If you do not have a master key, create a new one:

CREATE MASTER KEY;CREATE DATABASE SCOPED CREDENTIAL ADLCredential WITH IDENTITY = 'a99fff7c-165e-4b6a-b983-de84f82cdb@https://login.microsoftonline.com/7a89753d-2a93-4852-b304-5535509155/oauth2/token', SECRET = 'w+KCm/KMbIAAcxiSx40fj05URPZS/JTlC3WXcAHV6FY='

ADLCredential is the name of the database credential. It can be any name.

IDENTITY is the Application ID (you can get this information from the AD Application) plus the Application OAUTH 2.0 Token EndPoint that can be obtained in the EndPoint section of the AD Application.

SECRET is the key of the AD Application.

The next step is to create an external Data Source. In this example, the external data source name is AzureDataLakeStore, but it can be any name:

CREATE EXTERNAL DATA SOURCE AzureDataLakeStore

WITH (

TYPE = HADOOP,

LOCATION = 'adl://sqlservercentralrep.azuredatalakestore.net',

CREDENTIAL = ADLCredential

);In location, you need to add the ADL URI (you can get this information in the Data Lake Store Overview). We will create later an external file format:

CREATE EXTERNAL FILE FORMAT sqlservercentralformat

WITH (

FORMAT_TYPE = DELIMITEDTEXT,

FORMAT_OPTIONS (

FIELD_TERMINATOR = ','

)

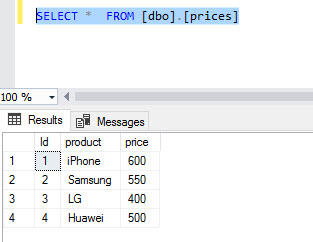

);We are defining the comma as a delimiter because we are going to query a CSV file. Finally, we are creating an external table named prices:

CREATE EXTERNAL TABLE prices

(

Id int,

product VARCHAR(64),

price int

)

WITH

(

LOCATION = '/',

DATA_SOURCE = AzureDataLakeStore,

FILE_FORMAT = sqlservercentralformat

)Location is the root in this case. If we had a folder in our Data Lake named folder1, the LOCATION would be /folder1 if the file was there.

Data source is the external data source and file format is the external file format. The data types of the table depend on the csv file data.

If we run a select, the external table we will be able to see the data:

Conclusion

In this article, we learned how to create a Data Lake Store, how assign permission using an AD App to our Data Lake Store and finally we queried a CSV file stored in Data lake Store using T-SQL.

Data Lake Store is optimized for big data and that is why it is a good idea to combine Azure Data Lake and Azure Data Warehouse.

If you want to know more about Azure Data Lake, we encourage you to read our Stairway to U-SQL series, which are amazing!