The subject of audit trails and logging data changes often comes up for DBAs. One of the common solutions to this is to set up triggers that log every change in the data in one or more tables. The purpose of this article is to go over that idea, and some variations on it, as well as some other means of auditing the database.

First, there are questions to be asked about the purpose of the auditing. How will it be used?

Some common uses include:

· Blamethrower Audits: I hate the idea, but it has to be mentioned in this subject. This is where the audit trail is primarily going to be used to point the finger of blame at people who mess something up. Sometimes a manager will demand this functionality and the DBA will have no choices except set it up, or find employment elsewhere. I don’t like this use, but it can be unavoidable.

· Management Reports: By this I mean reports on workflow, as tracked by the database. I’ve found this very useful. One company I worked for used this and found that 90% of orders took 3-5 days to get through a quality control and customer review step, while just over 9% took 1-2 days, and less than 1% took less than a day. Analyzing the differences in how the orders were handled at this step took the average down from 4 days to 6 hours. This just about doubled production capacity.

· Undo Functions: A stored procedure can read the audit trail on the data, and another can restore a row to a prior state from the audit trail. Normally, once a transaction is committed, going back means either re-editing all the data, possibly from a paper-trail, possibly from someone trying to remember what it was. With an audit trail, it can be very simple to review all of the states that a row of data has been in, and restore it to any prior state with one command. Given a simple front-end, a manager or employee could be given access to this, without having to go to the DBA just to get this done.

· Nothing At All Useful: Sometimes there might be a regulation or law that requires an audit trail, or a manager or executive insists on it without having any tangible reason for it. These audit trails must be created, but might never actually be used for anything, except possibly in the case of a court necessity.

In the case of “Nothing Useful” audit trails, the DBA may have no choice about how to set it up. The manager or regulation may stipulate a specific methodology, and “nothing else will do”. I can’t really help on those, it’s pretty much up to the DBA to figure out how to implement what’s being demanded.

In other cases, it’s important to review what the audit trail will be used for, and some important considerations on the effects of various methods of implementation.

If it's meant to provide legally defensible audit trails, which might end up in court, then triggers may not be the route to go (other options are discussed below). The whole idea of such triggers is that they insert data into a set of tables. Those tables, like any others, can be inserted into, deleted from, or updated, by anyone with adequate access. Depending on the authentication requirements, this kind of audit log can be bypassed by a DBA or system administrator with the right privileges. If that’s an acceptable risk, then triggers could be a solution. You’ll need to check the laws that apply, as they are beyond the scope of this article. (Basically, legal compliance isn’t something I can help you with. You’ll need to consult with lawyers, et al, on that.)

Audit logs that are meant for reporting and/or undo functions, generally need to be stored in tables that can be easily queried and that stored procedures can be run on. Depending on the complexity of the reporting, it might even be desirable to load the data into a data warehouse on a regular basis, possibly even Analysis Services cubes.

Once it has been determined what the audit trail will be used for, including combinations of uses, it is necessary to determine how to go about accomplishing those ends. Some possibilities include:

· Trigger-Based/Active Logging: This is logging by using triggers.

· Log File/Passive Logging: This uses the SQL Server log files instead of triggers.

· Proc-Based/Inline Logging: Stored procedures used to insert/update/delete data also log the changes.

· Mixed Logging: This means some actions are logged by triggers and/or procs, and the rest of the logging is left up to the SQL Server log files.

For most purposes, for most databases, I highly recommend passive logging. This means leave the database in Full Recovery mode, backup the log files regularly, and use those for all auditing.

Passive

Passive logging has several advantages:

· No cost to the code: There is no extra overhead in the database. Everything goes into the log files anyway, so transactions don’t take any longer.

· No introduction of errors: Triggers can have buggy code in them, or can become buggy when other things are changed in the database. Errors in triggers can cause failed transactions.

· Low maintenance: There is no maintenance on these except the actions that should be done for disaster prevention anyway. Log files need to be backed up, etc., but no more than they would otherwise be.

It has a few disadvantages:

· Cost: Some of the log parser products have a price tag on them.

· Availability: Usually, log parsing programs have a limited number of user licenses. Even if everyone who needs access to the audit trails has a license, these programs aren’t as easy to use as building a query on a table in a log database. That means the DBA is going to do almost all of the work in the audit trail.

· Reporting and Data Mining: Table-based audit logs can be queried. They can be loaded into Analysis Services cubes. They can be the basis for Reporting Services reports. I don’t know of a way to do that with the SQL log files.

If you aren’t doing a lot of reporting on the data, and aren’t doing a lot of undo functions, and can afford about $1,000 in your budget for a parsing program (or get a free one), this is by far the easiest method of logging to set up. It’s also the most accurate, and has the least (zero) impact on database performance. You don’t have to maintain the code yourself. And so on. It’s pretty ideal in these circumstances.

If this sounds like the right way to go, RedGate (http://www.red-gate.com/products/index.htm), ApexSQL (http://www.apexsql.com/sql_tools_log.asp), and Lumigent (http://www.lumigent.com/products/audit_db.html) all have products that can read SQL Server log files. They range in price (RedGate’s is free per their web page, but only works on SQL 7/2000, Apex’s costs about $1,000 per their page, but you can get a free trial, and Lumigent’s says “call to talk about pricing”).

Even at $1,000, if it saves you a couple of days of work over the following year or so, it’s probably worth it. (It’s hard to argue with the RedGate price if you can use it.)

Of these, I’ve used the Apex and Lumigent versions, and both are easy enough to use. They are a bit slow on large log files, but that’s to be expected.

One caveat on using them, which wasn’t obvious to me until I tried it: You may have the clever idea of moving a backup of the database to another server, restoring it there, and using the log parser on that copy, to avoid overhead on the main server. Don’t. SQL Server doesn’t restore log files that way, and it won’t get you what you need. If you need to do something like that, back up the log files, and then scan the backups. Apex and Lumigent will both scan log files that are in SQL backups. I assume the RedGate one will do the same.

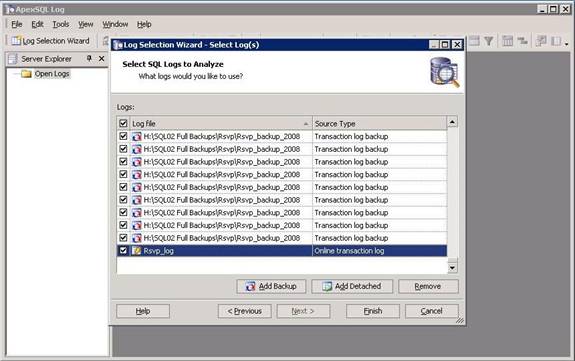

Here are some screen shots from the Apex product:

On this screen, you pick which logs you want to scan. It can include the current log file, as well as backups. It can scan log file backups in full, differential and log backups. You can pick which ones you want to scan. Because it works from the backup history stored in SQL Server, it can show backup files in this list that don’t exist any more, if those files have been moved or deleted outside of SQL Server.

Not shown here, on the screen prior to this one, you can pick which server to scan logs on, and which database or databases you want to scan logs for.

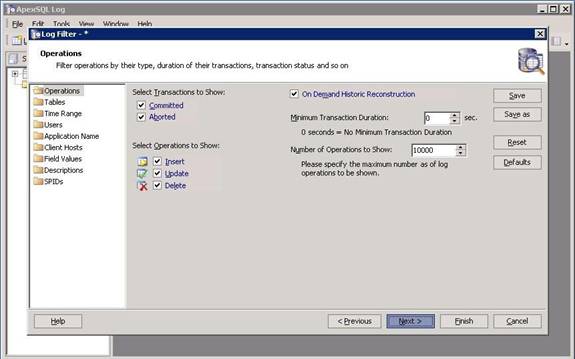

Once you’ve decided which database or databases, and which log file (or files), it gives you a lot of options for what to look for in the log. You can narrow it down as far as a specific transaction, whether the transaction was committed or rolled back, or it can be everything in the file, or anything in between.

Quite often, the most useful scan will be to select the Field Values folder and specify a specific primary key in a specific table. That will show you all the transactions for that row.

Everything up to here is fast. The next part, actually parsing out the log files and showing you what you’re looking for, often takes quite a while. While writing this, I have a parse of all activity in a table in a specific database running. It’s been parsing for 10 minutes now, and still isn’t done. It will finish (I’ve done this before and it often takes an hour or more). Right now, its progress bar is showing 1% progress about every 10 seconds.

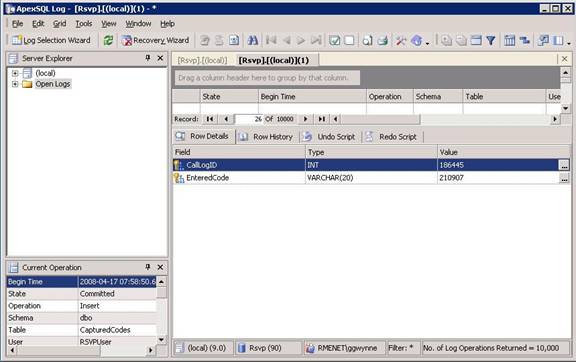

Once done, it presents you with the log view:

This includes data about which log file it came out of, which database and table, what data was inserted/updated/deleted, the type of transaction, etc. It also includes functions for creating an “undo script”, that will undo that particular transaction.

There are a ton of other features, but since there’s a free demo available, I’ll leave it up to you to discover which ones you want most to look at and play with.

I haven’t used the Red Gate version. I assume it’s similar. As mentioned, it only works for SQL 7/2000. I’m also told it’s being discontinued and won’t have a 2005 or 2008 version.

The Lumigent product has very similar functionality.

Summary

In summary, passive logging adds no processor or other overhead to your database. It can see every change made, so long as you have the backups and/or log files available. The main disadvantages are that it isn’t something that can be readily used by anyone other than a DBA, it’s a bit on the slow side (and that’s on my small, hundreds-of-transactions-per-minute databases; I can only imagine what it’s like on large, heavy-transaction databases), and it does cost money to get a parser.

The next article will go over active logging, which has a different set of advantages and disadvantages.