A transaction log is a file in which SQL Server stores a record of all the transactions and data modifications performed on the database with which the log file is associated. In the event of a disaster that causes SQL Server to shut down unexpectedly, such as an instance or hardware failure, the transaction log is used to recover the database, with data integrity in-tact. Upon restart, a database enters a recovery process in which the transaction log is read to ensure that all valid, committed data is written to the data files (rolled forward) and the effects any partial, uncommitted transactions are undone (rolled back). In short, the transaction log is the fundamental means by which SQL Server ensures database integrity and the ACID properties of transactions, notably durability.

Some of the most important duties of a DBA in regard to managing the transaction log are as follows:

- Choose the right recovery model – SQL Server offers three database recovery models: FULL (the default), SIMPLE and BULK LOGGED. The DBA must choose the appropriate model according to the business requirements for the database, and then establish maintenance procedures appropriate to that mode.

- Perform transaction log backups – unless working in SIMPLE mode, it is vital that the DBA perform regular backups of the transaction log. Once captured in a backup file, the log records can be subsequently applied to a full database backup in order to perform a database restore, and so recreate the database as it existed at a previous point in time, for example right before a failure.

- Monitor and manage log growth – in a busy database the transaction log can grow rapidly in size. If not regularly backed up, or if inappropriately sized, or assigned incorrect growth characteristics, the transaction log file can fill up, leading to the infamous "9002" (transaction log full) error, which puts SQL Server into a "read only" mode (or into "resource pending" mode, if it happened during recovery).

- Optimize log throughput and availability– in addition to basic maintenance such as taking backups, the DBA must take steps to ensure adequate performance of the transaction log. This include hardware considerations, as well as avoiding situation such as log fragmentation, which can affect the performance of transactions

In this Stairway series, we'll consider each of these core maintenance tasks in detail. Here, at the first Level, we'll start with an overview of how SQL Server uses the transaction log, and two of the most significant ways in which it impacts the life of a DBA, namely database restore and recovery, and disk space management.

How SQL Server uses the Transaction Log

In SQL Server, the transaction log is a physical file, identified conventionally, though not compulsorily, by the extension LDF. It is created automatically on creation of a database, along with the primary data file, commonly identified by the MDF extension, though again any extension can be used, which stores the database objects and the data itself. The transaction log, while generally implemented as a single physical file, can also be implemented as a set of files. However, even in the latter case, it is still treated by SQL Server as a single sequential file and, as such, SQL Server cannot and does not write in parallel to multiple log files, and so there is no performance advantage to be had from implementing the transaction log as multiple files. This is discussed in more detail in Level 7 – Sizing and Growing the Transaction Log.

Whenever T-SQL code makes a change to a database object (DDL), or the data it contains, not only is the data or object updated in the data file, but also details of the change are recorded as a log record in the transaction log. Each log record contains details regarding the ID of the transaction that performed the change, when that transaction started and ended, which pages were changed, the data changes that were made, and so on.

Note: The transaction log is not an audit trail. It does not provide an audit trail of changes made to the database; it does not keep a record of the commands that were executed against the database, just how the data changed as a result.

When a data modification is made, the relevant data pages are, hopefully, read from the data cache, or first retrieved from disk if they are not already in the cache. Data is modified in the data cache, and the log records to describe the effects of the transaction are created in the log cache. When a transaction is committed, the log records are written to the transaction log, on disk. However, the actual changed data may not be written to disk until a later time, when a database checkpoint occurs. Any page in the cache that has been modified since being read from disk so that the data value in cache is different from what's on disk is called a dirty page. These dirty pages may contain both:

- Data that has been committed and "hardened" to the transaction log file but not yet to the data file

- Data modified by open transactions i.e. those that have not yet been committed (or rolled back)

Periodic database checkpoint processes scan the data cache and flush all dirty pages to disk, at which point the modifications are reflected in the physical data file as well as the log file. This happens even in cases where a transaction is still open; during a checkpoint, dirty pages related to open transactions are flushed to disk, with SQL Server always ensuring that log records relating to these open transactions are flushed from the log cache to the transaction log file before the dirty pages are flushed to the data files.

Note:Another process that scans the data cache, the LazyWriter, may also write dirty data pages to disk, outside of a checkpoint, if forced to do so by memory pressures.

The important point to note here is that the log buffer manager always guarantees that the change descriptions (log records) are written to the transaction log, on disk, before the data pages are written to the physical data files. This mechanism is termed write-ahead logging. It is essentially the mechanism by which SQL Server ensures transaction Durability (see ACID properties of database transactions).

By always writing changes to the log file first, SQL Server has the basis for a mechanism that can guarantee that the effects of all committed transactions will ultimately be reflected in the data files, and that any data modifications on disk that originate from incomplete transactions, i.e. those for which neither a COMMIT nor a ROLLBACK have been issued are ultimately not reflected in the data files.

If a database crashes, for example, after a certain transaction (T1) is committed but before the affected data is written to the data file, then upon restart, the database recovery process is initiated which attempts to reconcile the contents of the transactions log file and the data files. It will read the transaction log file and ensure that all of the operations that comprise transaction T1, recorded in the log file, are "rolled forward" (redone) so that they are reflected in the data files.

Likewise, after a database crash, the recovery process will "roll back" (undo) any data changes in the database that are associated with uncommitted transactions, by reading the relevant operations from the log file, and performing the reverse physical operation on the data.

In this manner, SQL Server can return the database to a consistent state, in the event of a crash. More generally, the rollback (undo) process occurs if:

- A ROLLBACK command is issued for an explicit transaction

- An error occurs and XACT_ABORT is turned on

- If the database detects that communication has been broken between the database and the client that instigated the transactions.

In such circumstances, the log records pertaining to an interrupted transaction, or one for which the ROLLBACK command is explicitly issued, are read and the changes rolled back. In these ways, SQL Server ensures that either all the actions associated with a transaction succeed, as a unit, or that they all fail. As such, the transaction log represents one of the fundamental means by which SQL Server ensures data consistency and integrity during normal day-to-day operation.

However, the transaction log plays another, vital role in that it provides the mechanism by which the database can be restored to a previous point in time, in the event of a disaster. With proper planning and management, you can use backups of these log files to restore all of your data up to the point where it became corrupted or unusable.

The Transaction Log and Database Restores

As discussed earlier, a transaction log file stores a series of log records, sequential according to when the transaction started, which provide a historical record of the modifications and transactions that have been issued against that database. Each log record contains details regarding the ID of the transaction that performed the change, when that transaction started and ended, which pages were changed, the data changes that were made, and so on. Log records within the transaction log file are organized into multiple sections, called Virtual Log Files (VLFs) – these are covered in much more detail in Level 2 – Transaction Log Architecture.

SQL Server's write-ahead logging mechanism guarantees that the description of a modification (i.e. the log record) would be written to a VLF before the modified data itself was written to the data file. So, a log record may contain details of either a closed (committed) transaction or an open (uncommitted) transaction, and in each case the data modified by the transaction may or may not have been written to the data files, depending on whether or not a checkpoint has occurred.

Note:By regularly flushing dirty pages from cache to disk, the database checkpoint process controls the amount of work SQL Server needs to do during a database recovery operation. If SQL Server had to roll forward the changes for a huge number of committed transactions relating to dirty pages, then the recovery process could be very lengthy.

Any log record relating to an open transaction may be required for a rollback operation, during recovery, and will always be a part of what is termed an active VLF and will always be retained in the log file. A log record relating to a closed transaction will also be part of an active VLF, until the point is reached where there is no log record in the whole VLF that is associated with an open transaction, at which point the VLF becomes inactive.

The log records in these inactive VLFs essentially provide a "history" of the previously-completed database transactions, and what happens to these inactive VLFs varies depending on the recovery model of the database. We'll be discussing these recovery models in detail in Levels 3 - 6 of this Stairway, but the key point here is that, if you are using the FULL (or BULK LOGGED) database recovery model, then the transaction log retains the log records in inactive VLFs, until a log backup is taken (more on this shortly).

By backing up the transaction log, we can capture into a backup file the all log records in the live log, including the ones in these inactive VLFs. These log backups can then be used to restore your database to a previous point-in-time; hopefully a point in time very close to the point at which some "disaster" occurred. In the event of such a disaster, the log backup files can be applied to a restored copy of a full database backup file, and any transactions that occurred after the full backup will be "rolled forward", during database recovery, to recover the database and restore the data to a given point in time, and so minimize any data loss. Of course, this assumes that you have not only taken these log backups, but also transferred them to a safe location. If your log backup files are on the same drive as the live log file, and that drive crashes, then you may lose all your backups.

When a database is in SIMPLE recovery model (more on this in Levels 3 and 4), log records in the active VLFs are retained, as they may be required for a rollback operation. However, the inactive VLFs will be truncated when a checkpoint occurs, meaning that the log records in these VLFs can be immediately overwritten with new log records. This is why a database operating in SIMPLE recovery is referred to as being in auto-truncate mode. In this mode, no "history" is maintained in the log and so it cannot be captured in a log backup and used as part of the restore process.

Controlling the size of the log file

Hopefully, the preceding discussion has made it clear that, for most production databases that are operating in FULL recovery model, it will be necessary to take regular backups of the transaction log file, in order to enable recovery of the database to a particular point in time.

However, there is a second, important reason to take these log backups when operating in FULL (or BULK_LOGGED) mode and that is to control the size of the log. Remember that a log record is written to the log file for every transaction that modifies data or objects in a SQL Server database. In a busy system, with many concurrent transactions, or ones that write a lot of data, the transaction log can grow in size very quickly.

When working in FULL (or BULK_LOGGED) mode, capturing in a backup file a copy of the log records in inactive VLFs, is the only action that will make those VLFs eligible for truncation, meaning that the space occupied by the log records becomes available for reuse.

A brief note on truncation and the size of the transaction log: There is a common misconception that truncating the log file means that log records are deleted and the file reduces in size. It does not; truncation of a log file is merely the act of marking space as available for reuse. Truncation, in the context of each of the different recovery models, will be discussed in more detail in subsequent Levels.

Therefore, one of the reasons why it's vital to perform regular transaction log backups when working in FULL (or BULK_LOGGED) mode is to control the size of the log.

A Brief Example of Backing up the Transaction Log

In order to briefly illustrate some of the concepts we've discussed in this first Level, we'll walk through a very simple example of backing up the transaction log for a database operating in FULL recovery mode. Details of each individual processes and commands will be covered in much more detail in subsequent Levels.

In Listing 1.1, we create a new TestDB database on a SQL Server 2008 instance, and then immediately obtain the size of the log file using the DBCC SQLPERF (LOGSPACE) command.

USE master ;

IF EXISTS ( SELECT name

FROM sys.databases

WHERE name = 'TestDB' )

DROP DATABASE TestDB ;

CREATE DATABASE TestDB ON

(

NAME = TestDB_dat,

FILENAME = 'C:\Program Files\Microsoft SQL Server\MSSQL10.MSSQLSERVER\MSSQL\Data\TestDB.mdf'

) LOG ON

(

NAME = TestDB_log,

FILENAME = 'C:\Program Files\Microsoft SQL Server\MSSQL10.MSSQLSERVER\MSSQL\Data\TestDB.ldf'

) ;

DBCC SQLPERF(LOGSPACE) ;

Database Name Log Size (MB) Log Space Used (%) Status

---------------------------------------------------------

master 1.242188 34.27673 0

...<snip>...

TestDB 0.9921875 31.74213 0Listing 1.1: Initial log file size for the new TestDB database

As you can see, the log file is currently about 1 MB in size, and about 30% full.

Note: The initial size and growth characteristics of the user databases created on an instance are determined by the properties of the model database, as is the default recovery model that each database will use (FULL, in this case). We'll discuss the impact of these properties in a lot more detail in Level 7 – Sizing and Growing the Transaction Log.

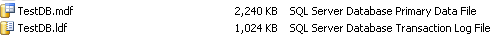

The size of the file can be confirmed by simply locating the physical files on disk, as shown in Figure 1.1.

Figure 1.1: Data and log file for TestDB

Let's now perform a backup of the data file for TestDB, as shown in Listing 1.2 (you'll first need to create the "Backups" directory). Note that this backup operation ensures the database truly is operating in FULL recovery mode; more on this in Level 3 – Transaction Logs, Backup and Recovery.

-- full backup of the database BACKUP DATABASE TestDB TO DISK ='C:\Backups\TestDB.bak' WITH INIT; GO

Listing 1.2: Initial full backup of TestDB

There is no change in the size of the data or log file, as a result of this backup operation, or in the percentage of log space used, which is perhaps unsurprising given there are no user tables or data in the database, as yet. Let's put that right, and create a table called LogTest on this database, fill it with 1 million rows of data, and re-check the log file size, as shown in Listing 1.3. The author of this script, seen regularly on the SQLServerCentral.com forums, is Jeff Moden, and it is reproduced with his kind permission. Do not worry about the details of the code; the only important thing here is that we're inserting a lot of rows. This code may take several seconds to execute on your machine, and that isn't because the code is inefficient; it's all the work going on behind the scenes, writing to the data and log files.

USE TestDB ;

GO

IF OBJECT_ID('dbo.LogTest', 'U') IS NOT NULL

DROP TABLE dbo.LogTest ;

--===== AUTHOR: Jeff Moden

--===== Create and populate 1,000,000 row test table.

-- "SomeID" has range of 1 to 1000000 unique numbers

-- "SomeInt" has range of 1 to 50000 non-unique numbers

-- "SomeLetters2";"AA"-"ZZ" non-unique 2-char strings

-- "SomeMoney"; 0.0000 to 99.9999 non-unique numbers

-- "SomeDate" ; >=01/01/2000 and <01/01/2010 non-unique

-- "SomeHex12"; 12 random hex characters (ie, 0-9,A-F)

SELECT TOP 1000000

SomeID = IDENTITY( INT,1,1 ),

SomeInt = ABS(CHECKSUM(NEWID())) % 50000 + 1 ,

SomeLetters2 = CHAR(ABS(CHECKSUM(NEWID())) % 26 + 65)

+ CHAR(ABS(CHECKSUM(NEWID())) % 26 + 65) ,

SomeMoney = CAST(ABS(CHECKSUM(NEWID())) % 10000 / 100.0 AS MONEY) ,

SomeDate = CAST(RAND(CHECKSUM(NEWID())) * 3653.0 + 36524.0 AS DATETIME) ,

SomeHex12 = RIGHT(NEWID(), 12)

INTO dbo.LogTest

FROM sys.all_columns ac1

CROSS JOIN sys.all_columns ac2 ;

DBCC SQLPERF(LOGSPACE) ;Listing 1.3: Inserting a million rows into the LogTest table, in TestDB

Notice that the log file size has ballooned to almost 110 MB and the log is 91% full (the figures might be slightly different on your system). If we were to insert more data, it would have to grow in size again to accommodate more log records. Again, the size increases can be confirmed from the physical files (the data file has grown to 64 MB).

We can back up the data file again at this point, by re-running Listing 1.2, and it will make no difference to the size of the log file, or the percentage of space used in the file. Now, however, let's back up the transaction log file and recheck the values, as shown in Listing 1.4.

-- now backup the transaction log BACKUP Log TestDB TO DISK ='C:\Backups\TestDB_log.bak' WITH INIT; GO DBCC SQLPERF(LOGSPACE) ; Database Name Log Size (MB) Log Space Used (%) Status ------------------------------------------------------- master 1.242188 63.52201 0 ...<snip>… TestDB 99.74219 6.295527 0

Listing 1.4: Backing up the transaction log for TestDB

The log file is still the same physical size, but by backing up the file, SQL Server is able to truncate the log, making space in the 'inactive' VLFs in the log file available for reuse; more log records can be added without needing to physically grow the file. Also, of course, we've captured the log records into a backup file and so would be able to use that file as part of the database recovery process, should we need to restore the TestDB database to a previous state.

Summary

In this first Level, we've introduced the transaction log, and explained how it's used by SQL Server to maintain data consistency and integrity, via a write-ahead logging mechanism. We've also described, and briefly demonstrated, how a DBA can capture the contents of the transaction log file into a backup file, which can then be reused to restore the database as part of a recovery process. Finally, we stressed the importance of backups in controlling the size of the transaction log.

In the next Level, we'll take a closer look at the architecture of the transaction log.