There are times when you need to create a “quick and dirty” solution to build a report. This blog will show you one way of using a few Azure products to accomplish that. This should not be viewed as a replacement for a data warehouse but rather as a way to quickly show a customer how to get value out of their data or if you need a one-time report or if you just want to see if certain data would be useful to move into your data warehouse.

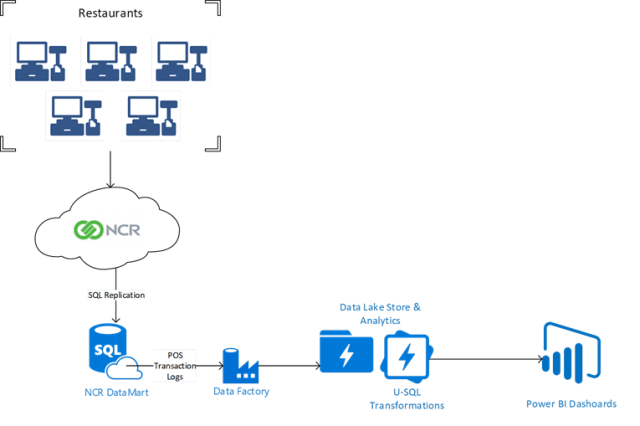

Let’s look at a high-level architecture for building a report quickly using NCR data (restaurant data):

This solution has the restaurant data that is in on-prem SQL Server replicated to Azure SQL Database using transactional replication. Azure Data Factory is then used to copy the point-of-sale transactions logs in Azure SQL Database into Azure Data Lake Store. Then Azure Data Lake Analytics with U-SQL is used to transform/clean the data and store it back into Azure Data Lake Store. That data is then used in Power BI to create the reports and dashboards (business users can build the models in Power BI and the data can be refreshed multiple times during the day via the new incremental refresh). This is all done with Platform-as-a-Service products so there is nothing to setup and install and no VMs – just quickly and easily doing all the work via the Azure portal.

This solution is inexpensive since there is no need for the more expensive services like Azure SQL Data Warehouse or Azure Analysis Services, and Azure Data Lake Analytics is a job service that you only pay for when the query runs (where you specify the account units to use).

Some things to keep in mind with a solution like this:

- Power BI has been called “reporting crack” because once a business user is exposed to it they want more. And this solution gives them their first taste

- This solution should have a very limited scope – it’s more like a proof-of-concept and should be a short-term solution

- It takes the approach of ELT instead of ETL in that data is loaded into Azure Data Lake Store and then converted using the power of Azure Data Lake Analytics instead of it being transformed during the move from the source system to the data lake like you usually do when using SSIS

- This limits the data model building to one person using it for themselves or a department verses have multiple people build models for an enterprise solution using Azure Analysis Services

- This results in quick value but sacrifices an enterprise solution that includes performance, data governance, data history, referential integrity, security, and master data management. Also, you will not be able to use tools that need to work against a relational format

- This solution will normally require a power user to develop reports since it’s working against a data lake instead of a easier-to-use relational model or a tabular model

An even better way to get value of of data quickly is with another product that is in preview called Common Data Service for Analytics. More on this in my next blog.