SANs and virtual platforms are fast becoming the norm for most companies and authorities. This is all well and good for those who work with these technologies and understand them, but what about the DBA? After all, if his or her SQL Server will be connecting to backend SAN storage, the DBA still needs to understand a little about what it does. This is even more true if the server is also a virtual machine. This article will give you some basics on how these things affect your database instance.

Let's start by breaking down the SAN.

What is a SAN?

Storage Area Networks basically consist of racks of disks connected by switches. The disks are grouped into arrays, which are then "sliced" up into logical units called "LUNs". The switches used are not ordinary switches; they are fibre channel units supporting specialized protocols (lately FC-IP).

But why does the admin always refer to it as the fabric?

The "Fabric" is the term used to describe the high speed fibre channel network which comprises the SAN. This network consists of fibre channel switches, fibre optic cables and host bus adaptors (HBAs). Some older SAN technologies use hubs instead of switches, but these are now rare. The network configuration is usually automatic, in that each switch registers a device's WWN when the device is plugged into one of its ports. Each device has its own WWN, which is a 64 bit unique ID (sort of like a MAC address but longer). This WWN is registered in the switch's internal routing table and essentially builds a map. LUNs can be zoned to provide secure access between given ports. At the server end sits the HBA. An HBA is a plug in card much like a NIC, and most have dual port capability; the fibre optic cable plugs into these ports. All these components link together and essentially present the storage as though it were locally attached.
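To make the WWN and zoning ideas a little more concrete, here is a minimal Python sketch. It is only a toy model: the `Fabric` class, the port names and the WWN values are all made up for illustration, and it zones by WWN membership, which is a simplification of what real switch firmware does.

```python
def format_wwn(value: int) -> str:
    """Render a 64 bit WWN as eight colon-separated hex bytes."""
    if not 0 <= value < 2**64:
        raise ValueError("a WWN must fit in 64 bits")
    return ":".join(f"{b:02x}" for b in value.to_bytes(8, "big"))


def parse_wwn(text: str) -> int:
    """Parse the colon-separated form back into an integer."""
    parts = text.split(":")
    if len(parts) != 8:
        raise ValueError("expected eight colon-separated bytes")
    return int.from_bytes(bytes(int(p, 16) for p in parts), "big")


class Fabric:
    """Toy model of a fabric: a name table plus a set of zones."""

    def __init__(self):
        self.name_table = {}  # switch port -> WWN registered on that port
        self.zones = {}       # zone name -> set of member WWNs

    def login(self, port: str, wwn: int) -> None:
        # A device is plugged in and the switch records its WWN.
        self.name_table[port] = wwn

    def add_zone(self, name: str, members) -> None:
        self.zones[name] = set(members)

    def can_talk(self, wwn_a: int, wwn_b: int) -> bool:
        # Two devices may communicate only if they share at least one zone.
        return any(wwn_a in m and wwn_b in m for m in self.zones.values())


# Hypothetical WWNs, purely for illustration.
hba = parse_wwn("10:00:00:00:c9:12:34:56")
array = parse_wwn("50:06:01:60:3b:a0:12:34")
other = parse_wwn("10:00:00:00:c9:ab:cd:ef")

fabric = Fabric()
fabric.login("switch1/port3", hba)
fabric.login("switch1/port7", array)
fabric.login("switch1/port9", other)
fabric.add_zone("sql1_zone", [hba, array])

print(format_wwn(hba))
print(fabric.can_talk(hba, array))
print(fabric.can_talk(other, array))
```

The SQL Server's HBA shares a zone with the storage port and so can reach it, while the third device is logged in to the same switch but sits outside the zone.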

How does it provide failover?

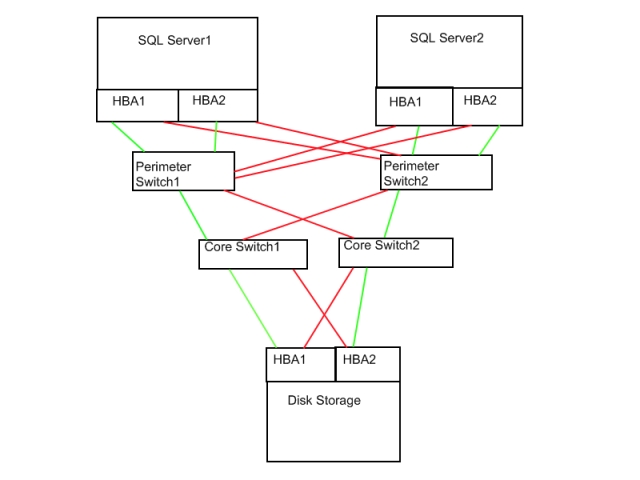

Multiple fabrics are usually employed to multi home devices to the disk storage. The diagram above shows a basic fabric configuration.

Although a little crude, you should get the basic idea. Note the multi homing between the HBAs and the switches to provide a failover path at any point. I have depicted SQL Server1 and SQL Server2 in the diagram above, but these could quite easily be ESX hosts with virtual machines running inside them. Obviously the more devices you add (HBAs and switches), the more links you create to provide multi homed redundancy, and the larger fabrics get the more complex they usually become.

In our scenario each HBA and switch is linked to multiple partners. Perimeter Switch1 and Core Switch1 make up Fabric1, while Perimeter Switch2 and Core Switch2 make up Fabric2, so even with a core switch and a server HBA failure your server still has a path through to the storage. Another reason for this design is that it allows a whole fabric to be taken offline and repaired or administered without affecting storage access. Currently speeds of 2-4 Gbps are supported, but these speeds are ever increasing. For all their complexity, SANs are essentially no different to Ethernet networks (well, maybe just a little).
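If you want to convince yourself that the redundancy holds, the fabric can be treated as a simple graph and searched for a surviving path. The sketch below is a rough illustration only; the exact links are my assumption (each HBA cabled to both perimeter switches, each perimeter switch to the core switch in its own fabric), and the node names are invented.

```python
from collections import deque

# Assumed cabling for one server; the real diagram may differ.
LINKS = [
    ("server", "hba1"), ("server", "hba2"),
    ("hba1", "perimeter1"), ("hba1", "perimeter2"),
    ("hba2", "perimeter1"), ("hba2", "perimeter2"),
    ("perimeter1", "core1"), ("perimeter2", "core2"),
    ("core1", "storage"), ("core2", "storage"),
]


def has_path(links, start, goal, failed=()):
    """Breadth-first search that ignores any failed component."""
    failed = set(failed)
    if start in failed or goal in failed:
        return False
    neighbours = {}
    for a, b in links:
        if a in failed or b in failed:
            continue  # a link is unusable if either end is down
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for nxt in neighbours.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


# One HBA and one core switch fail together: a path still exists.
print(has_path(LINKS, "server", "storage", failed=["hba1", "core2"]))
# All of Fabric1 is taken offline for maintenance: still reachable.
print(has_path(LINKS, "server", "storage", failed=["perimeter1", "core1"]))
# Both core switches fail: storage is lost.
print(has_path(LINKS, "server", "storage", failed=["core1", "core2"]))
```

Under these assumed links the first two checks succeed and only the double core switch failure cuts the server off from its storage.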

So what is virtualization?

Modern virtualization technologies (hypervisors) are merely a software layer which shares common hardware components among software based machines. The products work very differently from one another, so it's best to clear this up from the start.

During some of my previous scoping projects, a vendor indicated to me that they didn't support their software on VMware virtual machines, as some of their customers had tried this and encountered performance problems. I became suspicious and asked the vendor which platform the customers had used. It turned out (much as I expected) they were using VMware Server.

Now, don't get me wrong, this product and Microsoft Virtual Server 2005 Enterprise are both ideal entry level virtualization platforms, but they should not be used in anger for a product such as a full blown GIS system or a SQL or Exchange server. Having said that, I did have a 2 node SQL 2005 cluster running on a laptop under MS VS2005 Enterprise. The laptop only died when I booted up the 3rd VM, which was a Windows 2008 server (Beta) 🙂, funny that!

The premium product currently on the market is VMware's VI3, which incorporates ESX Server 3.x. This host platform is extremely powerful and works quite differently to the previously mentioned products. VI3 uses a management server backed by either a SQL Server or Oracle RDBMS, which is used to track and configure the entities in the virtual infrastructure.

The heart of the ESX server is the VMkernel (yes, it's a UNIX machine at heart), which is originally based on the Red Hat 2.4.x kernel. It is totally stripped down and then rebuilt by the VMware engineers. The VMkernel directly executes CPU code wherever possible. The exceptions are special or privileged instructions, which go through a process called binary translation: the binary machine instructions are intercepted and recompiled before being sent on to the virtual machine. The direct instruction execution affords the hypervisor superior performance, as it does not emulate any instruction set, but it can cause problems when moving VMs between hosts with disparate hardware (different CPUs specifically). When designing your virtual infrastructure it is best to decide on uniform hardware for the hosts from the outset, to try and alleviate any problems that may arise as a result of different hardware (in other words, don't buy 3 hosts with AMD Opteron and one with Intel Xeon).
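The uniform hardware advice is easy to check against a host inventory. The following is a small sketch under stated assumptions: the host names and the `odd_hosts_out` helper are hypothetical, and a real check would compare CPU families and feature sets rather than a simple label.

```python
from collections import Counter


def odd_hosts_out(hosts):
    """Return the hosts whose CPU differs from the most common CPU."""
    counts = Counter(hosts.values())
    if not counts:
        return []
    majority, _ = counts.most_common(1)[0]
    return sorted(name for name, cpu in hosts.items() if cpu != majority)


# Hypothetical inventory matching the example in the text.
hosts = {
    "esx01": "AMD Opteron",
    "esx02": "AMD Opteron",
    "esx03": "AMD Opteron",
    "esx04": "Intel Xeon",
}

print(odd_hosts_out(hosts))
```

Here the single Intel Xeon host is flagged as the one likely to cause trouble when VMs are moved between hosts.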

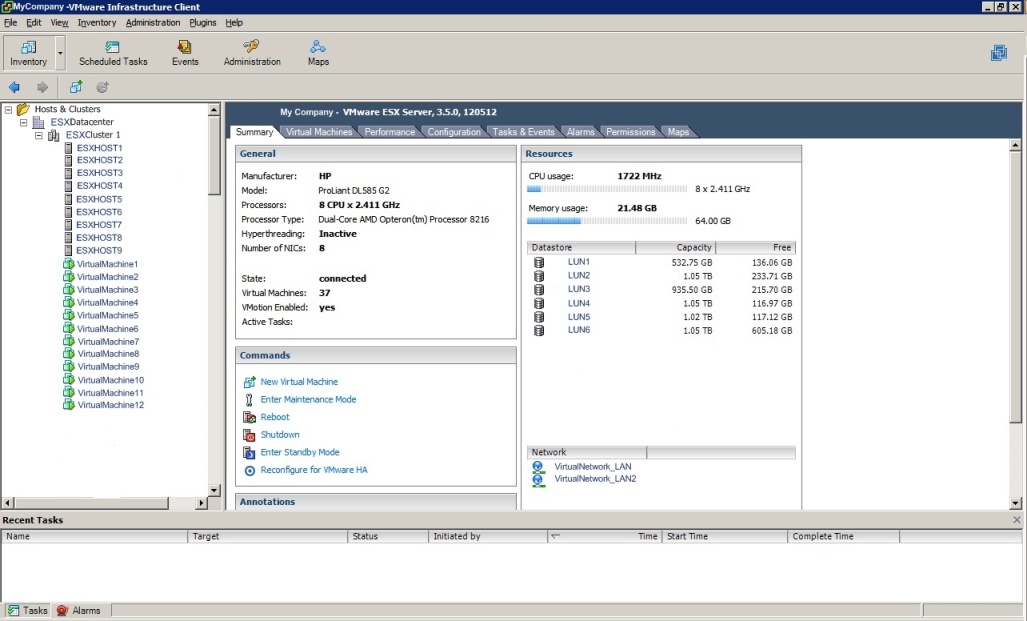

Each virtual machine runs in its own memory and process space, so there is no danger of machines crossing address spaces. The infrastructure uses a .NET client which allows full management of the system, including configuring storage, virtual machines, networking, etc. The screenshot above shows the VMware Virtual Infrastructure Client for a configured VI3 system. Note the storage LUNs under datastores.

The HBAs are installed in each ESX host, and the disk stores (storage LUNs) are configured using the SAN management software and presented to the virtual infrastructure via the HBAs. VI3 has 3 unique options: "HA", "DRS" and "VMotion". "VMotion" is the proprietary technology VMware uses to migrate a VM from one host to another while it is running live. "VMotion" uses a special private network (at least 1 Gbps, configured on each host) which passes the VM configuration details and memory footprint between hosts. This traffic is unencrypted, hence the high speed private network. "HA" is the high availability option, which allows VMs to be automatically resumed on another partner host should the original host fail for some reason. HA uses a heartbeat technology (again over a private network), and detection of a failure initiates a restart on another host. "DRS" is the process of distributing VMs across hosts to relieve memory and CPU pressures; it uses resource pools and works in conjunction with VMotion. With resource pools it is possible to group VMs and throttle them accordingly.
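The HA idea of heartbeat detection followed by a restart elsewhere can be sketched in a few lines of Python. This is not how VMware implements it; the 15 second timeout, the function names, the host names and the memory figures are all assumptions made for the sake of the example.

```python
def failed_hosts(last_heartbeat, now, timeout=15.0):
    """Hosts whose last heartbeat is older than the (assumed) timeout."""
    return sorted(h for h, seen in last_heartbeat.items() if now - seen > timeout)


def plan_restarts(placement, vm_memory_gb, free_memory_gb, dead):
    """Pick a surviving host for every VM that was on a dead host.

    Largest VMs are placed first, each on the host with the most free
    memory. A VM that fits nowhere is mapped to None.
    """
    free = {h: gb for h, gb in free_memory_gb.items() if h not in dead}
    stranded = [vm for vm, host in placement.items() if host in dead]
    stranded.sort(key=lambda vm: (-vm_memory_gb[vm], vm))
    plan = {}
    for vm in stranded:
        need = vm_memory_gb[vm]
        candidates = sorted(h for h, gb in free.items() if gb >= need)
        if not candidates:
            plan[vm] = None
            continue
        target = max(candidates, key=free.get)
        free[target] -= need
        plan[vm] = target
    return plan


# Hypothetical cluster state, times in seconds.
last_heartbeat = {"esx01": 100.0, "esx02": 118.0, "esx03": 119.0}
placement = {"sql1": "esx01", "exch1": "esx01", "file1": "esx02"}
vm_memory_gb = {"sql1": 8, "exch1": 4, "file1": 2}
free_memory_gb = {"esx01": 0, "esx02": 6, "esx03": 10}

dead = failed_hosts(last_heartbeat, now=120.0)
plan = plan_restarts(placement, vm_memory_gb, free_memory_gb, dead)
print(dead)
print(plan)
```

In this made-up cluster esx01 has gone quiet, so its two VMs are planned onto the surviving hosts that have enough free memory. The same "most free resource first" idea is a crude stand-in for the kind of balancing DRS performs.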

With virtualization and SANs fast becoming cheaper and more popular, there is more of a requirement for the DBA to understand these technologies. Many organizations worldwide are seeing a lot of success virtualizing SQL servers, Exchange servers, file servers, domain controllers and even software firewalls. Reducing datacentre size and power consumption are primary goals, and virtualization also contributes heavily to streamlined corporate DR processes. After all, if all your mission critical servers are VMs on one host then DR becomes easier and more manageable. There are many documents available on the Internet detailing virtualization platforms and SAN topologies. For VMware links go to

Must-have books are:

- SAN's for Dummies

- ESX server in the Enterprise by Edward L Haletky

Hopefully this article will help clear up some of the mysteries surrounding SANs and virtualization. It should now be down to you to complete the further reading.