In June of 2009, Fusion-IO announced the Duo line with a price point that suddenly made SSD a viable alternative to conventional disk arrays. A fully loaded MSA 70 from HP with twenty-four 73GB 15k drives will set you back around $10,000. The Fusion-IO Duo 640 retailed for $15,500. You would pay a premium at the dollar per gigabyte level, but the cost per IO, an important factor for SQL Server, is very competitive. At eScan Data Systems our current main production database would fit inside 640GB with a little room left over, so the goal was to start with two cards per machine, mirrored in a RAID 10 array for redundancy.
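To put the dollar per gigabyte premium in rough numbers, here is a small sketch using only the prices and capacities quoted above. It works on raw capacity before any RAID overhead, which is my simplifying assumption, and the function name is just for illustration.

```python
def dollars_per_gb(price_usd: float, raw_capacity_gb: float) -> float:
    """Cost per raw gigabyte, ignoring RAID overhead and formatting loss."""
    return price_usd / raw_capacity_gb

# HP MSA 70: 24 drives x 73GB for about $10,000 (figures from the text).
msa70 = dollars_per_gb(10_000, 24 * 73)

# Fusion-IO Duo 640: 640GB for $15,500 (figures from the text).
duo640 = dollars_per_gb(15_500, 640)

print(f"MSA 70:  ${msa70:.2f} per GB")
print(f"Duo 640: ${duo640:.2f} per GB")
print(f"Premium: {duo640 / msa70:.1f}x per GB")
```

The same division with measured IOs per second in place of gigabytes gives the cost per IO, which is where the cards pull ahead.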

There is one flaw in this plan. Windows doesn’t support RAID 10 in the logical disk manager, only RAID 0, 1, and 5. This matters because each card actually shows up as two physical drives in Windows, hence the “Duo” moniker, so two cards means four drives to stripe and mirror. I had used Symantec’s volume manager years ago and remembered liking it. I also remembered it was very expensive. Doing some digging around, I found that they now offer VERITAS Storage Foundation Basic for free! Of course there are limitations. The free version is limited to 4 user-data volumes, and/or 4 user-data file systems, and/or 2 processor sockets in a single physical system. This wasn’t an issue for my test server or my front line servers. If we got to a point where it would be a problem, buying Storage Foundation wouldn’t be an issue.

We only had two available slots in the server, so two cards were fine for the initial purchase but left us no room for growth. We needed a plan for growth. Many moons ago, I had worked with PCI expander boxes. These are chassis with several PCI slots that hook back to your computer via a cable and a PCI card. The problem was that you very quickly ran out of bandwidth. If you needed capacity but not speed, they were great. With that in mind, I started searching for PCIe expander chassis. I was surprised at how few companies service this market. What I found was that this segment was concerned mostly with adding video cards to a system to build a render farm. They were all 2U to 4U in height and didn’t have redundant power supplies. I did get a recommendation for a 1U expansion chassis, but it only has one power supply. It is fast though, supporting a PCIe 2.0 16x connection back to the server. If you need the speed, One Stop Systems is your hookup.

We settled on a 3U box that accepted a standard ATX power supply. We planned to swap out the power supply for a redundant model. It would still run out of throughput though, only supporting a PCIe 2.0 8x add-in card and 4 PCIe slots that are 16x mechanical with 8x lanes. The Fusion-IO card required a PCIe 2.0 8x mechanical slot and 4x lanes of bandwidth. I wasn’t as worried about the throughput limit as you would expect me to be. I know there are other expanders coming on to the market that will be PCIe 2.0 16x, so we still had a path to upgrade if bandwidth became an issue. After checking the ioDrive Duo specification sheet we were confident enough to test out what we hoped would be our new IO platform.
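As a back-of-the-envelope check on where the expander would run out of throughput, the sketch below compares the 8x uplink against four cards at 4x lanes each. The roughly 500 MB/s per lane figure is the usual PCIe 2.0 number (5 GT/s with 8b/10b encoding, per direction); the function names are mine, and real-world throughput will be lower once protocol overhead is counted.

```python
# Approximate usable PCIe 2.0 bandwidth per lane, per direction, in MB/s.
PCIE2_MB_PER_LANE = 500

def link_bandwidth_mb(lanes: int) -> int:
    """Theoretical one-way bandwidth of a PCIe 2.0 link with this many lanes."""
    return lanes * PCIE2_MB_PER_LANE

def oversubscription(uplink_lanes: int, lanes_per_card: int, cards: int) -> float:
    """How many times the cards' combined lanes exceed the uplink's lanes."""
    return (lanes_per_card * cards) / uplink_lanes

uplink_mb = link_bandwidth_mb(8)       # expander's 8x link back to the server
card_mb = link_bandwidth_mb(4)         # each card needs 4x lanes
ratio = oversubscription(uplink_lanes=8, lanes_per_card=4, cards=4)

print(f"Uplink: {uplink_mb} MB/s, per card: {card_mb} MB/s")
print(f"Four cards oversubscribe the uplink {ratio:.1f}:1")
```

In other words, two cards can in theory saturate the 8x uplink on their own, which is why a 16x expander matters as an upgrade path.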

With all the key parts in play it was time to plug the two Fusion-IO Duo 640s into my test machine. My test box had lots of PCIe expansion slots and enough power, so I wouldn’t have to use the expander box during our initial test for performance and scalability. Our first rounds of testing would be for speed.

The Setup

This is a retired production server consisting of:

- SuperMicro chassis and motherboard

- Intel Xeon E5410 x 2

- 32GB RAM

- LSI 8480E RAID HBA x 2

- 15 Drive bay Chassis x 5

- 70 Usable Hard Drives

- Fusion-IO Duo 640 x 2

The Big Picture

Using SQLIO, or any benchmark tool, to get the maximum performance numbers from your system isn't that helpful. Since we are concerned with just SQL Server performance, we need to focus on SQL Server's access patterns. To accomplish that I set the pending IO requests to 2, set the IO request size to 8K and 64K, and ran random and sequential read and write tests.
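The test matrix described above is small enough to script. This is a sketch that only builds the SQLIO command lines; it does not run them. The pending IO count (-o2), block sizes, and access patterns come from the text, while the thread count, run length, latency and buffering flags, and the test file path are placeholders I picked for illustration, not the settings used in these tests.

```python
from itertools import product

def sqlio_commands(test_file: str, threads: int = 4, seconds: int = 120) -> list[str]:
    """Build one SQLIO command per block size, access pattern, and IO type."""
    commands = []
    for block_kb, pattern, io_type in product(
        (8, 64),                      # IO request sizes in KB
        ("random", "sequential"),     # access patterns
        ("R", "W"),                   # read and write passes
    ):
        commands.append(
            f"sqlio -k{io_type} -f{pattern} -b{block_kb} -o2 "
            f"-t{threads} -s{seconds} -LS -BN {test_file}"
        )
    return commands

# Hypothetical test file location; substitute a file on the volume under test.
for cmd in sqlio_commands(r"E:\sqlio_test.dat"):
    print(cmd)
```

That gives eight runs per volume, which lines up with the four charts for each block size.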

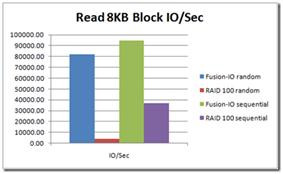

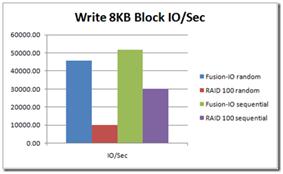

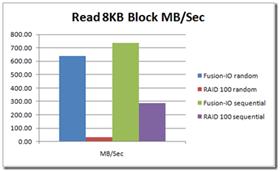

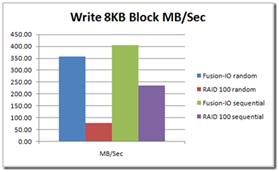

8KB Block Request Size

At 8KB block size the difference between the Fusion-IO and the RAID 100 setup is stark. Even with the write cache on the RAID HBAs set to 100%, they weren’t able to come close to the IO throughput of the Fusion-IO cards.

There are a couple of anomalies brought on by the cache settings on the RAID HBAs. Random read is low since there isn’t any read caching going on at the HBA level. I could have readjusted the settings, but I would have taken a significant hit on writes. Since our systems are write heavy it wasn’t prudent to do so. Writes get the performance boost of the cache and hit 10,000 random IOs a second. Sequential IOs are three times that at 30,000 a second.

In the throughput category, we see the same pattern. Lack of any read cache hurts the RAID setup on random reads. The Fusion-IO cards give as much as a 50x boost to random throughput and 2.5x in sequential throughput over the RAID setup. The RAID setup closes the gap a bit on writes but not by much.

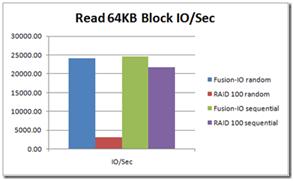

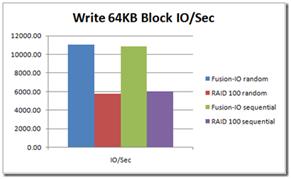

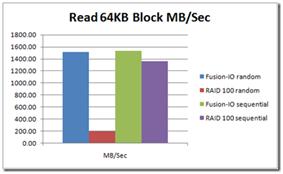

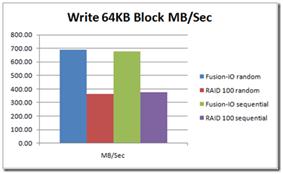

64KB Block Request Size

At 64KB block size things look pretty much the same. You will see the same anomaly due to the cache settings on the RAID 100 setup. Again on just sequential read throughput the RAID 100 setup isn’t that far off the Fusion-IO high water mark. The Fusion-IO cards again set the high water mark for write performance.

Sequential access numbers for the RAID 100 setup come very close to, but not quite to the same level as, the Fusion-IO cards. Again, the performance gains in random access by the Fusion-IO setup are just staggering, outpacing the RAID setup 10x. On write performance the gap narrows, with Fusion-IO posting better than 2x the performance of the RAID array.

Random read throughput for the Fusion-IO cards again impresses me, breaking 1.4GB a second where the RAID array stays around 200MB a second. Sequential throughput isn't as clear cut, with the RAID array coming within striking distance of the Fusion-IO cards. On write performance the RAID array again gets closer, falling only 2x behind Fusion-IO.

Issues Arise

The Fusion-IO cards delivered on every front. At this point I moved on to pre-production testing, where I put copies of the production databases on the test system and started replaying traces, capturing additional numbers and estimating total improvement times. This is where I hit my first major snag with the Fusion-IO setup. The Fusion-IO cards suffered seemingly random failures under pre-production testing.

The Problem

The problem was pretty simple to diagnose once I talked to the technical support people at Fusion-IO. We were running out of system memory. From the manual: “(You need) 2GB of free memory in RAM for every 80GB of storage space on the ioDrive(s)”. That means I need 32 gigabytes of RAM available to service all 1.28 terabytes of raw space across the two cards. I’ve only got 32 gigabytes of RAM on the system.
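The arithmetic behind that 32 gigabyte figure is worth spelling out. This sketch applies the manual's rule of 2GB of RAM per 80GB of storage to two 640GB cards; the function and variable names are mine, and the rule itself is the only input taken from Fusion-IO.

```python
def driver_ram_gb(storage_gb: float, ram_per_chunk_gb: float = 2.0,
                  chunk_gb: float = 80.0) -> float:
    """RAM the driver may need, per the manual's 2GB-per-80GB guideline."""
    return storage_gb / chunk_gb * ram_per_chunk_gb

cards = 2
card_capacity_gb = 640
system_ram_gb = 32

needed = driver_ram_gb(cards * card_capacity_gb)
left_over = system_ram_gb - needed

print(f"Driver may need {needed:.0f} GB of RAM")
print(f"Left for Windows and SQL Server: {left_over:.0f} GB")
```

With nothing left over for the OS or SQL Server, the failures under load stop looking random.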

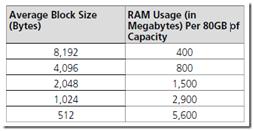

On the same page, they have a chart that maps out RAM usage in detail. It goes a lot higher than 2GB. The Fusion-IO folks tell me that the 512 byte block size is a worst case scenario. From what I understand, the drive uses system memory to help map out the solid state storage on the cards improving performance. If you have been reading about solid state disks you can find several articles on how the performance suffers when the disk is near full capacity or has been under a heavy write load.

The new TRIM feature implemented in Windows 7 helps address some of these issues. What the Fusion-IO folks have is TRIM on steroids. The root of the problem is how they handle memory pressure: if the Fusion-IO driver can’t allocate system memory for the write map, the drive goes off-line to prevent any data loss. I was assured that the data stored on the card wasn’t at risk. Through the course of troubleshooting the problem I found that our system does a burst of very small log file writes that quickly put the system under pressure with SQL Server already consuming 24 GB of RAM. It was enough to push the cards off-line.

The Fix

Once we had identified the problem, they gave me a driver that had the ability to set the sector size to 4KB. You may have noticed that these writes were as small as a sector on a traditional disk, which is currently 512 bytes. The new standard that is slowly rolling out is a larger 4KB sector. In the SQL Server documentation you will find that SQL Server supports a 4KB sector.

If you look back at the chart, making 4KB the smallest possible write takes us from 5.6 gigabytes per 80 gigabytes down to 800 megabytes per 80 gigabytes. Since you can’t write a unit smaller than a single sector, the larger sector forces the writes to be 4KB instead of 512 bytes. Even with the reduction, I was still looking at having 12.8 gigabytes of memory reserved for the Fusion-IO driver, cutting SQL Server back to 10 gigabytes. Also, having to convert all my databases from a 512 byte block to a 4KB block is a road block. There is no way to do this other than copying all the data to a new database that is created on the new 4KB sector size.
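To see what the sector size change buys across both cards, this sketch scales the two per-80GB figures quoted from the chart up to the full 1280GB of raw space. Only those two values come from the chart; the dictionary layout and names are illustrative.

```python
# Worst-case driver RAM per 80GB of storage, by sector size in bytes,
# using the two figures quoted from the Fusion-IO chart.
RAM_GB_PER_80GB = {512: 5.6, 4096: 0.8}

def worst_case_ram_gb(storage_gb: float, sector_bytes: int) -> float:
    """Worst-case driver RAM for the given storage size and sector size."""
    return storage_gb / 80 * RAM_GB_PER_80GB[sector_bytes]

raw_storage_gb = 2 * 640  # two Duo 640 cards

for sector_bytes in sorted(RAM_GB_PER_80GB):
    ram = worst_case_ram_gb(raw_storage_gb, sector_bytes)
    print(f"{sector_bytes:>4} byte sectors: {ram:.1f} GB of RAM")
```

Moving to 4KB sectors cuts the worst case by a factor of seven, but on a 32 gigabyte server the remaining reservation is still a large slice of memory.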

With the memory allocation still pretty large and the time it would take to convert all our data to the new databases, I had to change my strategy. We can’t use the larger cards in our current setup, but we did settle on the smaller 160 gigabyte ioDrives to house tempdb since almost 50% of our IO runs through it. That strategy is working out well and giving us some head room to plan for our next round of servers, where we can properly plan and size them with Fusion-IO in mind. They have also announced an updated 2.0 driver that is due out soon. It reduces the memory consumption by as much as tenfold over the current driver. With that and a 4KB block size, memory pressure should be a thing of the past for them.

Conclusions

Fusion-IO has lots of success stories. I consider this to be one as well. Even though we weren't able to implement the cards as-is in our current environment, they did prove to me that solid state storage is the way forward. As with any new cutting-edge technology, it may not be a perfect fit for everyone. With the performance numbers that the cards put up, I can’t imagine not using them in the future. With the proper planning and the new drivers I have no doubt that Fusion-IO will be eScan's choice for main storage in the near future.