In this article I’ll demonstrate how one can learn more about the queries that are sent

to analysis services.

Every analysis services administrator should know about the table named “QueryLog”. This table contains information about the queries, which are submitted to analysis services. By default this table is placed in an Access file, in the \Bin directory of the Microsoft analysis services path. I would recommend that this table will be moved to a SQL Server database. Simply use the import / export wizard to copy this table to your OLAP repository database. Do not use the default msdb database!

After exporting this table to SQL Server database, you need to tell the Microsoft OLAP server that queries will be logged into that table. To do so, open the registry editor, seek the key “hkey_local_machine -> software -> Microsoft -> OLAP server -> CurrentVersion “ QueryLogConnectionString and RemoteQueryLogConnectionString. Replace the text with the connection string to your SQL Server database, something like “Provider=SQLOLEDB.1;Integrated Security=SSPI;Persist Security Info=False;Initial Catalog=yours_olap_database;Data Source=your_sql_server”.

Note, after moving the table from Access to SQL Server, the SQL Server service should always be started to ensure that this information can be logged.

Now all new information about queries will be written to this SQL Server table. Remember, that after a cube is changed, query information about that cube is deleted from the table. To save information about all user queries I created new table named QueryLog_history, the same as QueryLog. The script for the QueryLog_history is:

--Ramunas Balukonis 2004.04.15

CREATE TABLE [dbo].[QueryLog_history] (

[MSOLAP_Database] [nvarchar] (255) NULL ,

[MSOLAP_Cube] [nvarchar] (255) NULL ,

[MSOLAP_User] [nvarchar] (255) NULL ,

[Dataset] [nvarchar] (64) NULL ,

[Slice] [nvarchar] (64) NULL ,

[StartTime] [datetime] NULL ,

[Duration] [int] NULL ,

[MOLAPPartitions] [smallint] NULL ,

[ROLAPPartitions] [smallint] NULL ,

[SamplingRate] [int] NULL

) ON [PRIMARY]

GO

CREATE CLUSTERED INDEX [IX_StartTime] ON

[dbo].[QueryLog_history]([StartTime] DESC ) ON [PRIMARY]

GO

My QueryLog_history has a start time ordered descending, so "select * from dbo].[QueryLog_history]", and "select * from [dbo].[QueryLog_history] order by starttime DESC" will return result in the data being returned in the same order.

Information to QueryLog_history should append when a new record is added to QueryLog, i.e. query was executed. So, QueryLog_history is populating using a trigger. The text for this trigger is :

--Ramunas Balukonis 2004.04.15

create trigger dbo.trg_QueryLog_I_history

on dbo.QueryLog

for insert

as

insert into querylog_history

(MSOLAP_Database, MSOLAP_Cube, MSOLAP_User, Dataset, Slice, StartTime

, Duration, MOLAPPartitions, ROLAPPartitions, SamplingRate)

select MSOLAP_Database, MSOLAP_Cube, MSOLAP_User, Dataset, Slice, StartTime

, Duration, MOLAPPartitions, ROLAPPartitions, SamplingRate

from inserted

After creating this trigger, in querylog_history, I find all the queries that are sent to my olap server. My table, querylog_history, was created in April, and in July it has ~2 million records.

From this table querylog_history, I created new OLAP db “Admin” and the cube “OLAP queries”. The script for this cube is attached in file admin.vbs.txt. Just change server name at 43 line and run the vbs. This script will creates a new olap database "Admin", and then creates 2 cubes. This file was generated using MetaDataScripter from sql server resource kit.

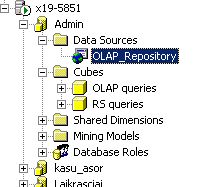

You will need to change the connection parameters in the data sources folder olap_repository connection. To do so, open analysis manager, find your new database named "Admin", expand database, expand folder "Data Sources", right click on "olap_repository" data source, and choose edit. in the "1. Select or enter a server name" box, write your sql server name, in the "3. Select the database on the server" select your olap repository database (See Figure 1).

Figure 1

In the olap database there exist 2 databases: olap queries (queries, sent to analysis services) and rs queries (queries sent to reporting services). The Fact table for “rs queries” is the view from your reporting services database, ExecutionLog table. You can create the view in the same olap repository database.

--Ramunas Balukonis 2004.04.15 create view dbo.querylog_rep as select username, timestart , (TimeDataRetrieval + TimeProcessing + TimeRendering ) / 1000 as duration , status, bytecount, [RowCount], parameters from reportserver.dbo.ExecutionLog

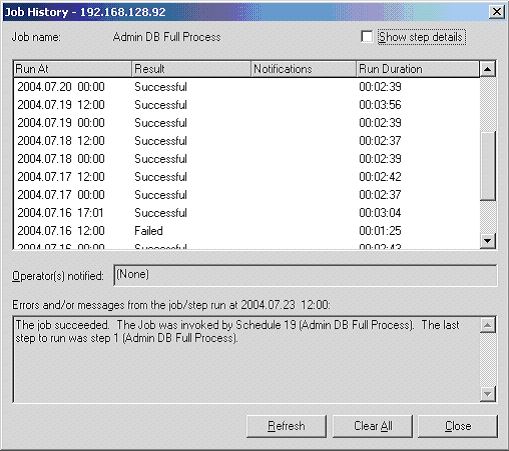

In my cube olap queries I have measures: “duration”, “number of queries” and “Time to execute query, avg” . Dimensions are: “users”, “cube” and “time” dimensions. Cube “rs queries” has similar dimensions and measures. You can create new calculated members like “number of queries / hour, avg” etc. I created my package to make a full process of admin database and scheduled to run them every day 2 times a day (See Figure 2).

Figure 2

Conclusions

After implementing the cube from querylog table, I can answer these questions:

1. How much queries are send per day/month/year.

2. Which users queries takes the most time to execute, which cube is the most popular in the database?

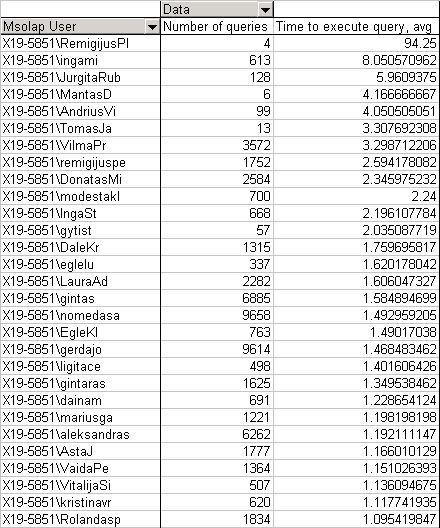

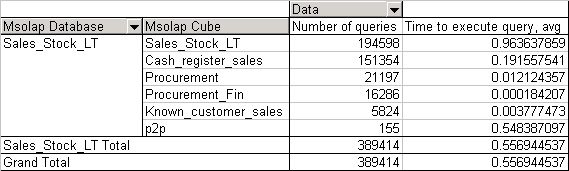

After that, you can create aggregations only for certain users or those queries that take more than 3 sec. See Figure 3 for long running queries or Figure 4 for the most popular.

Figure 3

Figure 4

3. How much time the queries take now after I designed my aggregations? Was the designing of the aggregations successful? What is the different of time spending answer a query before and after aggregations design.

So, after creating the cube "olap queries", I can more effectively support my olap databases: create aggregations, plan the process time, find the longest queries and analyze, why they take so much time?