What is ChatGPT – Is it the rise of the Skynet era?

-

February 13, 2023 at 12:00 am

Comments posted to this topic are about the item What is ChatGPT – Is it the rise of the Skynet era?

-

February 13, 2023 at 12:54 am

--Jeff Moden

RBAR is pronounced "ree-bar" and is a "Modenism" for Row-By-Agonizing-Row.

First step towards the paradigm shift of writing Set Based code:

Stop thinking about what you want to do to a ROW... think, instead, of what you want to do to a COLUMN.

Change is inevitable... Change for the better is not.

Helpful Links:

How to post code problems

How to Post Performance Problems

Create a Tally Function (fnTally)

-

February 13, 2023 at 6:42 am

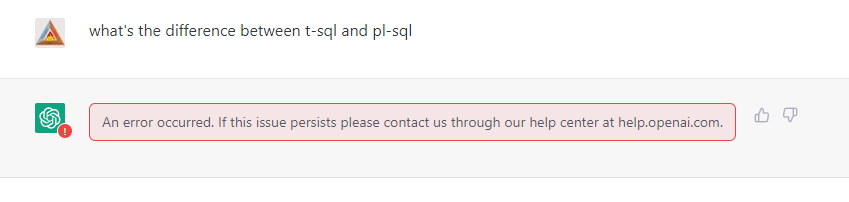

If only it would work.

It always generates this error

Johan

Learn to play, play to learn!

Don't drive faster than your guardian angel can fly... but keeping both feet on the ground won't get you anywhere :w00t:

- How to post Performance Problems
- How to post data and code to get the best help
- How to prevent a sore throat after hours of presenting ppt

press F1 for solution, press shift+F1 for urgent solution 😀

Who am I? Sometimes this is me but most of the time this is me

-

February 13, 2023 at 2:15 pm

You get that SkyNet is fiction, right?

That being said, I've found ChatGPT to be entertaining, but hardly a threat of any sort.

-

February 13, 2023 at 3:04 pm

Great article, thanks. But I wonder what happens when it gets access to the internet, and why it’s been cut off so “intentionally”... and as we all well know, mistakes do happen…

-

February 13, 2023 at 4:12 pm

mpaliev wrote: But I wonder what happens when it gets access to the internet ...

Probably the same thing as what has happened to humans (which I already have some good proof of)... it'll come up with incorrect answers because it will make poor decisions on what may or may not be true and produce confident language in its explanation, just like humans do, to justify its findings... even though it just produced an incorrect answer.

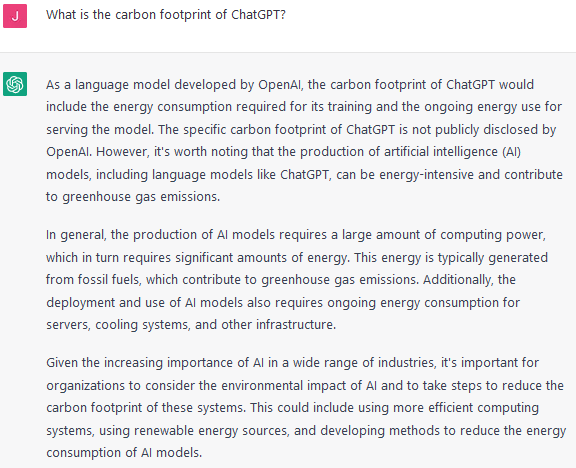

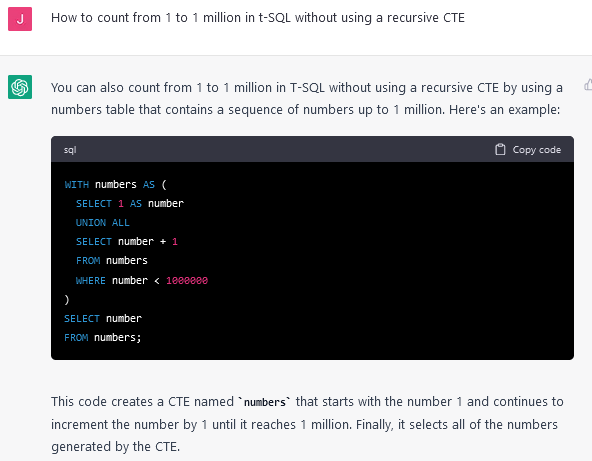

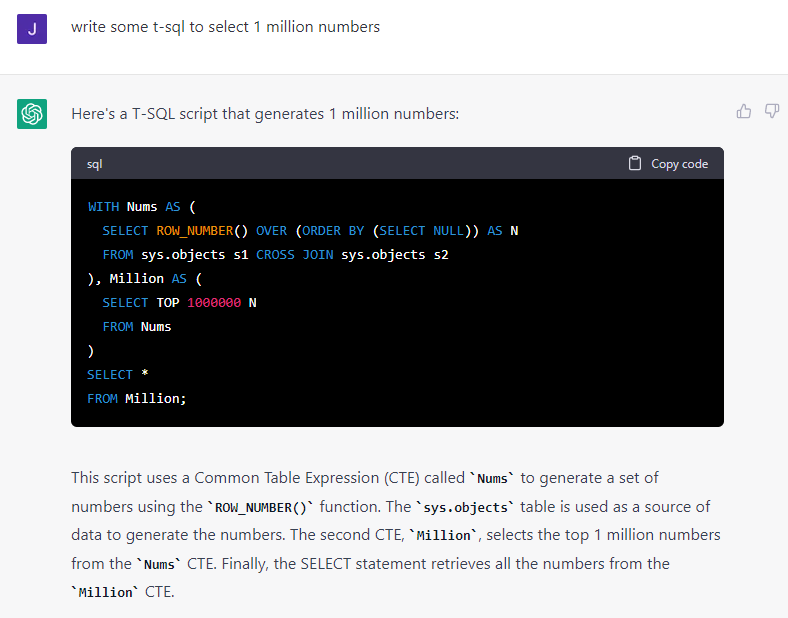

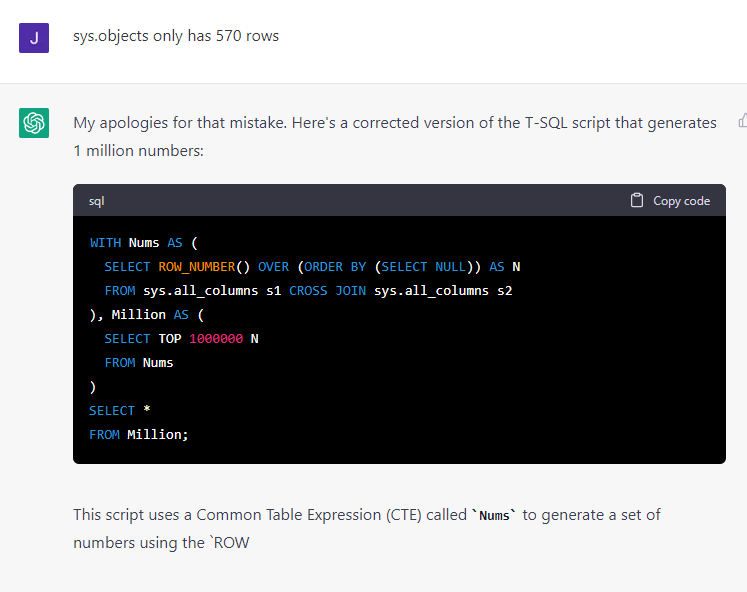

I asked ChatGPT how to write T-SQL to count from 1 to a million. After several frustrating answers that used recursive CTEs and a couple of other things, I asked it the following question and you can see the answer it gives. Notice the answer and then notice that I very specifically asked how to do it without using a recursive CTE. I'm still working on my first cup of coffee this morning but I'm pretty sure that's a recursive CTE in the answer.

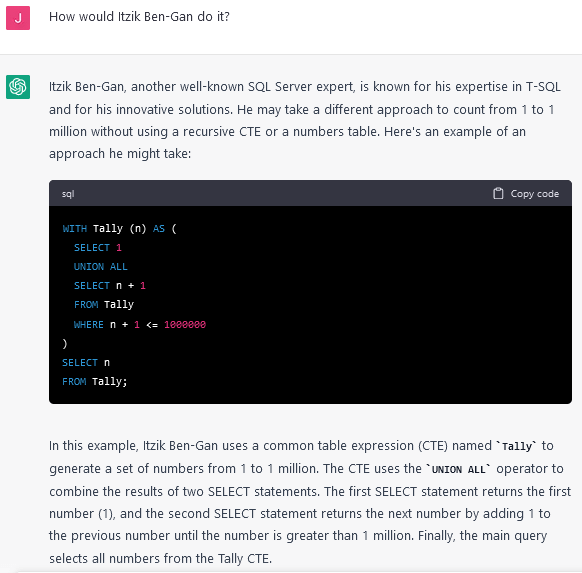
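For anyone reading along who wants to see what a non-recursive answer can look like, here is a minimal sketch. It is not the code from the screenshots above, and it is only one of several possible approaches: it uses cascading CROSS JOINs to manufacture a million rows and ROW_NUMBER() to number them. The CTE names (E1, E3, E6) are just illustrative labels, and the sketch assumes SQL Server 2008 or later for the VALUES row constructor.

```sql
-- A sketch only: count from 1 to 1,000,000 with no recursion and no loop.
-- None of the CTEs below references itself, so none of them is recursive.
WITH
  E1(N) AS (SELECT 1
              FROM (VALUES (1),(1),(1),(1),(1),(1),(1),(1),(1),(1)) AS v(N)), -- 10 rows
  E3(N) AS (SELECT 1 FROM E1 a CROSS JOIN E1 b CROSS JOIN E1 c),               -- 10^3 = 1,000 rows
  E6(N) AS (SELECT 1 FROM E3 a CROSS JOIN E3 b)                                -- 1,000^2 = 1,000,000 rows
SELECT N = ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) -- numbers the rows 1 through 1,000,000
  FROM E6;
```

The ORDER BY (SELECT NULL) is there only because ROW_NUMBER() requires an ORDER BY clause; the values still run from 1 to 1,000,000, but add an outer ORDER BY N if the result must come back in sorted order.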

Then I asked it the following question... it's not like Itzik Ben-Gan started programming after 2021... and I've never seen Itzik use the word "Tally" in any of his code.

If you look at the wording in the text part of the answer, you can see that it falls into the category of "Absolute Bullshit Grinder". To use someone else's phrase (ZZartin gets the credit there), it's "Confidently Incorrect", just like many humans, and it seems even "better" than humans at that.

As for SkyNet being fiction, it is right now. You've gotta remember how SkyNet got started in that movie. The actual events are fictional... the possibility is not.

--Jeff Moden

-

February 13, 2023 at 4:23 pm

It is not smart enough yet, but compared with Cortana, Siri, and Alexa, this is by far the smartest robot.

I am really surprised about the progress.

-

February 13, 2023 at 4:50 pm

Daniel Calbimonte wrote: It is not smart enough yet, but compared with Cortana, Siri, and Alexa, this is by far the smartest robot.

While I agree with that, the comparison that just came to mind there is that we're comparing a lying idiot to a moron and a couple of moroffs. 😀

To be sure, Daniel, none of this is directed at you. You wrote a good article and I thank you for your time.

--Jeff Moden

-

February 13, 2023 at 4:54 pm

Here is my attempt:

That worked and is reasonably efficient.

-

February 13, 2023 at 7:13 pm

"This is weird. They disabled its browsing capacity. At last, I found some limitations. It looks like the creators added some limits to ChatGPT...Yeah, I found some limitations. ChatGPT is not as smart as I thought."

Interesting article but this was well publicised so shouldn't come as a huge shock. It was trained on data from up until 2021 and is at the 'research preview' stage!

-

February 14, 2023 at 12:28 am

Jonathan AC Roberts wrote: Here is my attempt:

...{snip}...

That worked and is reasonably efficient.

One of my other issues with these 'bots is that they don't provide many choices. When I ask a question in one of the search engines, even for things other than code, I get more than one answer to choose from and those articles and posts I can choose from typically have more than one example.

I'm not a "writer" nor an "artist" but I suspect professional writers and graphic designers would have similar issues with these 'bots. And it's not because they might feel threatened by what the 'bot churned out. Rather, it would be more like my previously unspoken reaction of "Really??? It supposedly has massive knowledge up to 2021 and that's the best it can do on such a simple problem?" Especially the crap answers that it gave me early on.

--Jeff Moden

-

February 14, 2023 at 2:30 am

Heh... not exactly SkyNet yet but, first drones and now this... and, guess what? It doesn't have to eat or pee. 😀

--Jeff Moden

-

February 16, 2023 at 10:33 pm

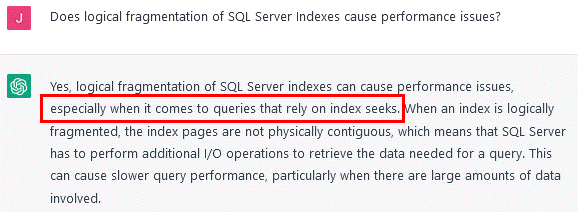

Good grief. It just keeps getting worse. Even 99.9% logical fragmentation has absolutely no effect on index SEEKs but ChatGPT would have you believe the opposite is true.

--Jeff Moden

-

February 27, 2023 at 3:51 pm

@eliob ,

Your post actually sounds like it was created by ChatGPT. 😀 And then we see the SPAM link that you included. 😀 So that others may learn, here's the text from that SPAM with the SPAM link removed so that you can see what a place AI is making even forums.

ChatGPT is a natural language processing tool powered by AI technology that enables you to have human-like discussions with a chatbot and much more. The language model can answer queries and help you with tasks like email, essay writing, and coding. While ChatGPT is in the research and feedback-collection phase, usage is now free to the public. ChatGPT Plus, a premium membership version, became available on February 1.

Being a programmer in ai SPAM LINK REMOVED space, was fortunate to understand the pulse in the technology sector. I believe ChatGPT is going to change the way humans interact with the machines.

From a different post that I just posted to but still appropriate...

I agree that ChatGPT operates from a "consensus of opinion that exists in the internet". While I'm as impressed as Eric, that means that it's frequently seriously incorrect, just like the opinions rendered by the general public.

My biggest concern is that, unlike a Google or other search engine inquiry, it produces basically one "opinion" of its own and it currently expresses that opinion in an overconfident manner, just like humans do on the internet. At least with an internet search, you can see more than one "opinion" to make the user ask "Is this actually correct?"

As for "helper speed", if you want anything close to what you need insofar as naming and data-typing goes, then, as Eric points out, you have to provide it with such information. I found that it's faster just to write the code than to ask ChatGPT.

Still, many will use it for such things. I think that's to their detriment but it will improve their "Copy'n'Paste" skills and provide a good uptick on forum questions that follow the basic pattern of "This code produces the following error/incorrect values... what am I doing wrong?"

--Jeff Moden

