By Steve Bolton

…………Whenever we assign set membership grades to records on a continuous scale, we open up a whole new world of possibilities for measuring uncertainty and modeling different types of imprecision. Two articles ago in this series of amateur self-tutorials, we saw how a whole taxonomy of fuzzy set types can be derived by applying different combinations of membership grades; these fuzzy set types allow us to model imprecise natural language terms that are more difficult to handle with traditional “crisp” sets. In the last installment, we saw how crisp sets have only one type of complement, but how the concept of graded membership naturally leads to a whole smorgasbord of fuzzy complements. These can be quite useful in developing measures of imprecision that are tailor-made for specific real-world problems. Binary relations between fuzzy sets can likewise be put to good use, especially in the development of other measures that are useful in uncertainty management programs, but reside at a higher level of complexity than complements since multiple sets are involved.

…………All of the set relations I’ll be introducing in the next three articles, like fuzzy unions and fuzzy joins, are defined on some subset of the crisp Cartesian product of the two sets. I’ve seen several discussions in the literature of how to model fuzzy relations as cylindrical projections of the Cartesian product in multidimensional space, which has uses in maximizing nonspecificity[1] – a metric of uncertainty I’ll discuss later in this series – but for now I’ll keep the discussion as down-to-earth as I can. Fuzzy set relations are essentially equivalent to defining a fuzzy membership function on the set that results from an operation, which leads to myriad ways of assigning grades to the relation.[2] This in turn results in a dizzying array of choices that are not available with crisp relations, which empowers us to create new measures, at the risk of getting lost in the complexity of all the available choices. I can hardly pretend to be an authority on which choices are the best match for particular use cases, but I can at least introduce the topic in the hopes that I can learn as I go and help others to at least avoid my inevitable mistakes.

Four Types of Fuzzy Intersections

In the case of intersections, we’re basically asking SQL Server, “Give me all of the records that are members of both sets.” Partial membership introduces the need for some kind of math operation to assign a grade to the memberships of the resulting set, which essentially boils down to taking the minimum values of the membership grades of the two constituent sets. This is one of the unifying principles of fuzzy intersections, which differ only in the manner in which they select those minimums. The simplest ones are the Standard Intersection, which is merely the lower of the two membership grades in the first and second sets; the Algebraic Product, in which the membership grades of the corresponding rows are merely multiplied together; the Bounded Difference, which is either 0 or the two set grades added together minus one, whichever is higher; and the Drastic Intersection, which is the membership value of the first set when the second equals one or vice-versa, but 0 otherwise.[3]
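To make the four definitions concrete before bringing in real data, here is a minimal sketch that applies them to a handful of hand-picked grade pairs. The grades and the column names a and b are made up purely for illustration and do not come from the practice dataset.

```sql
-- Hypothetical membership grades for four records that belong to two fuzzy sets
SELECT a AS MembershipScoreForSet1, b AS MembershipScoreForSet2,
       CASE WHEN a <= b THEN a ELSE b END AS StandardIntersection,
       a * b AS AlgebraicProduct,
       CASE WHEN a + b - 1 > 0 THEN a + b - 1 ELSE 0 END AS BoundedDifference,
       CASE WHEN a = 1 THEN b
            WHEN b = 1 THEN a
            ELSE 0 END AS DrasticIntersection
FROM (VALUES (0.9, 0.6),
             (0.5, 0.5),
             (1.0, 0.3),
             (0.2, 0.4)) AS T1(a, b);
```

For the first pair, the four functions work out to 0.6, 0.54, 0.5 and 0 respectively, while the third pair returns 0.3 across the board because one of its grades equals 1. On every row the results should fall in the same order, with the Drastic Intersection lowest, followed by the Bounded Difference and the Algebraic Product, and the Standard Intersection highest.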

…………The code in Figure 1 implements all four of these, in a manner similar to the way I illustrated the use of membership functions and fuzzy complements in the last two articles. The stored procedure I wrote for Outlier Detection with SQL Server, part 2.1: Z-Scores is pulled in here for double-duty as the membership function of my first set, while the alternate version I used in Outlier Detection with SQL Server, part 2.2: Modified Z-Scores is used for the second. The GroupRank and OutlierCandidate columns were members of the original procedures and could not be left out of the INSERT EXEC statements, but can be safely ignored. The @RescalingMax, @RescalingMin and @RescalingRange values are used in conjunction with the ReversedZScore of the two table variables to normalize the stored procedure results within the industry-standard 0 to 1 range for fuzzy sets. We could of course define much simpler membership functions and do without the stored procedures, but I wanted to demonstrate the usefulness of fuzzy intersections in a more meaningful way. We could also do without these two table variables and the third which holds the intersection values, but it is more practical in this situation to calculate all of this only once, since we have to demonstrate many different types of intersections afterwards. Since the membership values of both sets often differ, using an INTERSECT operator might backfire by discarding those that don’t match. Hence, I’ve found it easier in cases like this to use an INNER JOIN. The WHERE clause that follows merely illustrates how we can create an intersection on sets that have different crisp members, regardless of their membership grades. The code is lengthy because of the need for the three table variables in this particular instance, but is not difficult at all, once you strip away all of this preparatory code and see that the meat and potatoes can be found in the @IntersectionTable’s four computed columns.
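The point about INTERSECT is easier to see on a toy example. The following is only a sketch, using two hypothetical table variables (@Set1 and @Set2) with made-up keys and grades rather than the Z-Score procedures.

```sql
-- Two hypothetical fuzzy sets that share some keys but not always the same grades
DECLARE @Set1 table (PrimaryKey int, MembershipScore decimal(9,4));
DECLARE @Set2 table (PrimaryKey int, MembershipScore decimal(9,4));

INSERT INTO @Set1 (PrimaryKey, MembershipScore) VALUES (1, 0.9), (2, 0.5), (3, 0.2);
INSERT INTO @Set2 (PrimaryKey, MembershipScore) VALUES (1, 0.6), (2, 0.5), (4, 0.7);

-- INTERSECT compares entire rows, so only key 2 survives,
-- because it is the only key with an identical grade in both sets
SELECT PrimaryKey, MembershipScore FROM @Set1
INTERSECT
SELECT PrimaryKey, MembershipScore FROM @Set2;

-- INNER JOIN matches on the key alone, so keys 1 and 2 both survive
-- and both grades remain available for the intersection functions
SELECT T1.PrimaryKey, T1.MembershipScore AS MembershipScoreForSet1,
       T2.MembershipScore AS MembershipScoreForSet2
FROM @Set1 AS T1
     INNER JOIN @Set2 AS T2
     ON T1.PrimaryKey = T2.PrimaryKey;
```

Key 1 is a member of both sets to some degree, yet INTERSECT drops it because 0.9 and 0.6 are different values. The join keeps it, which is the behavior we need before any of the intersection grades can be computed.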

Figure 1: Sample Code for a Fuzzy Intersection on Two Different Types of Z-Scores

-- CREATING THE FUZZY SET TEST DATA
DECLARE @RescalingMax decimal(38,6), @RescalingMin decimal(38,6), @RescalingRange decimal(38,6)

DECLARE @ZScoreTable table
(PrimaryKey sql_variant,
Value decimal(38,6),
ZScore decimal(38,6),
ReversedZScore as CAST(1 as decimal(38,6)) - ABS(ZScore),
MembershipScore decimal(38,6),
GroupRank bigint
)

DECLARE @ModifiedZScoreTable table
(PrimaryKey sql_variant,
Value decimal(38,6),
ZScore decimal(38,6),
ReversedZScore as CAST(1 as decimal(38,6)) - ABS(ZScore),
MembershipScore decimal(38,6),
GroupRank bigint,
OutlierCandidate bit
)

INSERT INTO @ZScoreTable
(PrimaryKey, Value, ZScore, GroupRank)
EXEC Calculations.ZScoreSP
@DatabaseName = N'DataMiningProjects',
@SchemaName = N'Health',
@TableName = N'DuchennesTable',
@ColumnName = N'LactateDehydrogenase',
@PrimaryKeyName = N'ID',
@DecimalPrecision = '38,32',
@OrderByCode = 8

-- RESCALING
SELECT @RescalingMax = Max(ReversedZScore), @RescalingMin = Min(ReversedZScore) FROM @ZScoreTable
SELECT @RescalingRange = @RescalingMax - @RescalingMin

UPDATE @ZScoreTable
SET MembershipScore = (ReversedZScore - @RescalingMin) / @RescalingRange

INSERT INTO @ModifiedZScoreTable
(PrimaryKey, Value, ZScore, GroupRank, OutlierCandidate)
EXEC Calculations.ModifiedZScoreSP
@DatabaseName = N'DataMiningProjects',
@SchemaName = N'Health',
@TableName = N'DuchennesTable',
@ColumnName = N'LactateDehydrogenase',
@PrimaryKeyName = N'ID',
@OrderByCode = 8,
@DecimalPrecision = '38,32'

-- RESCALING
SELECT @RescalingMax = Max(ReversedZScore), @RescalingMin = Min(ReversedZScore) FROM @ModifiedZScoreTable
SELECT @RescalingRange = @RescalingMax - @RescalingMin

UPDATE @ModifiedZScoreTable
SET MembershipScore = (ReversedZScore - @RescalingMin) / @RescalingRange

-- DERIVING THE INTERSECTION
DECLARE @IntersectionTable table
(PrimaryKey sql_variant,
Value decimal(38,6),
MembershipScoreForSet1 decimal(38,6),
MembershipScoreForSet2 decimal(38,6),
StandardIntersection AS (CASE WHEN MembershipScoreForSet1 <= MembershipScoreForSet2 THEN MembershipScoreForSet1
       ELSE MembershipScoreForSet2 END),
DrasticIntersection AS (CASE WHEN MembershipScoreForSet1 = 1 THEN MembershipScoreForSet2
       WHEN MembershipScoreForSet2 = 1 THEN MembershipScoreForSet1
       ELSE 0 END),
AlgebraicProduct AS MembershipScoreForSet1 * MembershipScoreForSet2,
BoundedDifference AS (CASE WHEN MembershipScoreForSet1 + MembershipScoreForSet2 - 1 > 0 THEN MembershipScoreForSet1 + MembershipScoreForSet2 - 1
       ELSE 0 END)
)

INSERT INTO @IntersectionTable
(PrimaryKey, Value, MembershipScoreForSet1, MembershipScoreForSet2)
SELECT T1.PrimaryKey, T1.Value, T1.MembershipScore, T2.MembershipScore
FROM @ZScoreTable AS T1
       INNER JOIN @ModifiedZScoreTable AS T2
       ON T1.PrimaryKey = T2.PrimaryKey
WHERE T1.Value != 142 AND T2.Value != 147

SELECT *
FROM @IntersectionTable
ORDER BY DrasticIntersection DESC

Figure 2: Sample Results from the Duchennes Table

…………Note how quickly the Drastic Intersection drops off to 0, when we order by that particular column. The other three undulate in comparison, but do so at varying rates I don’t have screen space to fully illustrate here. That is because there are many duplicate values for the Lactate Dehydrogenase column of the practice dataset I used for Figure 2, which is full of Duchennes muscular dystrophy data I downloaded from Vanderbilt University’s Department of Biostatistics a few tutorial series ago.

…………The different shapes of the function results can be leveraged to meet various use cases; for example, if the problem at hand calls for a rapid drop-off in intersection membership values, the Drastic Intersection might be ideal. As I’ve pointed out a few times in this series, fuzzy sets are much easier to understand once you recognize that they’re ideal for modeling imprecise natural language terms, including many found in everyday speech like “about” or “near,” not just broad high-tech concepts like “I/O bottleneck.” Membership grades should not be interpreted as probabilities unless they’re explicitly designed to measure uncertainty about stochastic measures. In this case, we’re deliberately assigning meanings to the two membership sets that are comparable as outlier detection methods, even though they measure aberrance in different ways; the purpose here is not to teach anything about Z-Scores, but to use a familiar concept to illustrate an unfamiliar one. The results here might be expressed in natural language as, “when both methods of Z-scores are taken into account, Lactate Dehydrogenase values of 198 are very close to the center point of the dataset. They have high membership value in the set of records that are NOT outliers.” As the values of each type of intersection move in the other direction, that becomes less true.

Upgrading to T-Norms

These four intersection types are simple instances of a broader class of functions known as triangular norms (T-Norms) that have been shown to be equivalent to fuzzy intersections, according to various mathematical theorems. The Standard Intersection is basically identical to Gödel’s T-norm, while the algebraic product is equivalent to the Product T-norm and the Bounded Difference to the Lukasiewicz T-norm.[4] The functions in this family satisfy certain obligatory mathematical properties, like commutativity, associativity, monotonicity and sometimes also continuity (i.e. a continuous function).[5] An Archimedean T-norm has the additional property of subidempotency, while a strict Archimedean T-norm also has strict monotonicity.[6] I won’t bore you with the formal definitions of these things, let alone the mathematical proofs and equations, since it is essentially my function to explain this to readers who don’t have a mathematical background; there is a crying need in the data mining field for bridges between the underlying theories and the end users, in the same way that mechanics fill a gap between drivers and automotive engineers. I suggest that anyone who needs this extra detail consult my favorite reference, George J. Klir and Bo Yuan’s Fuzzy Sets and Fuzzy Logic: Theory and Applications, which I will be leaning on through much of this series. They also get into a discussion of concepts like decreasing and increasing generators and pseudo-inverses and how to use them in the construction of T-norms, but that is also beyond the bounds of this introduction.[7]
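For readers who would rather see these properties than read their definitions, the following sketch brute-forces three of them for the Algebraic Product over a small grid of grades. The grid spacing and the names Grades, Pairs and TNormValue are my own choices for illustration; passing a spot check on a coarse grid is of course not a proof, merely a sanity test that can be repeated for any other candidate function by swapping out the multiplication.

```sql
-- Hypothetical grid of membership grades between 0 and 1
WITH Grades(g) AS
(SELECT g FROM (VALUES (0.00), (0.25), (0.50), (0.75), (1.00)) AS T1(g)),
Pairs AS
(SELECT A.g AS a, B.g AS b, A.g * B.g AS TNormValue   -- the candidate T-norm
 FROM Grades AS A
      CROSS JOIN Grades AS B)
SELECT
    -- boundary condition: intersecting with a grade of 1 should return the other grade
    (SELECT COUNT(*) FROM Pairs WHERE b = 1 AND TNormValue <> a) AS BoundaryViolations,
    -- commutativity: swapping the arguments should not change the result
    (SELECT COUNT(*) FROM Pairs AS P1
            INNER JOIN Pairs AS P2 ON P1.a = P2.b AND P1.b = P2.a
     WHERE P1.TNormValue <> P2.TNormValue) AS CommutativityViolations,
    -- monotonicity: raising the second grade should never lower the result
    (SELECT COUNT(*) FROM Pairs AS P1
            INNER JOIN Pairs AS P2 ON P1.a = P2.a AND P1.b <= P2.b
     WHERE P1.TNormValue > P2.TNormValue) AS MonotonicityViolations;
```

All three counts should come back as 0 for the Algebraic Product. A function that returned a nonzero count in any column would not qualify as a T-norm, at least on that grid.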

…………Just as we were able to pile different types of fuzziness on top of each other a few articles ago to create a whole fuzzy set taxonomy, so too can we combine T-norms to form an endless array of more complex T-norms.[8] I’ll leave that to the imagination and needs of the reader and just give examples of how to code 11 of the more common T-norms. Figure 3 contains additional code that can be tacked on to Figure 1 to calculate four types of T-norms developed by Berthold Schweizer and Abe Sklar, along with others developed in the ‘70s and ‘80s by József Dombi, M.J. Frank, Horst Hamacher, Yandong Yu, Didier Dubois and Henry Prade.[9] Ronald R. Yager, the author of the Yager Complement mentioned in the last article, also developed a corresponding fuzzy intersection, which takes the @OmegaParameter variable as an input in Figure 3, while Michio Sugeno, the creator of the Sugeno Complement, devised a T-norm that was independently rediscovered by Siegfried Weber. The declaration section at the top also includes the parameters required by some of the other authors. These parameters allow us to vary the shape of each T-norm to suit particular use cases, just as we did with the parameters of the Sugeno and Yager Complements in the last article.

Figure 3: Code for Several Popular Types of T-Norm Fuzzy Intersections

DECLARE @AlphaParameter float = 1, -- 0 to 1
@PParameter float = 1, -- <> 0 for SchweizerandSklar 1, > 0 otherwise
@RParameter float = 1,
@SParameter float = 0.7, -- must be > 0 but not =1 for Frank1979
@LambdaParameter float = 1, -- > -1 for Weber and Yu, but must be > 0 for Dombi
@OmegaParameter float = 1, -- ? must be > 0
@Pi decimal(38,37) = 3.1415926535897932384626433832795028841 -- from http://www.eveandersson.com/pi/digits/100

SELECT PrimaryKey, Value, MembershipScoreForSet1, MembershipScoreForSet2,
(MembershipScoreForSet1 * MembershipScoreForSet2) / (SELECT MAX(Value) FROM (VALUES (MembershipScoreForSet1),(MembershipScoreForSet2),(@AlphaParameter)) AS T1(Value)) AS DuboisPradeTNorm,
Power(1 + Power(Power(CAST(1 / MembershipScoreForSet1 AS float) - 1, @LambdaParameter) + Power(CAST(1 / MembershipScoreForSet2 AS float) - 1, @LambdaParameter), CAST(1 / @LambdaParameter AS float)), -1) AS DombiTNorm,
Log(1 + (((CAST(Power(@SParameter, MembershipScoreForSet1) AS float) - 1) * (Cast(Power(@SParameter, MembershipScoreForSet2) AS float) - 1)) / (@SParameter - 1)), @SParameter) AS Frank1979TNorm,
(MembershipScoreForSet1 * MembershipScoreForSet2) / (@RParameter + (1 - @RParameter) * (MembershipScoreForSet1 + MembershipScoreForSet2 - (MembershipScoreForSet1 * MembershipScoreForSet2))) AS HamacherTNorm,
(SELECT MAX(Value) FROM (VALUES (0), ((MembershipScoreForSet1 + MembershipScoreForSet2 + (@LambdaParameter * MembershipScoreForSet1 * MembershipScoreForSet2) - 1) / CAST(1 + @LambdaParameter AS float))) AS T1(Value)) AS WeberTNorm,
(SELECT MAX(Value) FROM (VALUES (0), ((1 + @LambdaParameter) * (MembershipScoreForSet1 + MembershipScoreForSet2 - 1) - (@LambdaParameter * MembershipScoreForSet1 * MembershipScoreForSet2))) AS T1(Value)) AS YuNorm,
1 - (SELECT MIN(Value) FROM (VALUES (1), (Power(Power(1 - MembershipScoreForSet1, @OmegaParameter) + Power(1 - MembershipScoreForSet2, @OmegaParameter), 1 / CAST(@OmegaParameter AS float)))) AS T1(Value)) AS YagerTNorm
FROM @IntersectionTable
WHERE MembershipScoreForSet1 != 0 AND MembershipScoreForSet2 != 0

SELECT PrimaryKey, Value, MembershipScoreForSet1, MembershipScoreForSet2,
Power((SELECT MAX(Value) FROM (VALUES (0), (Power(MembershipScoreForSet1, @PParameter) + Power(MembershipScoreForSet2, @PParameter) - 1)) AS T1(Value)), 1 / CAST(@PParameter AS float)) AS SchweizerSklarTNorm1,
1 - Power(Power(1 - MembershipScoreForSet1, @PParameter) + Power(1 - MembershipScoreForSet2, @PParameter) - (Power(1 - MembershipScoreForSet1, @PParameter) * Power(1 - MembershipScoreForSet2, @PParameter)), 1 / CAST(@PParameter AS float)) AS SchwiezerAndSklar2,
EXP(-1 * Power(Power(Abs(Log(MembershipScoreForSet1)), @PParameter) + Power(Abs(Log(MembershipScoreForSet2)), @PParameter), 1 / CAST(@PParameter AS float))) AS SchwiezerAndSklar3,
(MembershipScoreForSet1 * MembershipScoreForSet2) / Power(Power(MembershipScoreForSet1, @PParameter) + Power(MembershipScoreForSet2, @PParameter) - (Power(MembershipScoreForSet1, @PParameter) * Power(MembershipScoreForSet2, @PParameter)), 1 / CAST(@PParameter AS float)) AS SchwiezerAndSklar4
FROM @IntersectionTable
WHERE MembershipScoreForSet1 != 0 AND MembershipScoreForSet2 != 0

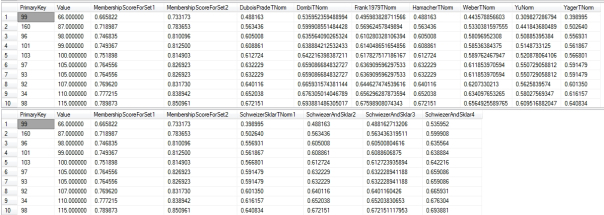

Figure 4: T-Norm Results

…………There’s an awful lot of math going on here but very few examples in the literature with sample data to verify the results on, so be on the lookout for undiscovered errors in this inexpert attempt to code these more advanced T-norms. To simplify things, I decided to add the brute force WHERE clause in order to prevent divide-by-zero errors, rather than including validation logic in each separate calculation. To make the code a little easier on the eyes, I split the Schweizer and Sklar T-norms into a separate query and put each on a line of its own, which probably won’t render correctly in WordPress. In most of them, I adopted a T-SQL trick posted by Jamie Thomson at SQLBlog.com which allows you to select the MAX or MIN for a set of values at each row, without having to do an aggregate operation on the whole table.[10] The Yager T-Norm uses a MIN operation, but subtracting the result from 1 takes its complement rather than its reciprocal, so it’s effectively a MAX in the end: 1 - MIN(1, x) always equals MAX(0, 1 - x).
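Stripped of the surrounding formulas, the row-wise MAX and MIN trick and the Yager rearrangement look like the sketch below. The grade pairs, the Pairs and D aliases and the YagerDistance column are hypothetical names introduced only for this illustration, and the CROSS APPLY is just a convenience to avoid typing the inner Yager expression twice.

```sql
DECLARE @OmegaParameter float = 2;  -- arbitrary value chosen for illustration

SELECT a, b,
       -- aggregate over a two-row derived table built from the current row's columns
       (SELECT MAX(Value) FROM (VALUES (a), (b)) AS T1(Value)) AS RowWiseMax,
       (SELECT MIN(Value) FROM (VALUES (a), (b)) AS T1(Value)) AS RowWiseMin,
       -- the form used in Figure 3: 1 minus a row-wise MIN
       1 - (SELECT MIN(Value) FROM (VALUES (1), (YagerDistance)) AS T1(Value)) AS OneMinusMin,
       -- the equivalent form: a row-wise MAX of 0 and the complement
       (SELECT MAX(Value) FROM (VALUES (0), (1 - YagerDistance)) AS T1(Value)) AS MaxOfZeroAndComplement
FROM (VALUES (CAST(0.9 AS float), CAST(0.6 AS float)),
             (0.3, 0.2),
             (1.0, 0.4)) AS Pairs(a, b)
     CROSS APPLY (SELECT Power(Power(1 - a, @OmegaParameter) + Power(1 - b, @OmegaParameter),
                               1 / @OmegaParameter) AS YagerDistance) AS D;
```

The last two columns should match on every row. In the second pair the inner expression exceeds 1, so both forms clamp the result to 0, which is exactly the job the MIN performs in the Yager formula.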

…………Note that once again, each function follows a different pattern. Some of them overlap at times, like the Yager T-norm, which equals the Bounded Difference when the @OmegaParameter is set to 1; as it approaches infinity (or the best we can approximate at the limits of SQL Server’s float, numeric and decimal data types) it joins up with the Standard Intersection.[11] Our goal is to find a T-norm that produces a pattern that closely matches the imprecision we’re trying to model. It’s a mouthful when put that way; suffice it to say that once again, it is easier to grasp all of this if we get away from computer code, heavy math and big words and look at fuzzy sets in terms of natural language. As Klir and Yuan put it, our selection of the right fuzzy set operation is dependent on the meaning of particular linguistic terms:

“We have to determine, in each particular application, which of the available operations on fuzzy sets best represent the intended operations on the corresponding linguistic terms. It is now experimentally well established that the various connectives of linguistic terms, such as and, or, not, and if-then, have different meanings in different contexts. We have to determine which of the T-norms, T-conorms, complements or other operations on fuzzy sets best approximate the intended meanings of the connectives. The various linguistic hedges are context dependent as well.”[12]

…………In Behavior-Driven Development (BDD) and user stories, it might be natural to flag “and” statements as candidates for fuzzy intersections. Once we’ve identified a suitable T-norm, parameter estimation techniques can be used to take educated guesses on where to set the corresponding input variables (I suppose this would include maximum likelihood estimation, which I am currently stuck on).[13] I’ll elaborate on this selection process further in the next article, which is likely to be a good deal shorter because fuzzy unions are implemented in almost the same way.
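Short of formal parameter estimation, one low-tech way to get a feel for a parameter is simply to sweep it across a few values and watch the output move. The sketch below does this for the Yager T-norm on a single made-up pair of grades; the @a and @b variables and the list of omega values are assumptions chosen for illustration, not recommendations.

```sql
DECLARE @a float = 0.6, @b float = 0.8;  -- hypothetical membership grades

SELECT OmegaParameter,
       1 - (SELECT MIN(Value)
            FROM (VALUES (CAST(1 AS float)),
                         (Power(Power(1 - @a, OmegaParameter) + Power(1 - @b, OmegaParameter),
                                1 / OmegaParameter))) AS T1(Value)) AS YagerTNorm,
       -- the two reference points that the Yager T-norm moves between
       (SELECT MAX(Value) FROM (VALUES (CAST(0 AS float)), (@a + @b - 1)) AS T1(Value)) AS BoundedDifference,
       (SELECT MIN(Value) FROM (VALUES (@a), (@b)) AS T1(Value)) AS StandardIntersection
FROM (VALUES (CAST(1 AS float)), (2), (5), (20), (100)) AS T2(OmegaParameter)
ORDER BY OmegaParameter;
```

With an omega of 1, the YagerTNorm column should agree with the Bounded Difference of roughly 0.4, give or take floating point noise. As omega climbs it should creep up toward the Standard Intersection of 0.6, which is the overlap between these functions that Klir and Yuan describe.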

…………The same data modeling principles apply to both fuzzy unions and fuzzy intersections, so I’ll deal with the topic here. If we go to all the trouble of calculating these fuzzy intersection or union values, we might want to persist them instead of incurring their performance costs repeatedly by doing them on the fly. In the last article, I introduced the issue of storing the parameters for Sugeno and Yager Complements in such instances, which leads to some intriguing data modeling issues not often seen in ordinary crisp sets, where there are no choices of functions to apply to set relations and therefore no parameters to store. I listed a couple of possible solutions, like defining indexed views with the parameter values baked into them or creating a ComplementTable to store the parameter values alongside their function type, which might span schemas or whole databases and include text keys linking them to the table and views the complements are applied to. We could go one step further and also store the complement values in one or more dependent tables linked to the ComplementTable by a foreign key, but if we want to enforce a FOREIGN KEY REFERENCES statement on the second key we’d need one such table for each fuzzy object we’re getting records from. The issue becomes more complicated with binary relations, since we’d need another text column to identify the second object in the IntersectionTable and a third foreign key if we’re also storing the row-by-row values returned for each parameter setting. The latter would amount to a many-to-many join across two fuzzy sets that are also joined to the IntersectionTable holding the parameters and T-norm type. Thankfully, the modeling options don’t get any more complex than this with other binary relations, such as fuzzy unions, the topic of next week’s article. If you can wade through this tutorial, the next should be a breeze, considering that the counterparts of T-norms were developed by the same theorists and are implemented with similar formulas. The logic behind them isn’t much different either, except that we’re taking maximums rather than minimums at each row. This means we can reuse much of the sample code I’ve posted here and just swap out a few math operations, which is a piece of cake.
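One possible shape for the persisted version of this model is sketched below. Apart from IntersectionTable, every table and column name here is hypothetical, as are the data types; the two FuzzySet tables merely stand in for whatever objects the membership grades are actually drawn from.

```sql
-- Hypothetical parent table: one row per T-norm type and parameter setting
CREATE TABLE dbo.IntersectionTable
(IntersectionID int IDENTITY(1,1) NOT NULL PRIMARY KEY,
 TNormType nvarchar(50) NOT NULL,          -- e.g. N'Yager' or N'Hamacher'
 ParameterValue float NULL,                -- NULL for parameterless T-norms
 FirstObjectName nvarchar(400) NOT NULL,   -- text key naming the first fuzzy set's table or view
 SecondObjectName nvarchar(400) NOT NULL   -- text key naming the second fuzzy set's table or view
);

-- Hypothetical stand-ins for the two fuzzy sets being intersected
CREATE TABLE dbo.FuzzySet1Table
(ID bigint NOT NULL PRIMARY KEY,
 MembershipScore decimal(38,6) NOT NULL);

CREATE TABLE dbo.FuzzySet2Table
(ID bigint NOT NULL PRIMARY KEY,
 MembershipScore decimal(38,6) NOT NULL);

-- Hypothetical dependent table with three foreign keys: one to the parameter row
-- and one to each fuzzy set, holding the row-by-row intersection values
CREATE TABLE dbo.IntersectionValueTable
(IntersectionID int NOT NULL FOREIGN KEY REFERENCES dbo.IntersectionTable (IntersectionID),
 Set1ID bigint NOT NULL FOREIGN KEY REFERENCES dbo.FuzzySet1Table (ID),
 Set2ID bigint NOT NULL FOREIGN KEY REFERENCES dbo.FuzzySet2Table (ID),
 IntersectionValue decimal(38,6) NOT NULL,
 PRIMARY KEY (IntersectionID, Set1ID, Set2ID)
);
```

Because the foreign keys in the dependent table point at specific objects, this layout would need one such table for each pair of fuzzy sets, which is the tradeoff mentioned above. The text keys in the parent table are not enforced by the engine at all, so any validation of them would have to live in application code or triggers.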

[1] p. 122, Klir, George J. and Yuan, Bo, 1995, Fuzzy Sets and Fuzzy Logic: Theory and Applications. Prentice Hall: Upper Saddle River, N.J.

[2] IBID., p. 120.

[3] IBID., p. 63.

[4] See the Scholarpedia.com webpage “Triangular Norms and Conorms” at http://www.scholarpedia.org/article/Triangular_norms_and_conorms#Fuzzy_intersections_and_unions

[5] pp. 62-63, Klir and Yuan.

[6] IBID., p. 63.

[7] IBID., p. 68.

[8] IBID., p. 70.

[9] I implemented the formulas I found in a table at p. 76, Klir and Yuan, which is widely available elsewhere. I had to look up some of the first names at the Wikipedia webpage “Construction of T-Norms” at http://en.wikipedia.org/wiki/Construction_of_t-norms

[10] Thomson, Jamie, 2012, “Use VALUES Clause to Get the Maximum Value from Some Columns,” published Jan. 20, 2012 at the SQLBlog web address http://sqlblog.com/blogs/jamie_thomson/archive/2012/01/20/use-values-clause-to-get-the-maximum-value-from-some-columns-sql-server-t-sql.aspx

[11] pp. 70-71, Klir and Yuan.

[12] IBID., pp. 280-281.

[13] IBID., p. 94.