Intro

Intro

I have recently faced the issue of handling big numbers in SSAS Tabular. Our SSAS model processes accounting data for an international company and some amounts in the original currency are huge for countries with a weak currency. So, we’ve been facing an issue of overflow Currency datatype in SSAS.

Issue explanation

For implementing amounts in SSAS Tabular you have only two options: Decimal (Double) or Currency. Limits of those datatypes are here.

In short:

- Currency – 15 digits + 4 decimals

- Decimal (double) – 15 digits + exponent

Decimal is therefore less precise for sure as the maximum detail of any number is the 15 most significant digits. Therefore, if I have max Currency number +922,337,203,685,477.5807 and I put it to decimal data type I’ll get +922,337,203,685,477 which means that in all cases for large numbers I lose some precision in less significant digits. There is no proper implementation in SSAS for working with large numbers and preserving precision.

In addition, sum function behaves a bit weird when combining big and small numbers. (I hope someone would be able to explain to me a method for how it calculates the number seen in the top level of the hierarchy)

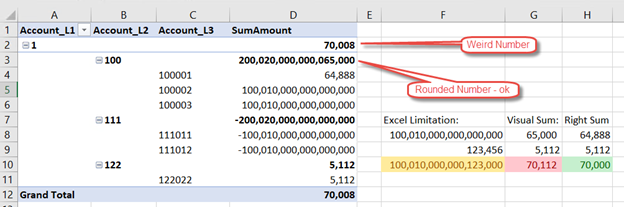

Example:

I have prepared a small sample with Hierarchy to show the behavior of such a calculation. In our case we are working with Chart Of Account where there is high chance the number will be in different branches of hierarchy with positive and negative amounts, therefore at a certain level it will go to 0.

We have large numbers in two branches of hierarchy – always the same number just with the negative sign. Then we have some small numbers in some parts of the hierarchy.

- Number in D3 is ok as it sums big and large numbers and therefore it needs to switch to precision of 15 digits.

- Number in D2 is odd as it’s not what we would calculate out of the numbers which are visible (red cell G10) nor the right number (H10 – green cell).

- Yellow cell F10 just shows excel itself has same issue with Large numbers.

Solution

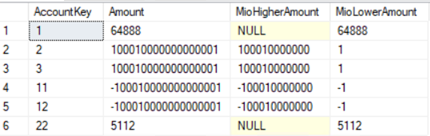

After thinking about how to solve this and after consultation with Marco and Kasper, the only option would be to split values to smaller and larger parts. Let’s see the source values of the table with prepared split:

- Amount – is original value

- MioHighAmount – is part of the number which is larger than 1,000,000

- MioLowerAmount – is part of the number which is lower than 1,000,000

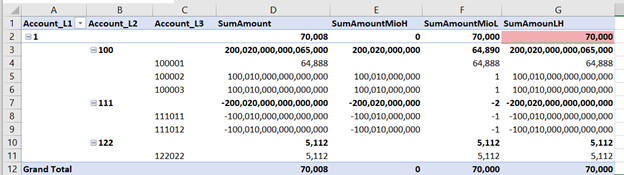

The core of the solution is to sum large and small numbers separately with SUM function. And then sum them in DAX as two different measures. This way we lose as little precision as possible at the last stage of calculation. See red cell in example in Excel:

Dax Formulas:

| SumAmountMioH := SUM ( Journal[MioHigherAmount] ) SumAmountMioL := SUM ( Journal[MioLowerAmount] ) SumAmounLH := ([SumAmountMioH] * 1000000)+ [SumAmountMioL] |

Dax formulas are formatted with DAXFormater.

Conclusion

I did not provide a solution to preserve exact precision for large numbers. I did demo of weird behavior during aggregating large numbers. And I have provided a workaround to lose precision in the last step, which resulted in much more precise numbers in aggregated values. Unfortunately, there is currently no way to work with large numbers with full precision in SSAS Tabular.