I am sure you have heard of the term; garbage in garbage out. This maxim is even more true when it comes to preparing, processing and analysing data for reporting purposes. In this blog post, I will focus on some of processes of data quality and then share some best practices for data quality management.

The data quality process is the set of activities that ensure data is correct/accurate and complete. These activities include checking data prior to being used for reporting and also alert systems to report bad data so it can be corrected or reviewed.

Best Practices for Data Quality

Cleansing

This is the process of correcting mistakes within a database by amending existing data based on pre-defined business rules. An example of this could be ensuring there are no duplicate customer records (e.g for a company in the retail industry), that all subscriptions refer to existing packages or services –(e.g for a company in the telecom industry).

Standardization

This ensures that all completed data entries are in a consistent and in an agreed format. An example of this to ensure all employee reference numbers are in a consistent format based on a predefined business rule.

Enrichment

This best practice ensures the comprehensiveness of data within a database. This involves enhancing data by comparing it to a lookup or third-party content and appending an attribute (column in a table) where appropriate. An example of this is determining area codes of employees based on their location.

Matching and Merging

Data matching is the process of determining that one data item is the same as another data item. This is used to identify duplicate entries within one system or multiple systems and then linked or merged as per the business requirement.This process is invaluable for matching character-based data types as numeric or datetime data types can easily be matched by using the equal sign (=). In subsequent blog posts, I will demonstrate how this can be done using SQL Server Integration Services.

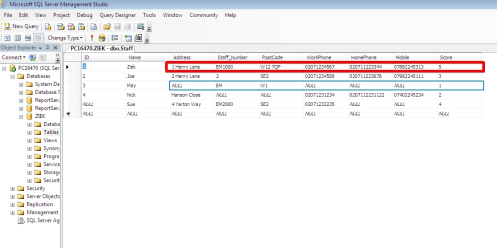

Scoring

This is a technique that is increasingly used to enhance data quality. It involves assigning a number to every row of data in say a table. This number provides a clue about the quality of the data in the row and helps to decide on the appropriate action to take. For example an accurate record may have a score of five while an incomplete or invalid record would have a score of one. Any score between one and five would indicates the degree of confidence the user/business has in the record and also which appropriate action to take. The action could be an amendment or a review of the record.

From the figure above, the row with the completed and accurate (as defined by the business rule) has a score of 5. While the row with incomplete and data in wrong format has a score of 1. It is also clear from the figure that all scores from 1 to 4 requires an action from those responsible for ensuring data quality.

Thanks for reading and please stay tuned as I share how some of these best practices discussed above can be achieved using SSIS in subsequent posts.