A while ago I blogged my about my approach to service packing a SQL Server 2008R2 active active cluster. Time to apply SP1 to SQL 2012 on top of a Windows 2012 failover cluster. The basic approach is similar but there are a couple of subtle differences.

The key to the approach with 2008 was to patch the passive node, while not allowing the SQL instances the option of failing back to the passive node. In Windows Server 2008 this was possible through the Failover Cluster Adminstration GUI tool. I haven’t seen any literature suggesting that this approach is no longer valid – although I have seen moves towards ‘cluster aware’ updating. But this is only available from SQL 2012 SP1, so to apply SP1 I’m going to stick to my tried and tested method.

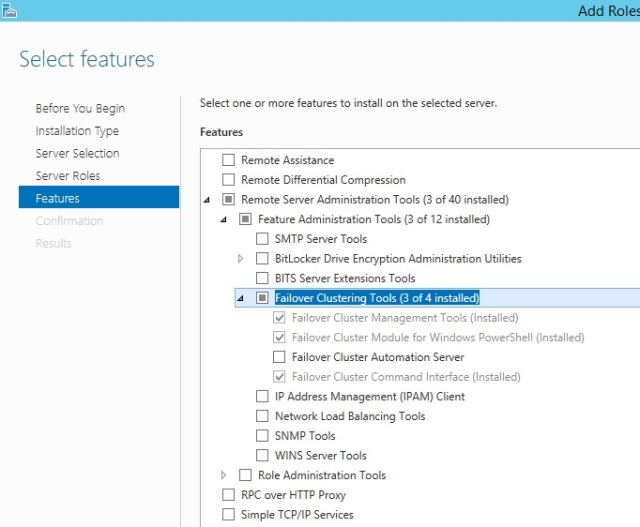

1.The management of possible failover partners is no longer available via the GUI and needs to be done with cluster.exe. Cluster.exe is not required for the configuring of a server 2012 failover cluster so it may or may not be configured in your environment. You can add the Failover Cluster Command Interface feature using the server manager tool.

2. Make a note of all node names (and/or IP addresses), SQL Server virtual names along with preferred nodes. If there are more than three nodes you may need to also take note of possible owners for each SQL resource group. For my example assume that I have a cluster with node1 and node2, SQL1 normally lives on node1 and SQL2 normally lives on node2.

3. To start with a clean slate and ensure any previous updates are completed both nodes should be restarted if possible. Choose the physical node that you you want to patch second and restart that node (in my example node2).

4. Restart the node you want to patch first (node1). This will mean that both active SQL instances are now running on node2. Some restarts will be essential, but you could avoid the first two restarts if you need to keep downtime to a minimum and just fail SQL1 over to node2. The main point here is to always patch a passive node.

5. Use cluster.exe to remove node1 from the possible owners lists of SQL1 and SQL2. This means that neither SQL instance can fail over to node1 while it is being patched. The relevant commands are:

- Check the possible owners: cluster.exe resource “SQL1″ /listowners

- Remove passive node from possible owner list of all instances: cluster.exe resource “SQL1″ /RemoveOwner:node1

- Confirm: cluster.exe resource “SQL1″ /listowners

(repeat for SQL2)

6. Run the service pack executable on node1 and apply SP1 to both SQL instances.

7. Restart node1.

8. Add node1 back into the possible owners lists of SQL1 and SQL2 using:

- cluster.exe resource “SQL1″ /AddOwner:node1

(repeat for SQL2)

9.Fail both instances over to node1. You could do this by restarting node2 or using powershell to suspend node2 with the drain parameter. Once the instances have successfully moved and you have removed the possible owners – resume the node to patch.

10. Repeat steps 5 – 8 on node2.

11. Add node2 back into the possible owners lists of SQL1 and SQL2 and fail both instances over to node2. Check that the build level is correct and review the SQL Server error logs.

12. Fail SQL1 over to node1. Check build levels and SQL Server error logs.

I want acknowledge Sean Gallardy who helped clarify my thinking by responding to my msdn forum question.