An algorithm with the word “regression” in its name seems an unlikely candidate to move forward with this series of self-tutorials in SQL Server Data Mining (SSDM), but don’t let the name fool you: Linear Regression is a powerful data mining tool that represents a logical second step in any workflow, including the climb up this creaky stairway. It can also be considered a sort of building block toward Decision Trees in one direction, as well as Logistic Regression and Microsoft’s Neural Network algorithm in another. Perhaps best of all, it is complex and powerful yet relatively easy for statistical laymen to understand.

I had my share of regressing in a completely different way around the holidays, when a variety of obstacles prevented me from starting my discussions of the nine Microsoft algorithms on schedule. One of the problems stemmed from my own inexperience with nested tables, a feature I’d only used previously with the Sequence Clustering algorithm, which led to some serious performance problems that I discuss in more depth in A Rickety Stairway to SQL Server Data Mining, Part 0.2: How to Dig Out of a Data Mining Cave-In. Keep in mind that I’m not posting this because I’m an expert, but because I’m not; I’m trying to kill several birds with one stone by providing some free press to the most under-utilized parts of SQL Server and forcing myself to think with more clarity about data mining as I write this. I’ve already seen first-hand how a statistical novice like myself can garner substantial benefits with a minimal investment of time, energy and processing power. As discussed in A Rickety Stairway to SQL Server Data Mining, Algorithm 1: Not-So-Naïve Bayes a few days ago, I was able to dig out useful data from the simplest SSDM algorithm, Naïve Bayes, by feeding it just 720 rows of denormalized data. There were six tables in my original schema, populated by polling the dynamic management views dm_exec_query_stats, dm_io_pending_io_requests, dm_io_virtual_file_stats, dm_os_performance_counters and dm_os_wait_stats, along with the system stored procedure sp_spaceused, every minute for three days. The intention was to cause IO stress on my wobbly development machine, so that I could discuss IO data that professional DBAs can already understand, but which I need more experience with, thereby killing off two more birds with another stone.
The six tables were originally joined to a single RecordTable that recorded data about each particular poll, but because of my mishap with nested tables (an explosion in the number of attributes ground processing to a halt), I ended up using a denormalized view of a subset of the data. Instead of feeding SSDM all of the tables, some of which have more than a million rows, I merely created a view inner joined on the records dm_io_pending_io_requests, dm_io_virtual_file_stats and dm_os_performance_counters had in common, which amounted to a mere 720 rows or so. Nevertheless, Naïve Bayes was able to return useful data that a DBA could use to track down performance problems. It also turned up some strong relationships that seemed obvious, but were nonetheless useful in confirming that the algorithm was doing its job despite being fed such a minimal supply of information.

As we shall see, Linear Regression was also able to shed light from a different direction on interesting links in our data. Even its data inputs are handled differently, so it is not surprising that there were some differences between the columns it identified as interesting and those returned by Naïve Bayes. The latter proved to be handy despite the fact that its inputs must be discretized, or placed into buckets that have no numeric relationship with each other; in other words, SQL Server will treat two ranges of values from 1 to 5 and from 6 to 10 as different states of an object, in a sort of apples vs. oranges comparison, not as part of a continuum. Despite this manifest blindness, or “conditional independence,” the utility of Naïve Bayes is such a curiosity that it is still a subject of academic research. That makes one wonder just how much more benefit we might receive from an algorithm capable of discerning that numeric ranges are involved, as Linear Regression can. As discussed in more detail under the subheading Discontent with Content in my introductory post, A Rickety Stairway to SQL Server Data Mining, Part 0.0: An Introduction to an Introduction, SQL Server users from the relational world may be unaccustomed to differentiating their data by its meaning, or Content type. The nine SSDM algorithms have nine possible Content types: Discrete, Discretized, Key Sequence, Key Time, Key, Table, Continuous, Cyclical and Ordered. Key Sequence and Key Time can be used only with the Sequence Clustering and Time Series algorithms, whereas the Cyclical and Ordered types are allowed with Linear Regression but not processed any differently than other Content types. The Table type is for nested tables, which we will discuss in tandem with Sequence Clustering later in this series, by which time I hope to have more experience with them.
Since Linear Regression allows input columns with a Content type of Continuous, we can follow the intended DSV schema outlined in post 0.2 much more closely than we did with Naïve Bayes, because it will not be necessary to discretize our Continuous columns. On the other hand, we will have to mark the columns which should be Discrete, since they identify objects or states rather than ranges of numeric values, as Continuous; essentially, we’re left with the opposite of last week’s dilemma, in that we’ll have to treat some of our data as if it had an inappropriate Content type. The lion’s share of the columns in the DSV represent numeric ranges of some kind that imply both a rank and an order, although we do have a few that are naturally Discrete, such as numbers that identify database files and other objects like performance counters and wait types.

Since I was working with a very small subset of my data, and one of the common uses of Naïve Bayes is to identify interesting columns to analyze with more resource-intensive algorithms, I have run several more trials off-camera on much larger datasets since the last tutorial. I tried to push the envelope by combining five of the six dependent tables with their parent in a denormalized view, but my machine couldn’t quite handle the load of processing 92 million rows, although it performed more admirably than during my misadventure with nested tables. So I cut back to three separate views, in which sp_spaceused, dm_os_wait_stats and dm_exec_query_stats respectively were left joined to the parent RecordTable and the three tables used in the last tutorial, dm_io_pending_io_requests, dm_io_virtual_file_stats and dm_os_performance_counters. The mining structures and models I built on each still had high row counts of 142,080, 7,684,160 and 3,887,072 respectively, but my shaky old machine was able to handle each of them in less than 15 minutes. The only hiccups I ran into were cardinality warnings on a couple of columns, but I did not bother to adjust MAXIMUM_STATES to see the less popular states that SSDM eliminated through the mechanism discussed in post 0.2. Some of the interesting column relationships these trials returned were that IOStallReadMs strongly predicts NumOfBytesWritten, NumOfWrites, NumOfReads, NumOfBytesRead and IOPendingMSTicks; IOStall predicted the first three of these, along with IOStallReadMs, and was predicted by SizeOnDiskBytes and FilehandleID; IOStallWriteMs had strong relationships with many of these columns, including predicting IOStall and being predicted by SizeOnDiskBytes and Rows; while the Data and CntrValue columns were related to NumOfWrites and IOPendingMsTicks, as well as IndexSize, Reserved, Unused and Rows from the sp_spaceused table.
Of all the Discrete objects in the first view, FileHandleID seems most important according to the output of Naïve Bayes, followed by DatabaseID and FileID, then PerformanceCounterDBID. With the WaitStats table, MaxWaitTimeMs closely predicted SampleMs and SignalWaitTimeMs, and was predicted by IoPendingMSTicks, WaitingTasksCount and WaitTimeMS; SignalWaitTimeMS closely predicted SampleMS, NumOfReads and NumOfWrites and was predicted by IOStallWriteMS; and WaitingTasksCount predicted SampleMS, NumOfReads, IOStall, IOStallWriteMS, IOPendingMSTicks, MaxWaitTimeMS, NumOfWrites and SignalWaitTimeMS. As usual, the strongest relationships were often within the tables that the third view referenced, such as some close links between dm_exec_query_stats columns like LastPhysicalReads, LastLogicalReads, LastRows and TotalPhysicalReads. There were also some interesting relationships, however, between other columns like IOPendingMsTicks and IOStall on the one hand and MinLogicalWrites, LastLogicalWrites and LastLogicalReads on the other. As of yet no close ties have emerged between these various IO columns and a particular performance counter or wait type, but some of the objects referenced by dm_exec_query_stats did have strong links. The discrete columns with the closest relationships to other IO columns were FileHandleID, DatabaseID, FileID, QueryTextID, QueryPlanHashID, QueryHashID, PlanHandleID and ObjectId, in that order, while IOCompletionRequestAddress, PlanGenerationNum, SQLHandleId and SchedulerAddress were inconsequential. No links have yet emerged from any of these views to the calculated column MinuteGap, which I hoped would explain why the SSIS job this data came from was often delayed by intervals of more than a minute, as discussed in previous posts.
On the other hand, the relationships we’re already seeing between various measures of IO stress and particular database files and queries might be quite useful in the long run, by helping us pinpoint exactly which objects are dragging down performance. It would even be quite feasible, for example, to develop more refined measures that check to see if a particular DDL statement or a specific string within a query is causing unexpected performance hits. Thanks to this preliminary data returned by Naïve Bayes, we can better estimate which input and predictable columns will be worthwhile to feed into more resource-intensive algorithms. Linear Regression straddles the line between both categories, since it can also be used to make preliminary investigations of data, yet is a step up from Naïve Bayes in computational capabilities with only a minor hit in performance.

The Progression of Regression

Linear Regression is simple enough that high school and college students fulfilling their math prerequisites are often introduced to it, so it is fitting that the origins of the algorithm are likewise humble and basic: it grew out of sweet peas. A longer, more complete answer is that Sir Francis Galton (1822-1911) laid the foundations for both regression analysis and Karl Pearson’s well-known statistical tool, Pearson Product Moment Correlation, when he used forerunners of both as tools to study the heredity of sweet peas.[1] It would be unjust to deliver this colorful anecdote without pointing out, however, that Galton was a racist and a pioneer of the eugenics movement (he actually invented the term) which inspired modern monsters like Hitler and Nazi admirer Margaret Sanger in the 20th Century. His career was littered with shameful quotes, such as how he wanted “to give to the more suitable races or strains of blood a better chance of prevailing speedily over the less suitable than they otherwise would have had.”[2] Galton showed flashes of brilliance, like the development of these statistical tools, but tragically put them in the service of a field that was both vile and the epitome of junk science. He was stark proof of my controversial contention in A Rickety Stairway to SQL Server Data Mining, Part 0.1: Data In, Data Out that many famous names in the hard sciences and mathematics have been terrible philosophers; for reasons I speculated on in that post, it seems that brilliance of that particular type is often accompanied with a distinct risk of losing common sense. It is almost as if their unnatural talent also makes them more prone to abnormally bad reasoning than ordinary folks, once they apply their minds to problems outside of the bounds of science and math. 
This may seem like a superfluous point, but we must keep the big picture in mind at all times: it would be better not to do data mining at all if the results can’t be interpreted in the light of common sense, or worse yet, are applied to some nefarious purpose. History has shown that there is a palpable danger of people highly skilled in these fields exercising terrible judgment when applying them to the real world, so we must be exceptionally cautious. Computerized, automated data mining might relieve this hazard by lifting the burden of doing dry calculations, but it might also merely lead us much faster into far greater lapses of judgment, if no critical thinking and wisdom accompany it. That requires a completely different skill set than the hard sciences and mathematics are accustomed to. In other words, the name Linear Regression might imply the straight and narrow path, but it can be put to crooked purposes.

On the assumption that my readers are more trustworthy than Galton, I’ll do my best to explain the algorithm as simply as possible: begin by thinking of a scatter plot, which is the ideal visualization tool for this particular algorithm. Basically, you start by filling a graph with scattered data points, which are pairs of input variable values and output variable values assigned to the horizontal x axis and vertical y axis on the graph. Then a common statistical method called least squares is applied to plot out the straight line that fits all of the points best, i.e. the one that minimizes the sum of the squared vertical distances between the points and the line; it’s just a sophisticated version of an average of all the values of the data points, really. I’m going to avoid overuse of equations in this tutorial series because it is aimed at database professionals who are less likely to be able to interpret them, but in this case, the results are expressed in a regression equation that is easy to understand: y = ax + b. The slope of the line formed by the calculation is represented by a, while the intercept, or the value for y at the place in the graph where x is zero, is represented by b. As we shall see, all of these values can be retrieved from the mining model, but a note of caution is in order: Books Online (BOL) states that SQL Server adjusts each intercept so that it indicates a comparison with the average for the predictable output attribute y. This is because some values for the intercept are of little real-world worth, such as the perfect example BOL cites of calculating a person’s income at age zero.
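To make the least squares calculation concrete, here is a minimal sketch in plain Python. It is not SSDM's internal implementation, only the textbook closed-form solution for a single input, and the sample points are invented so that they fall exactly on a known line.

```python
def fit_line(xs, ys):
    """Return (a, b) for the least squares line y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations and sum of squared deviations of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    a = sxy / sxx            # slope
    b = mean_y - a * mean_x  # intercept: value of y where x is zero
    return a, b


# Hypothetical data points lying exactly on y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

a, b = fit_line(xs, ys)
print(a, b)  # 2.0 1.0
```

Note that the fitted line always passes through the point formed by the two averages (here x = 3, y = 7), which is why an intercept can be restated relative to an average without changing the line itself.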

Aside from this adjustment, the algorithm is basic enough that high school and early college math students are sometimes exposed to it. One of Linear Regression’s few drawbacks arises from its own popularity and simplicity, which have made possible an unnecessarily confusing proliferation of terminology. There are simply far too many synonyms for these terms. In the Wikipedia article “Dependent and Independent Variables,” for instance, seven synonyms are given for the input variable x (regressor, controlled variable, manipulated variable, explanatory variable, exposure variable, risk factor and feature) and eight for the output variable y (regressand, response variable, measured variable, observed variable, responding variable, explained variable, outcome variable and experimental variable).[3] The input may also be referred to as the independent variable or predictor, while y may also be referred to as the output or “dependent” variable.[4] Linear Regression as a whole is sometimes referred to simply as “least squares,” after the name of the calculation that makes the regression equation possible.[5] To complicate matters further, some mathematicians and scientists employ symbols other than the standard x, y, b and a used here to write their regression equations, including Greek letters. For the sake of simplicity, I will use the variables from the equation y = ax + b and the terminology for x and y which I have used throughout this series, i.e. input and predictable output.

Like many other statistical tools, Linear Regression is a fairly simple building block which can be used to construct more sophisticated means of analysis. Weighted Least Squares Regression is a refinement in which the inputs are weighted to correct the fact that in a simple regression, all of the data points in the scatter plot are treated equally, regardless of how important to the equation they really are.[6] Nonlinear Least Squares Regression adds some optimizations to deal with non-linear functions, i.e. those that don’t plot well on a straight line the way an ordinary regression equation demands. Locally Weighted Polynomial Regression (LOESS) is a more recent invention that dates back only to 1979, when more sophisticated weighting techniques based on polynomials became feasible due to continual improvements in computer processing capabilities. Microsoft’s implementation of Linear Regression is a form of both Multiple Regression, in which there is more than one input, or x axis, and Multivariate Linear Regression, in which there is more than one output, or y axis. It is actually a stripped-down version of Microsoft’s implementation of the Decision Trees algorithm, in which that mining method’s MINIMUM_LEAF_CASES parameter is set so that the regression lines do not split into separate branches. This post will be used as a stepping stone towards discussions of Decision Trees and Logistic Regression in the next two installments of this series.
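The weighting idea can be sketched in a few lines of Python. This is only the textbook weighted least squares formula for one input, not anything SSDM exposes; the function name, data points and weights are all made up for illustration. Giving a point a weight of zero removes its influence entirely.

```python
def fit_weighted_line(xs, ys, ws):
    """Return (slope, intercept) minimizing sum(w * (y - (slope*x + intercept))**2)."""
    total_w = sum(ws)
    # Weighted averages of the inputs and outputs.
    mean_x = sum(w * x for w, x in zip(ws, xs)) / total_w
    mean_y = sum(w * y for w, y in zip(ws, ys)) / total_w
    sxy = sum(w * (x - mean_x) * (y - mean_y) for w, x, y in zip(ws, xs, ys))
    sxx = sum(w * (x - mean_x) ** 2 for w, x in zip(ws, xs))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical points: four lie on y = 2x + 1, the fifth is far off the line.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 30.0]

equal = fit_weighted_line(xs, ys, [1.0, 1.0, 1.0, 1.0, 1.0])
down_weighted = fit_weighted_line(xs, ys, [1.0, 1.0, 1.0, 1.0, 0.0])

print(equal)          # slope near 5.8, intercept near -6.6
print(down_weighted)  # (2.0, 1.0)
```

With equal weights the stray fifth point drags the slope from 2 to roughly 5.8; once its weight drops to zero, the original line is recovered.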

The latter is designed to deal with functions which should be constrained within a particular range, such as on a rating scale from 1 to 10, in which case adjustments are added to form an S-curve rather than a straight line. Linear Regression works best on data that we expect to fall along a straight line. That is one of the reasons it is used so frequently in projections, particularly in the field of economics[7]; simply supply another value for x and you can easily calculate the next expected value for y. The algorithm is also ubiquitous, well-understood and works well for small datasets.[8] It has several disadvantages, however, including the fact that it doesn’t model non-linear data well. This can lead to incorrect extrapolation of the results when the regression equation is applied to input variables whose outputs are not yet known. A simple least squares regression equation can also be easily thrown off by a few unusual outlying values.[9] As I have touched on briefly elsewhere in this series, it may be possible to optimize SSDM by sampling our data before mining it, to see what kind of data distributions our columns have, perhaps with the help of Multidimensional Expressions (MDX) functions with SQL Server Analysis Services (SSAS) cubes or with some of the exciting new windowing functions in T-SQL. Although I have yet to experiment much with this, it may be possible to set optimal values for our discretization parameters in this way, or to correctly forecast which of our columns Naïve Bayes is likely to return good results for, given that it operates best on data that is highly uniform or highly skewed rather than in between both extremes. In the case of Linear Regression, we could make use of MDX’s five linear regression functions or write some of our own in T-SQL to sample our relational data. Another subject of future experimentation might be a benchmarking contest pitting SSDM’s version of Linear Regression against the corresponding MDX functions to see which scales best. It is highly likely, however, that any MDX or T-SQL functions we could write would not be able to compete with the remaining seven SSDM algorithms we have yet to survey. It might be useful to perform sampling on our cubes or relational sources for each of them, but head-to-head competition by reinventing SSDM’s functionality is probably out of the question.
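The extrapolation hazard mentioned above is easy to demonstrate. The sketch below fits a straight line to invented data that actually follows a curve (y = x squared), then projects it well beyond the sampled range; it uses the same textbook single-input formula as ordinary least squares and is not tied to SSDM, MDX or T-SQL in any way.

```python
def fit_line(xs, ys):
    """Return (a, b) for the least squares line y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    a = sxy / sxx
    return a, mean_y - a * mean_x


# Hypothetical non-linear data: y = x squared, sampled only for x between 0 and 4.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [x ** 2 for x in xs]

a, b = fit_line(xs, ys)

# Project far outside the sampled range and compare with the true curve.
x_new = 10.0
predicted = a * x_new + b
actual = x_new ** 2
print(a, b)               # 4.0 -2.0
print(predicted, actual)  # 38.0 100.0
```

Inside the sampled range the line is a tolerable approximation, but at x = 10 it predicts 38 where the underlying curve gives 100, which is the kind of error a quick look at the data distribution beforehand might have flagged.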

Regressors, Representations and Results

One potential use for preliminary sampling of our data might be to identify the most interesting input columns, so that we can set certain flags and parameters that affect how Linear Regression handles them. In addition to the NOT NULL mining flag that is available for all algorithms (which is not important for our data, because it has no nulls), Linear Regression has a unique REGRESSOR flag that indicates to SSDM that a column should be used as an input attribute, i.e. a “regressor” on the x axis. I intended to perform an experiment by creating identical mining models where this flag was set on certain columns, but discovered that it was automatically set on all of my inputs and outputs anyway. So I did the next best thing and turned the flag off for about half of the attributes in the second mining structure, which cut the processing time on that model down to about three or four minutes. SSDM breezed through all 5,378,912 cases in that structure, or 70 percent of the 7,684,160 rows after 30 percent had been reserved for model testing as usual (I left HoldoutMaxPercent, HoldoutMaxCases and HoldoutSeed at their default values for all the trials in today’s post for simplicity’s sake). The results seemed identical to another model I created in which the same input columns were set to Ignore and the same predictables were set to PredictOnly: results were retrieved for all of the predictables no matter how the REGRESSOR flag was set, but there were no comparisons against input attributes with the flag turned off. It is possible that this modeling flag is of more use with Decision Trees, which is a souped-up version of Linear Regression. BOL’s documentation refers to splits being caused under certain conditions when this flag is used, but splits cannot occur at all with Linear Regression.

We can also set the parameter FORCE_REGRESSOR to make SSDM perform calculations on a particular attribute that might otherwise be discarded. This is where we wade into the thorny issue of whether or not SSDM is applying feature selection under the hood at times without the user being aware of it, until an attribute they’re particularly interested in is not included in the results. For example, I was particularly interested in whether or not WaitTypeID and PerformanceCounterID would be associated with any specific values for our IO measures, but they were not included in the output in any of the first three models, even when the REGRESSOR flag was set and they were set to Input rather than Ignore. After using the FORCE_REGRESSOR parameter on WaitTypeID, the results included comparisons between my predictable attributes and that input column, at the cost of another 45 minutes in model processing time and splits akin to Decision Trees results, which were probably just clutter.

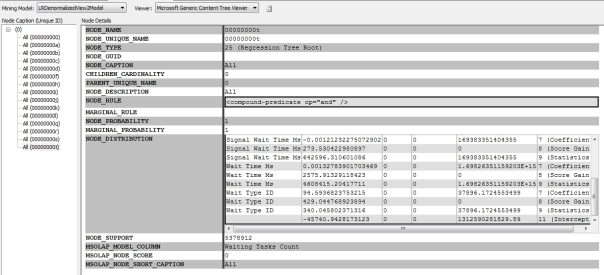

Although I am not yet certain, this behavior is probably due to feature selection occurring under the hood, which can be verified by checking the model content and setting certain parameters. If you try to set the algorithm parameters in the SQL Server Data Tools (SSDT) properties window, you will see several others not mentioned in the BOL documentation on Linear Regression, like COMPLEXITY_PENALTY, MINIMUM_SUPPORT, SCORE_METHOD and SPLIT_METHOD. These are specific to Decision Trees and if we were to set them, they would perform splits in our regressions, which means for all practical purposes we would be using that algorithm instead. MAXIMUM_STATES, which we made use of with Naïve Bayes, is not available with Linear Regression. The key ones for feature selection, as always, are MAXIMUM_INPUT_ATTRIBUTES and MAXIMUM_OUTPUT_ATTRIBUTES, which I left at their default values of 255. This means feature selection should not have been invoked on the first two structures because we don’t have that many attributes in our model. On my first trial of the third structure, which has a lot more columns drawn from dm_exec_query_stats, I received a warning that feature selection was applied, but this did not occur with the other two structures. Or so I thought: not only were certain input columns missing from the results on the first trials, but a check of the mining model content revealed that their Interestingness Scores (which are the only means of feature selection available with Linear Regression) were exceptionally low. Three of the four methods of feature selection outlined in post 0.2 apply only to Discrete or Discretized columns, so they can’t be used here because Linear Regression only makes use of the Continuous, Key and Table Content types. This limits us to a single method called the Interestingness Score, in which attributes are assigned higher weights if SSDM determines that they are non-random.
One of the limitations of SSDM is that it’s difficult to peek under the hood and see exactly why certain attributes were selected over others through the other three methods of feature selection, but with Linear Regression we can retrieve the Interestingness Score through a DMX query, which we will get into after these initial posts on the nine algorithms are complete. For now we will stick with the simpler method of using the Generic Content Viewer, as depicted below:

Figure 1: Linear Regression Nodes in the Generic Content Tree Viewer

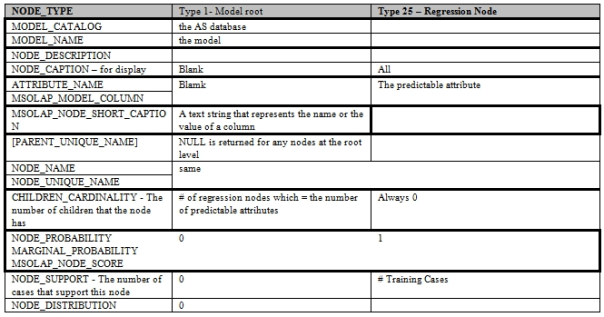

Figure 2: Metadata for LR (adapted from Books Online)

In my last post I introduced the Generic Content Viewer, which displays the results of the nine algorithms in a common metadata format, a topic I go into more detail about in part 0.1. To make a long story short, SQL Server’s Data Mining Team deserves a lot of credit for developing a format that can contain the disparate output of the nine algorithms, which is a little bit like comparing apples and oranges. The drawback of it is that the meaning of the result columns differs from algorithm to algorithm, which can be confusing. Figure 2 shows what the metadata signifies for Linear Regression, which has probably the simplest format of any of the algorithms. There are only two types of nodes in the metadata for Linear Regression, as depicted in Figure 1, where there is a single root node labeled (0) and 15 nodes below it, one for each of the 15 predictable attributes. Moreover, most of the metadata columns don’t contain any interesting information. As always, MODEL_CATALOG, MODEL_NAME and ATTRIBUTE_NAME merely signify the names of objects, or the database, mining model and predictable column respectively. NODE_NAME and MSOLAP_NODE_SHORT_CAPTION also identify objects. NODE_PROBABILITY, MARGINAL_PROBABILITY and MSOLAP_NODE_SCORE are not of much use to us with Linear Regression, while NODE_RULE and MARGINAL_RULE are not available at all. CHILDREN_CARDINALITY and NODE_SUPPORT perform their usual functions of identifying the number of children and cases a node has. The really interesting information, as usual, occurs in the nested NODE_DISTRIBUTION table. Its columns have the same names as always: ATTRIBUTE_NAME, ATTRIBUTE_VALUE, SUPPORT, PROBABILITY, VARIANCE and VALUETYPE. The significance of the ATTRIBUTE_VALUE varies, however, from row to row depending on the VALUETYPE, which can be one of the following: 1) Missing; 3) the Continuous column being predicted in that node, i.e. the y axis; 7) the coefficient, which has a 1:1 relationship with each input and is accompanied by a VARIANCE value; 8) one Score Gain for each input being compared against the predictable attribute in the node; 9) one row of statistics for each input being compared, including the average in the ATTRIBUTE_VALUE and the sum of deviations from it in the VARIANCE column; and 11) the Intercept for the regression equation. In Figure 1, we can see that WaitTimeMS has a row with a VALUETYPE of 8, in which the Score Gain in the fourth column from the left (labeled VARIANCE off-screen) is zero. This means it was in all likelihood eliminated by feature selection going on in the background, without the kind of warning SSDM generates when feature selection is applied because the number of attributes has exceeded the limits set in MAXIMUM_INPUT_ATTRIBUTES and MAXIMUM_OUTPUT_ATTRIBUTES.
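As a rough illustration of how these VALUETYPE rows fit together, the Python sketch below reassembles a regression equation from a handful of NODE_DISTRIBUTION-style rows. Everything here is an assumption made for illustration: the row values are invented, the helper names are hypothetical, and the mean-centered form of the equation (intercept plus each coefficient times the input's distance from its average) is simply my reading of the intercept adjustment described earlier, not something verified against a live model.

```python
# VALUETYPE codes as listed in the text above.
VT_COEFFICIENT = 7
VT_STATISTICS = 9
VT_INTERCEPT = 11

# Hypothetical NODE_DISTRIBUTION rows for one predictable attribute.
rows = [
    {"ATTRIBUTE_NAME": "IOPendingMsTicks", "ATTRIBUTE_VALUE": 40.0, "VALUETYPE": 3},
    {"ATTRIBUTE_NAME": "NumOfReads", "ATTRIBUTE_VALUE": 0.5, "VALUETYPE": 7},
    {"ATTRIBUTE_NAME": "NumOfReads", "ATTRIBUTE_VALUE": 12.0, "VALUETYPE": 8},
    {"ATTRIBUTE_NAME": "NumOfReads", "ATTRIBUTE_VALUE": 100.0, "VALUETYPE": 9},
    {"ATTRIBUTE_NAME": "", "ATTRIBUTE_VALUE": 40.0, "VALUETYPE": 11},
]


def build_equation(rows):
    """Collect the intercept, coefficients and input averages from the rows."""
    coefficients = {r["ATTRIBUTE_NAME"]: r["ATTRIBUTE_VALUE"]
                    for r in rows if r["VALUETYPE"] == VT_COEFFICIENT}
    averages = {r["ATTRIBUTE_NAME"]: r["ATTRIBUTE_VALUE"]
                for r in rows if r["VALUETYPE"] == VT_STATISTICS}
    intercept = next(r["ATTRIBUTE_VALUE"]
                     for r in rows if r["VALUETYPE"] == VT_INTERCEPT)
    return intercept, coefficients, averages


def predict(intercept, coefficients, averages, inputs):
    """Assumed form: y = intercept + sum(coefficient * (x - average of x))."""
    return intercept + sum(c * (inputs[name] - averages[name])
                           for name, c in coefficients.items())


intercept, coefficients, averages = build_equation(rows)
result = predict(intercept, coefficients, averages, {"NumOfReads": 120.0})
print(result)  # 50.0
```

Under that assumed form, an input sitting exactly at its average contributes nothing, so the prediction collapses to the intercept; here NumOfReads is 20 above its invented average of 100, which adds 0.5 times 20 to the intercept of 40.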

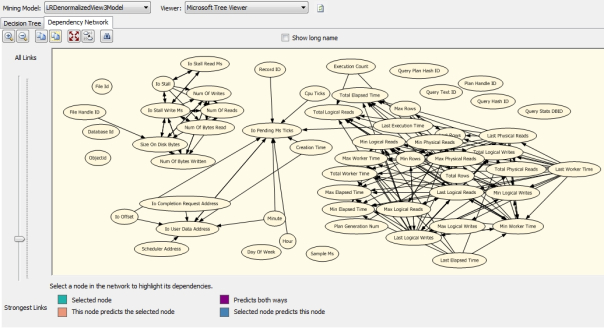

The only other visualization tools for Linear Regression are those included in the Microsoft Tree Viewer, one of which is the Dependency Network introduced in the last post on Naïve Bayes. In my last post I neglected to mention that the magnifier icons allow you to zoom in or out and that the ovals can be moved, as I have done in Figure 3 to group them by the table they came from or the type of data they represent. The data there is from the third mining model, which compares the data from polling dm_exec_query_stats against dm_io_pending_io_requests, dm_io_virtual_file_stats and dm_os_performance_counters. As you can see, there are no relationships between QueryPlanHashID, QueryTextID, PlanHandleID, QueryHashID and QueryStatsID and any of the other columns, unless we drag the slider up to show less common links. Naïve Bayes showed more promising links for these than Linear Regression has, perhaps because these columns would normally have a Content type of Discrete, which the latter must interpret as Continuous. As usual, most of the other relationships are grouped around the original tables, which is to be expected. There are some interesting relationships, however, including some cross-table ones, such as those linking LastPhysicalReads with TotalElapsedTime, TotalWorkerTime, MaxPhysicalReads, TotalPhysicalReads and MinLogicalWrites; TotalLogicalReads with ExecutionCount and LastPhysicalReads; IOPendingMSTicks with several measures of IO and time, as well as LastExecutionTime and IOPendingMsTicks; and perhaps most curiously, those binding CreationTime to IOPendingMsTicks and IOUserDataAddress.

Figure 3: The Dependency Network for the Third Mining Structure

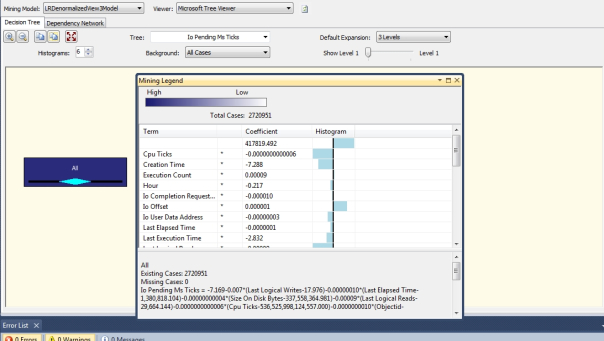

Figure 4: The Decision Tree and Mining Legend for the Third Mining Structure

The remaining visualization tool is the Decision Tree tab, most of the functionality of which is only useful with the algorithm of the same name. As far as I can tell, the Histograms, Background, Show Level and Default Expansion tools depicted in Figure 4 are only useful when splits are created, which should only occur with Decision Trees. The same goes for the blue block to the left labeled All, which would branch out into multiple nodes if we were using that algorithm; so far I have only seen it produce a single node with Linear Regression, in keeping with what BOL says about it. The only exception was when I used the FORCE_REGRESSOR parameter on WaitTypeID and created useless splits. The real action occurs in the dockable window titled Mining Legend, which varies significantly in purpose from one algorithm to the next. In the case of Linear Regression we can use it to view the coefficients, histograms and case counts for the predictable attribute selected in the Tree dropdown, when compared against the list of input attributes under the column header Term. At the bottom we can also retrieve the entire regression equation, which is difficult to read because it involves adding and multiplying all of the input attributes with various intercepts and slopes. The size of the scrollbar on the bottom right is a clue to just how long the equation for this predictable attribute is.

I used IOPendingMsTicks as the predictable attribute in Figure 4 because the Dependency Network shows that it has some unexpectedly strong relationships with CreationTime and LastExecutionTime. Why, I do not know. As discussed at the beginning of this column, we were already able to identify some other interesting relationships through Naïve Bayes. Both algorithms have already returned many relationships we’d expect to find between the columns of particular tables and also between certain IO metrics, which ought to build our confidence that these relatively unsophisticated algorithms are doing their jobs. SSDM provides about all the functionality a user can possibly desire for simple regressions with multiple inputs and outputs, although if I had a wishlist for future improvements, it would include a scatter plot visualization tab and easier ways of displaying the complex regression equations. There are many more sophisticated enhancements to Linear Regression out there in the wild world of statistics, including two Microsoft has implemented in SSDM. One is Logistic Regression, which introduces means of constraining the regression equations within certain bounds and is slated for discussion after next week’s post on Decision Trees. The other is Decision Trees itself, which features regressions that branch out rather than following straight lines. It uses many of the same parameters and visualization methods as Linear Regression, which makes it the next logical step up our rickety stairway. We can expect Decision Trees and the algorithms that follow it to shed even more light on our IO data than these two preliminary data mining methods already have.

[1] See Stanton, Jeffrey M., 2001, “Galton, Pearson, and the Peas: A Brief History of Linear Regression for Statistics Instructors,” in the Journal of Statistics Education, Vol. 9, No. 3. Published at the American Statistical Association webpage http://www.amstat.org/publications/jse/v9n3/stanton.html.

[2] See the entry titled Sir Francis Galton at the Dictionary of Science Quotations webpage http://todayinsci.com/G/Galton_Francis/GaltonFrancis-Quotations.htm

[3] Refer to the Wikipedia article “Regressor,” http://en.wikipedia.org/wiki/Regressor

[4] Some of these definitions come from a college course handout titled “Regression : Terminology and Definitions” at http://www.stat.lsu.edu/faculty/geaghan/EXST7005/Fall2003/PDF/26s%20SLR%20Parasites%20-%20supplimental.pdf

[5] See the Engineering Statistics Handbook published online by the National Institute of Standards and Technology, particularly the page at http://www.itl.nist.gov/div898/handbook/pmd/section1/pmd141.htm

[6] IBID. The particular pages devoted to Weighted Least Squares, Nonlinear Least Squares Regression and LOESS can be found at http://www.itl.nist.gov/div898/handbook/pmd/section1/pmd143.htm, http://www.itl.nist.gov/div898/handbook/pmd/section1/pmd142.htm#def and http://www.itl.nist.gov/div898/handbook/pmd/section1/pmd144.htm

[7] According to the Wikipedia article titled “Linear Regression,” it is “the predominant empirical tool in economics.” See http://en.wikipedia.org/wiki/Linear_regression

[8] See the National Institute of Standards and Technology webpage “Linear Least Squares Regression” at http://www.itl.nist.gov/div898/handbook/pmd/section1/pmd141.htm

[9] IBID.