Introduction

While working on a pipeline in Azure Data Factory (ADF), sometimes we need to combine records from multiple source files and put them together into an output file. In this article, we will discuss steps to merge multiple files.

Solution

We will use three files in the data lake storage folder: File1.csv,File2.csv and File3.csv. Each file has a similar structure, with these columns:

- SALESORDERNUMBER

- ISEXPORTSALE

- REATEDDATE

- ISSELECTED

- SALESORDERSTATUS

- CURRENCYCODE

- DELIVERYADDRESSCITY

- DELIVERYADDRESSCOUNTRYREGIONID

- DELIVERYADDRESSSTATEID.

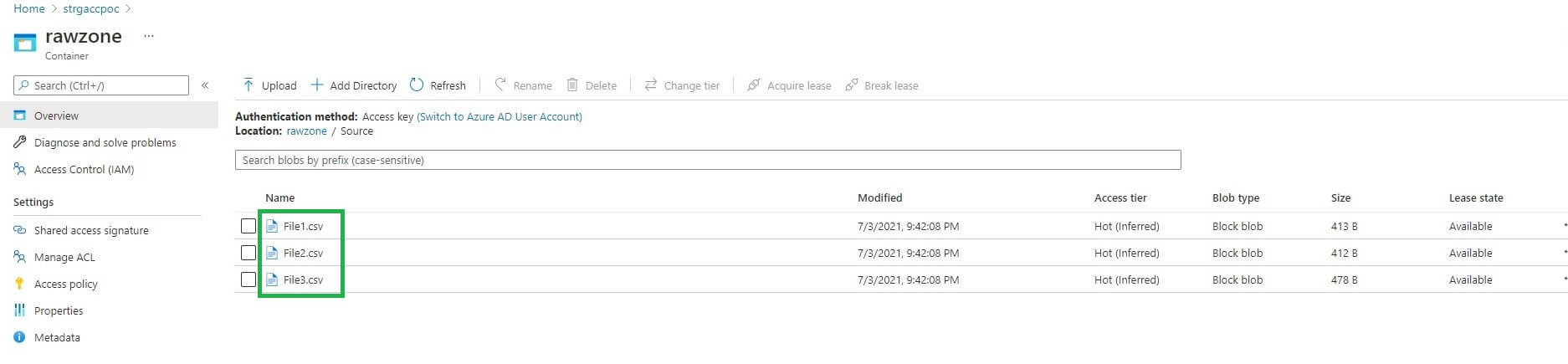

We will use these files as sources for the merging process. You can see these files in my container below.

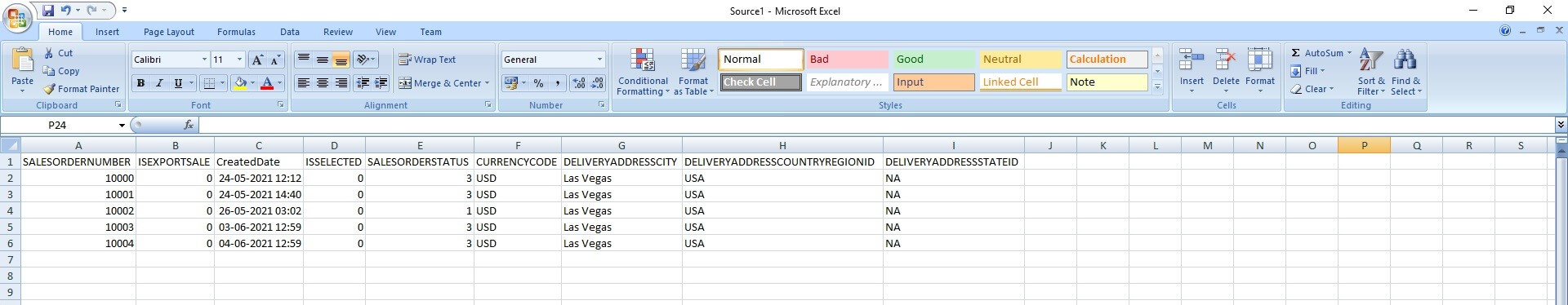

Let's see the content of the three source files. First, we have file1.csv, with 5 records:

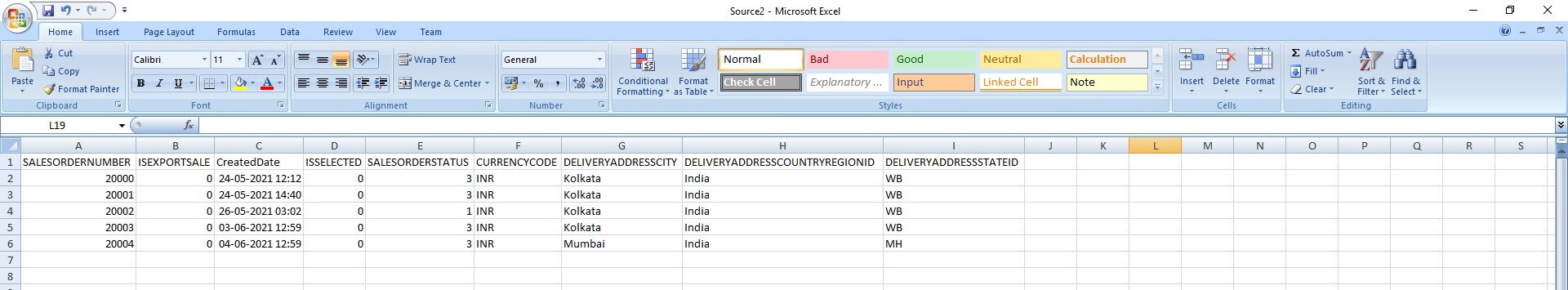

File2.csv also has 5 records, from 20000 to 2004 as the SalesOrderNumber:

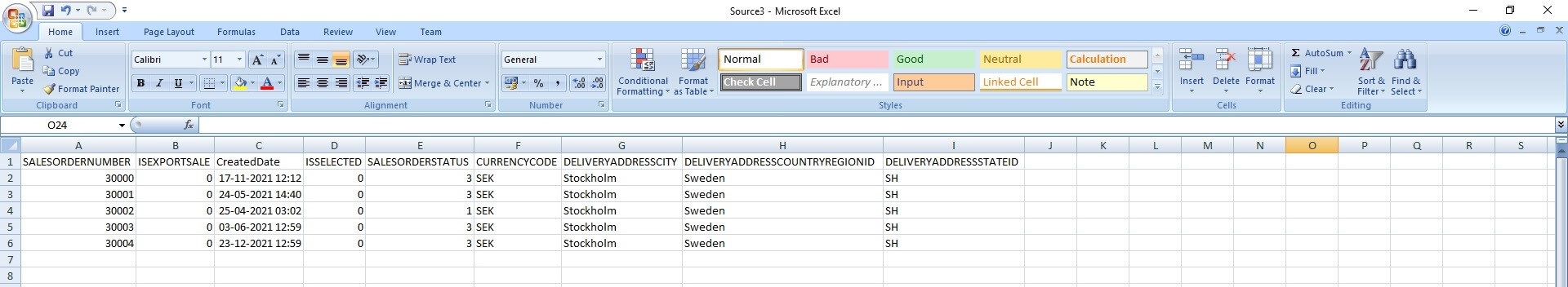

And file3.csv 5 different records:

ADF Pipeline Design

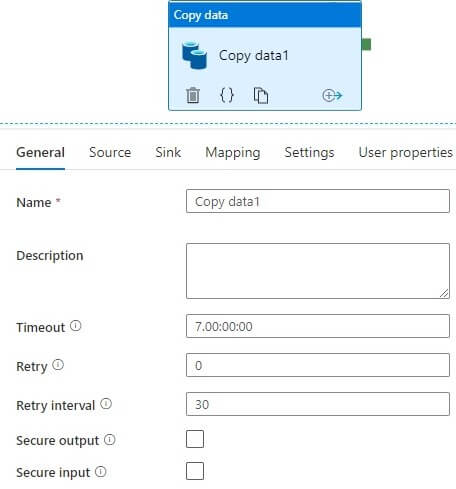

We will use copy data activity in the ADF pipeline. The basic settings are shown below.

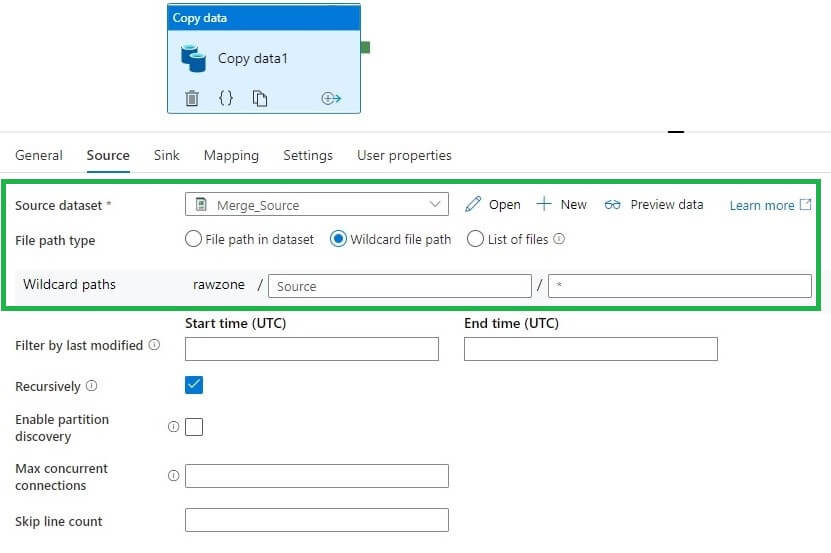

Let's edit the Source in these ways.

- We add the source data set to the folder where the above three source files are stored

- In the File path type, select Wildcard file path.

- In wildcard paths, we use an asterisk (*) for the file name so that all the files are picked.

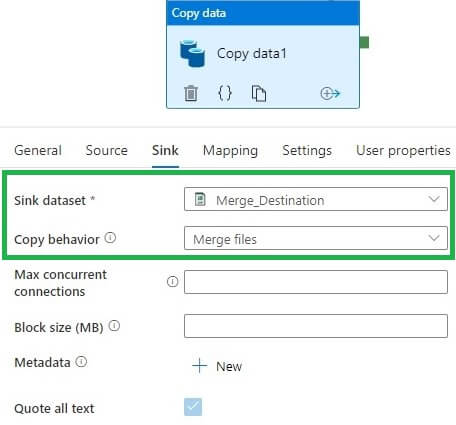

Next we edit the Sink. Here the Copy Activity Copy behavior has a Merge files option. This combines the source files' data into a single file:

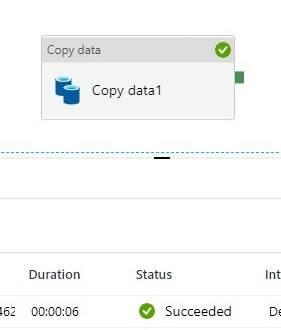

Let's run the pipeline. We see this succeeds:

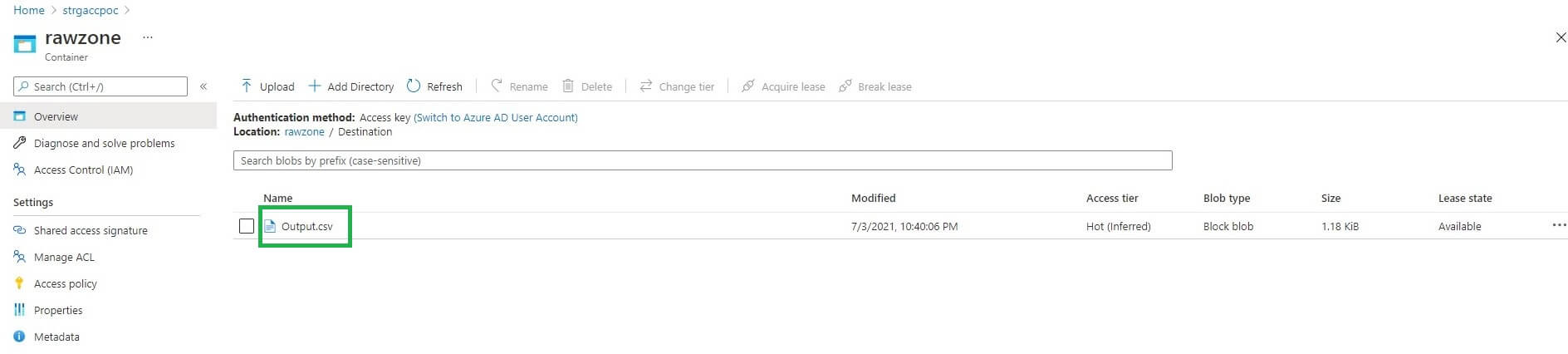

The output file is generated and named Output.csv. We see this in our container:

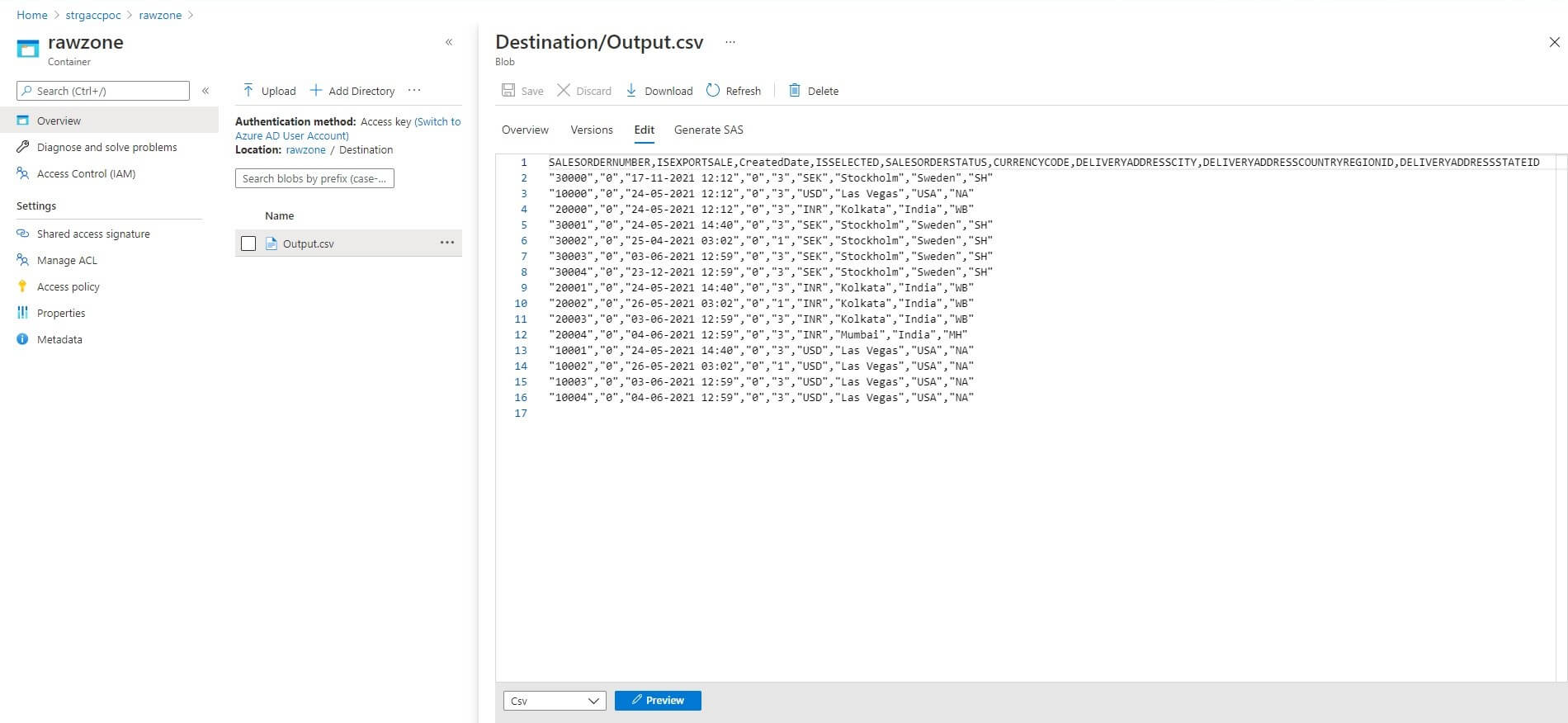

Let's open the merged file Output.csv. As expected we received 15 records in the output file, a combination of all three files:

Conclusion

In this article, we discussed steps to combine multiple files into a single file using copy data activity in Azure Data Factory. Note: All files should have a similar structure otherwise merge process may not work properly.