Storage Area Networks and the SQL Database Administrator

The intent of this article is to provide Database Administrators with an overview of what a Storage Area Network (SAN) is. If you are looking for more detailed information or performance tuning guidelines, this document is not for you. This document IS for you if you are database administrator with a database mounted on a SAN, but have never directly participated in the administration of your SAN. This document is also for you if you have never owned a SAN, but are interested in the technology or you have plans to add a SAN to your infrastructure in the near future.

Background and Experience

I am an MCSE/MCDBA and I have worked in IT for about 9 years, 6 of these I have spent in a position where I was directly responsible for the administration of one or more production database servers. In addition to Microsoft certifications, I am an Oracle Certified Associate (OCA) and I am certified by Dell to work on the Dell/EMC Clariion product line.

In a previous job, I had a single MS SQL Server which used a SAN for storage. However, the company had a separate group responsible for the design, implementation and ongoing support of our SAN system. In my current job, I wear a number of different hats, the two primary roles being Database Administrator and Storage Area Network Administrator. Currently, we have three storage systems installed, two in our production environment and one in our test environment. In raw terms, we have approximately 12 TB of storage available. I have participated in the design, installation and ongoing support of each system. We currently host three SQL database servers and one Oracle database server on the production SAN. In addition to the database servers, we currently host a pair of VMWare ESX 2.5 servers and a dedicated backup server.

SAN Components

Storage Processor

The central component of a SAN is the storage processor. Depending on the vendor and the model, the storage processor will have one or two CPUs, cache memory, two or more fibre channel ports and additional Ethernet connections for management. Most storage processors are integrated with a disk enclosure (meaning that the first bank of disks will be integrated with the storage processor). Some high-end systems separate the storage processor from the first storage enclosure. In contrast with direct attached SCSI devices, a typical SAN storage processor will have significantly more cache memory (from 512 MB to 4 GB), internal CPUs and an onboard operating system. Cache memory can be allocated to read-cache and/or write cache. Even at the low-end a storage processor will typically have a built-in battery and other redundant features to ensure that data that is written to cache is preserved in the event of a power failure or other problem.

Storage Enclosure

Again, depending on the model and vendor, you can usually add one or more additional disk arrays to a storage enclosure. This is a cheap(er) way to expand your storage capacity without adding a new storage processor.

Disks

Currently most vendors support Fibre and Serial ATA (SATA) disks. These disks are not interchangeable with regular SCSI disks. Fibre disks support higher spindle speeds are a generally better for more intense I/O operations. SATA disks are generally bigger, cost less (on a per GB basis) and are best suited to large, sequential write operations (such as backups). SATA and Fibre disks cannot be mixed in the same enclosure.

Host Bus Adapter

The host bus adapter is a card that fits into a PCI slot in the back of your server. HBAs come with one or two ports. Depending on whether you select the direct attached or switched network design, you will cable the HBA to a switch or directly to the storage processor.

Fibre Channel Switches

A fibre channel switch is similar to an Ethernet switch. By cabling a storage processor to a switch, you "open up" your storage environment to far more incoming connections than if you connected hosts directly to the storage processor. In a production environment, you will want to have at least two switches (with each server cabled to both switches) in order to provide for redundancy.

Software

Again, depending on the vendor, a SAN will come with software to enable various features. Each vendor uses their own terminology for the features that a particular software component enables. In general, however, the functionality can be broken down into:

- LUN Masking (the ability to offer up logical disks to multiple hosts)

- Remote replication (replicating data to a physically remote SAN)

- LUN Copying (the ability to instantaneously copy an entire physical LUN)

Monitoring Station

An optional component that many vendors recommend is a dedicated workstation or server to monitor the health and performance of the SAN. Monitoring can be as simple as providing a mechanism to generate automated alerts via SMTP to more robust solutions that use SNMP and interface with enterprise infrastructure solutions such as HP OpenView and IBM Director.

Storage Design

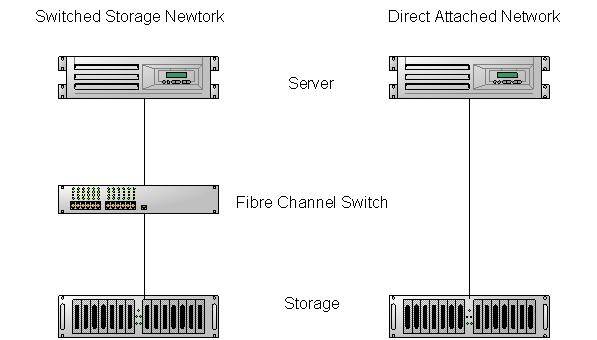

One of the first questions you will have to answer in designing your storage solution is whether you will go with a “direct attached” model or a switched network model. In the direct attached model, the server (or servers) is attached to the storage processor directly. It’s a simple solution and it works well if you only envision one or two servers being connected to the storage network. However, this design greatly limits the potential to expand your storage network.

The switched network design offers greater flexibility by connecting one or more servers to the storage processor via a fibre channel switch. The greater flexibility is made possible because storage systems have a fixed number of connections which cannot be expanded without adding additional storage processors (an expensive proposition). Switch from vendors like Brocade and McData come with 8, 16, 24 and 32 ports available. Switches can also be chained together to offer even more connection ports.

Figure 1. Switched Storage vs. Direct Attached

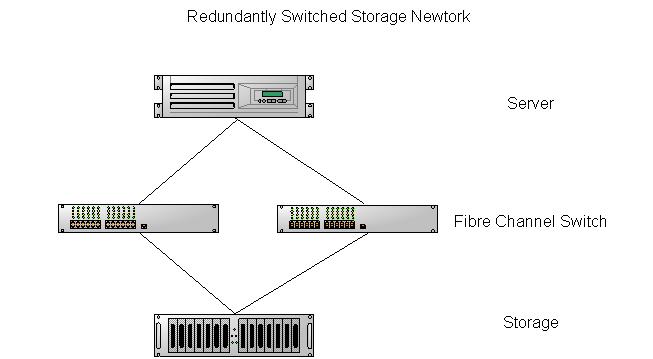

In reality, in a production environment, the storage administrator would have a minimum of two switches and each server would be cabled into each switch independently. Then, by cabling the storage system into each switch, the administrator can provide for multiple paths from the server to the storage processor. This provides fault tolerance in the event that any item in the storage network fails (such as an HBA, a cable, a storage processor, a switch or any of the fibre adapter ports).

Figure 1. Redundantly Switched Storage Network

Newer iSCSI-capable storage systems are simplifying this decision. iSCSI (or IP SCSI) allows the storage network traffic to flow over the same network as your regular Ethernet traffic; however this requires implementation of a high-speed Gigabit network. Security concerns aside, this model allows for the greatest flexibility when designing your storage solution.

Storage Design

There is far too much information about the details of designing a storage network and, in particular, optimizing the disk design for maximum I/O to effectively cover in an article this size. It is possible to invest an enormous amount of time and talent into designing the “optimum” solution for your database needs. This involves identifying high I/O tables and indexes and isolating these onto separate disks to avoid disk contention. It requires a detailed understanding of the capabilities of your SAN and a equally detailed understanding of the exact mechanics of your database. There is (in my opinion) a risk of "over-engineering" the solution to meet sizing and performance requirements that may change as your business changes and grows. Unless you have a very precise understanding of your current performance requirements and the requirements as they will be in 12 months, you are probably better off using the 80/20 rule to optimize your solution (identify the top 80% of the performance boosting "best practices" and stick with these).

If you are looking for detailed advice, consider looking at some of the white papers written by the various storage system vendors. In addition, most vendors will only be too glad to sell you consulting services for the design phase. If you have never designed a SAN solution for a database environment, I would highly encourage spending the extra money to pay for someone else to architect the solution.

The biggest administrative difference between a SAN and direct attached SCSI systems is the manner in which the disks are partitioned and offered up to host systems. In direct attached SCSI, once you carve out the physical disks into logical RAID arrays, you are done. The host system sees each logical disk as a “physical” entity. As a server administrator you can partition these disks into one or more logical entities for the server (for example by using the logical disk management component in Windows), but there are a fixed number of spindles in the array and there’s little that can be done to alter or improve performance.

With a SAN, after building the physical arrays, you still need to carve out individual LUNs from each array and assign the LUN to a host system. In fact, the RAID level of a physical array is not determined until the first LUN is built (once a RAID level has been assigned to a particular array of disks, it cannot be changed easily). With a SAN, a single physical array of disks can host one or more LUNs, and each LUN may be offered to a different host system. With most vendors, it is possible to stripe a LUN across two or more physical arrays, thus not only increasing capacity, but also multiplying the number of spindles available for I/O (the price, of course is simplicity and ease of trouble shooting).

This ability to effectively partition large physical arrays into useful blocks and offer these blocks individually to host systems is an invaluable feature of a SAN.

The environment in which I currently operate does not generally require such exacting attention to the minutiae of performance. We are not handling millions of I/O requests nor do we have issues around locking or blocking. My chief aims in designing the storage solution were to:

- Separate the log and data files onto different physical disk arrays

- Provide a separate physical disk array for backing up large databases (where applicable)

- Ensure redundancy of physical paths to the data

- Balance I/O across LUNs and physical arrays

- Balance I/O across the storage processor CPUs

- Balance performance requirements against ease of administration/maintenance

As mentioned earlier a major difference between a SAN and SCSI direct attached storage is that the SAN has one or more onboard CPUs. When a LUN is built, it must be assigned to a specific CPU within the storage processor. That CPU is then primarily responsible for handling the I/O for that LUN. If the CPU fails, then responsibility is handed off to the other CPU. Entry level SANS may only have one CPU in which case this caveat does not apply.

Backups

One of the biggest benefits of installing our SAN was simplifying our backup strategy for our large databases. We have one SQL database that is over 200 GB in size and an Oracle database that is around 80 GB. Previous experience backing up databases directly to tape has left me with a very bad taste in my mouth (and that was a smallish 10 GB database). In general, I prefer to backup to disk and then have a separate process copy the backup to tape. In cases where the sum of all databases on a server is less than 20 GB, I am perfectly comfortable with backing up the databases over the network (assuming a 100 MB switched Ethernet network is in place and not saturated with other traffic).

I felt that backing up a 200 GB database over the network was out of the question (it might be doable in a Gigabit environment, but I have no experience with this particular technology). With the SAN, I am able to carve out an additional LUN dedicated to storing backups of the production database. Furthermore, with some additional software purchased from the vendor, I am able to make an instantaneous "snapshot" copy of this LUN at a point in time and then offer this image of the backup to a different server in order to copy the image to tape. The snapshot process is not particularly easy to explain, but essentially, the process inserts a checkpoint into the source image and writes all changes after that checkpoint to a separate disk. A host that is reading the "snapshot" will, in effect, read the source LUN directly (which is still live and being updated) and then back out the changes by reading the copied data from the other disk. This minimizes the I/O requirements on the production database server, ensures that I have a current backup of the database on local disk and allows me to copy this backup to tape with minimal processing overhead on the production server.

A more expensive alternative for backups is to use a solution similar to EMC’s Timefinder SQL Interface Module (TSIM). This is only available with EMC’s Symmetrix product (although there are supposed to be third party add-ons for their Clariion line). TSIM interacts directly with your SQL Server’s Virtual Device Interface (VDI) and will enable you to make a near-instantaneous backup of your production databases. I watched with some amazement as an 80 GB database was backed up in full in about 3 seconds. TSIM handled the process of freezing the database I/O, snapping a copy of the database, inserting a checkpoint and tracking the LSN updates. This was all handled through a straightforward (though ill-documented) command-line interface. Our backup policy at the time allowed us to make a complete backup of the database every 24 hours. The backup image could then be written to tape during the day without interfering with the production server.

Using this type of product, you will still need to schedule transaction log backups in order to provide point-in-time recoverability, but the time-consuming process of making a full backup of the production database is greatly simplified. It took a leap of faith on my part to place the responsibility for these backups into the hands of our SAN administrator. You will need to have a close working relationship with your SAN administrator to make this type of solution work well.

A restore could be done by either copying the full backup over the network (a lengthy proposition) or by reversing the snapshot process to restore the disk as it was at the time the backup was performed. Once the database had been restored, transaction logs could be applied to bring it up to a specific point in time. The TSIM CLI provided an option which would permit the administrator to restore the database specifying WITH RECOVERY or WITH NORECOVERY (using the latter option permitted us to roll transactions forward to a point in time when the database was last known to be "good".

Monitoring

In general, monitoring a SAN is transparent to the database administrator. You will continue to monitor the disks (I/O, queues, response times, free space) just as you would any other physical disk. However, if you are the SAN administrator, there are several factors which you must consider when monitoring SAN performance:

- Is I/O contention of a virtual disk the result of a single server or multiple servers accessing the same physical resources?

- Is there a performance bottleneck at the switch?

- Is the performance bottleneck a result of too many virtual disks being assigned to the same storage processor?

Just like with your database server, you will need to run baseline statistics and retain this data for future reference. You will want to periodically update the baseline and project any trends forward to identify future bottlenecks before they interrupt production. In short, you must treat the storage processor just as you would any other physical server for which you are responsible.

Conclusions

As a SQL Server DBA, I greatly value the capabilities and performance that Storage Area Networks have to offer. Learning how to design, implement and manage SANs has greatly increased my value to my employer. Since large database applications are a prime candidate for being hosted on a SAN, I feel strongly that learning the ins and outs of storage management will make me a better Database Administrator now and in the future. Moreover, with the declining costs of implementing a SAN and new entry-level SANs being marketed by Dell, EMC and IBM, I believe that more DBAs will encounter SANs in the workplace.