Query tuning of SELECT

-

Hi All,

Need some help in tuning the SELECT statement.

Below SELECT query is taking a long time to execute under a specific database.

Brief description about the Issue

=============================

- We have 2 databases say db1 and db2. under db1 query completes in 5 secs but in db2 its taking 30-40 mins.

- Both databases reside on same SQL instance

- max server memory = 85 GB , OS memory = 96 GB RAM

select TOP (50000) * from EXT_data_Hist_TbL

WHERE CREATE_DATE < dateadd(year,-1,GETDATE())

- Table in qustion: EXT_data_Hist_TbL and it is a HEAP table

- In db1 table rowcount = 24534283 tablesize=187 GB

- In db2 table rowcount = 13290417 tablesize 191 GB

- no blocking

- waittype observed for db2 query is "(5ms)PAGEIOLATCH_SH:db2:3(*)"

- Only difference is that, Initially when both databases are restored on to non-prod env, in db2 , application team has run below DELETE statement twice or thrice ( I don't know the exact number)

and then if they run above SELECT stmt, it runs over 30mins. My doubt is whether these batch DELETES here is causing any issues under the covers? can anyone shed some light here.

DELETE TOP (50000) EXT_data_Hist_TbL

WHERE CREATE_DATE < dateadd(year,-1,GETDATE())

- I tried updating the statistics ( there is only 1 though which is a system created one) and run the SELECT query, it didn't help.

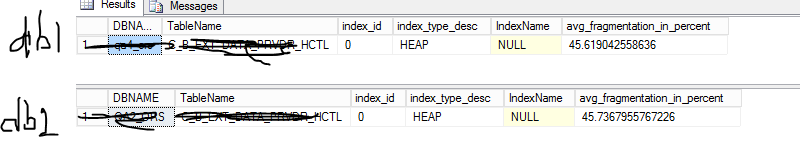

- index fragmentation in both the db's is almost same but rowcount differs.

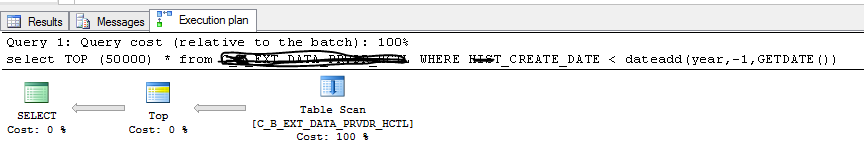

- The execution plan(s) i also same for both query.. i.e. Full table scan since it is a HEAP

How can we make this SELECT faster on db2 as well? Any inputs here please?

Thanks,

Sam

-

November 21, 2019 at 9:06 am

your table is not fragmented as it cant be, your table is a heap.

- add a clustered index

- then add a none clusted index on CREATE_DATE

***The first step is always the hardest *******

-

November 21, 2019 at 9:09 am

I reached out to app team, they said Its a history table. We asked the app team if we can create clustered idx on it instead of HEAP but they denied saying it was running in 5 secs in other database. so, to prove that all the data required for that good query is coming from cache and for the other, it is coming out from disk ? ( I think waitype plays a role if I am not wrong ). Is there a query to help that the data is coming from cache?

- on the other hand , I ensured that there is no activity on the server and tried to run the query multiple times on db which is running slow but the result is same. it takes 30-40 mins. I am not sure drop cleanbuffers will help in this case. Looks like the data pages are scattered across the data files. Will rebuilding the HEAP help here i.e. pages may not be continuous ? how to prove that data pages are not continuous and may be continuous in the other database ? if we can prove it probably I can rebuilt the heap and re-run the query?

Is deletes the problem? what TOP 50000 is doing something here? what will happen if we are deleting 50000 records everytime and what impact will have on the subsequent SELECT queries on such table?

Other thing is, can we use DropCleanBuffers of a specific database?

-

November 21, 2019 at 9:15 am

I know this is blindingly obvious, but put a clustered index on the table (maybe on create_date) - then recompare

you have no idea how many issues you can get into with heaps - especially with delete statements

MVDBA

-

November 21, 2019 at 11:44 am

How long does it take to do the select on just a small not null column with the same query on both databases? e.g.:

select TOP (50000) CREATE_DATE

from EXT_data_Hist_TbL

WHERE CREATE_DATE < dateadd(year,-1,GETDATE()) -

November 21, 2019 at 11:53 am

MVDBA (Mike Vessey) wrote:I know this is blindingly obvious, but put a clustered index on the table (maybe on create_date) - then recompare

you have no idea how many issues you can get into with heaps - especially with delete statements

Mike, Can you give me some examples of DELETES having adverse affect on Heap Tables ?

We are trying to convince the dev team to have a clustered idx but the only argument was , "why it is running fine on 1 database and why it is having issue when SELECT ran against the other copy of the databases where random deletes happened?

-

November 21, 2019 at 12:14 pm

if you delete data from a heap, the space might not be released and the pages remain allocated to the table, but unused

the problem gets worse, the more times you do it. you end up with 2 solutions - add a clustered index or run DBCC cleantable

there are dozens of articles around and lots of google items. but try this one

https://www.sqlservercentral.com/forums/topic/heap-tables-with-lots-of-unused-space

or the microsoft KB article

I try not to use heaps as it's not usually good DB design - hopefully Microsoft have gotten around to fixing the space release issue

MVDBA

-

November 21, 2019 at 12:15 pm

Jonathan AC Roberts wrote:How long does it take to do the select on just a small not null column with the same query on both databases? e.g.:

select TOP (50000) CREATE_DATE

from EXT_data_Hist_TbL

WHERE CREATE_DATE < dateadd(year,-1,GETDATE())on db1 - the query completes in 1 sec

on db2, it took 14 mins. whenever I am running always , its always showing physical reads high every time using sp_whoisactive stp.

-

November 21, 2019 at 1:48 pm

Hi All,

I tried to query just 500 rows. But I see a huge difference in Logical scan count. What does it basically say?

set statistics io on

select TOP (500) * from EXT_data_Hist_TbL

WHERE CREATE_DATE < dateadd(year,-1,GETDATE())

For "db1"

Table 'EXT_data_Hist_TbL'. Scan count 1, logical reads 500, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

For "db2"

Table 'EXT_data_Hist_TbL'. Scan count 1, logical reads 2048723, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

- This reply was modified 6 years, 6 months ago by vsamantha35.

-

November 21, 2019 at 1:59 pm

I'm assuming that rows are added roughly in CREATE_DATE order. That means you will find the earliest rows on db1 very quickly as no rows have been deleted.

On db2 you are deleting the earliest rows so making space at the beginning of your table. When new rows are inserted they will occupy the space that has been made free from deleted rows. This means you will have to read through more rows to find the earliest rows.

You should have a clustered index on every table. So just create one on the table, even if it's just on the CREATE_DATE column. It shouldn't add much overhead.

-

November 21, 2019 at 2:12 pm

the only thing that I can advise is to check all indexes are in place on both servers (maybe one is missing) - then look at your server settings -look at compatibility modes, cardinality estimation settings, query optimiser fixes setting, parameter sniffing etc

if it's logical reads then that's from cache

but I still advise putting the clustered index on

MVDBA

-

November 21, 2019 at 7:30 pm

Jonathan AC Roberts wrote:I'm assuming that rows are added roughly in CREATE_DATE order. That means you will find the earliest rows on db1 very quickly as no rows have been deleted.

On db2 you are deleting the earliest rows so making space at the beginning of your table. When new rows are inserted they will occupy the space that has been made free from deleted rows. This means you will have to read through more rows to find the earliest rows.

You should have a clustered index on every table. So just create one on the table, even if it's just on the CREATE_DATE column. It shouldn't add much overhead.

Hi Jonathan,

Just want to get more clarity on your statement "This means you will have to read through more rows to find the earliest rows."

So, u mean to say, in that deleted free space , they might have inserted data with higher range dates but since we have this filter condition "WHERE CREATE_DATE < dateadd(year,-1,GETDATE())" defined.

So, SQL Server may have to read more pages in order to fetch the rows satisfying above condition( i.e. earliest rows) , Is my understanding correct?

if this is case, we probably be able to convince the app team of why it is important to have table CI on this table and end up scanning less number of pages and there by improving the performance of the query.

Thanks,

Sam

-

Try doing

select TOP (50000) * from EXT_data_Hist_TbL

on each database, without anything in the WHERE or ORDER BY, and see how many reads it does.

-

November 21, 2019 at 8:31 pm

I get what you are saying. Thanks so much Jonathan and others for the help. I will share the results.

-

November 22, 2019 at 4:02 am

Have you considered just rebuilding the heap? You should also rebuild any non-clustered indexes, as well, to recover space and rebuild stats.

--Jeff Moden

RBAR is pronounced "ree-bar" and is a "Modenism" for Row-By-Agonizing-Row.

First step towards the paradigm shift of writing Set Based code:

________Stop thinking about what you want to do to a ROW... think, instead, of what you want to do to a COLUMN.Change is inevitable... Change for the better is not.

Helpful Links:

How to post code problems

How to Post Performance Problems

Create a Tally Function (fnTally)

Viewing 15 posts - 1 through 15 (of 15 total)

You must be logged in to reply to this topic. Login to reply