Need help with query where i need to aggregate an aggregate

-

May 24, 2019 at 9:37 am

Hi all,

I have an inherited system which I can't do much with so (for now) i'm stuck with what i've got.

Data is collected and inserted into a "storage" table at various times throughout the month which shows customers and how much storage they are consuming.

I need to report on the TOTAL maximum storage used for each customer per month (they can have multiple storage volumes). With the volumes, if they're using 4TB on the 1st of the month and then drop it to 2TB for the rest of that month, i'm only interested in SUM-ing the highest value of each datastore for that month.

The following query achieves this BUT, i want to be able to display multiple months side by side.

SELECT

c.name,

SUM(x.maxsize) As 'Tier 1 GB - Jan 19'

FROM

(SELECT

id,

MAX(size) AS maxsize,

customer

FROM

#storage

WHERE

timestamp >'20190101' AND

timestamp < '20190201' and tier = '1'

GROUP BY id, customer) x, #customer c

WHERE

c.id = x.customer

GROUP BY c.name

ORDER BY name;I've asked about something similar previously and using CASE was the answer, but i'm having trouble getting my head around this one as using the SUM and MAX together causes problems.

Help from your collective genius once again will be much appreciated!

Below is the table structure and some test data...

CREATE TABLE #customer(

[id] [int] NULL,

[name] [nchar](50) NULL,

[aliases] [nchar](120) NULL,

[prefix] [nchar](3) NULL,

[active] [int] NULL

)

CREATE TABLE #storage(

[id] [nchar](50) NOT NULL,

[customer] [int] NOT NULL,

[name] [nchar](120) NOT NULL,

[int] NOT NULL,

[tier] [tinyint] NOT NULL,

[timestamp] [datetime] NOT NULL,

)

INSERT INTO #customer (id,name,aliases,prefix,active)

VALUES (1,N'Inner City Unit',N'ICU',N'ICU',1)

INSERT INTO #customer (id,name,aliases,prefix,active)

VALUES (2,N'Acme Ltd',N'ACM',N'ACM',1)

INSERT INTO #customer (id,name,aliases,prefix,active)

VALUES (3,N'My Test Company',N'MTC',N'MTC',1)

/*** Jan 2019 ***/

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190103')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '8192', 2, '20190103')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190103')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190103')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '2048', 1, '20190103')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190103') -- increase

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190123')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '8192', 2, '20190123')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190125')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190125')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '3072', 1, '20190127') -- decrease

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190128')

/*** Feb 2019 ***/

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190201')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '8192', 2, '20190201')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190202')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190203')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '3072', 1, '20190203')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190205')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190217')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '8192', 2, '20190217')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190217')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190217')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '3072', 1, '20190218')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190218')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190126')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '3072', 2, '20190126') --- decrease

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190126')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190126')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '4096', 1, '20190127') -- increase

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190128')

/*** Mar 2019 ***/

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '4096', 1, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '3072', 2, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '2048', 1, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '2048', 1, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190303')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000001' ,1 ,N'ICU001', '2048', 1, '20190323')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000002' ,1 ,N'ICU001', '3072', 2, '20190323')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000020' ,2 ,N'ACM001', '8192', 1, '20190325') -- increase

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000021' ,2 ,N'ACM001', '8192', 2, '20190325')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

VALUES (N'D000030' ,3 ,N'MTC001', '4096', 1, '20190327')

INSERT INTO #storage (id, customer, name, size, tier,[timestamp])

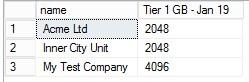

VALUES (N'D000031' ,3 ,N'MTC001', '4096', 2, '20190328')Running the original query gives me this :

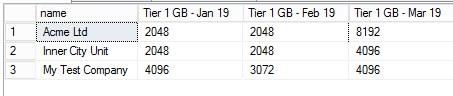

What i wish to produce is :

Thanks in advance!

- This topic was modified 7 years ago by stuart.davison 8016.

-

May 24, 2019 at 10:31 am

Hi Thom, yes.

Very strange, I still have on my screen where it was copied from and it exists there. Somehow went missing on the copy !

-

May 24, 2019 at 10:41 am

OK, personally, I would return a dataset like this then (I've guessed you want split by

tier, if not add aWHERE):SELECT c.[name],

DATEADD(MONTH, DATEDIFF(MONTH,0,s.timestamp),0) AS [Month],

s.tier,

MAX(s.size) AS MAxSize

FROM #customer C

JOIN #storage S ON C.id = S.customer

GROUP BY c.[name],

DATEADD(MONTH, DATEDIFF(MONTH,0,s.timestamp),0),

s.tierThen use your presentation layer to do the pivoting, such as using a Matrix in SSRS. If you really have to pivot it in your SQL, then you'll need to use a Dynamic Pivot.

Thom~

Excuse my typos and sometimes awful grammar. My fingers work faster than my brain does.

Larnu.uk -

June 7, 2019 at 2:31 pm

Data is collected and inserted into a "storage" table at various times throughout the month which shows customers and how much storage they are consuming.

I need to report on the TOTAL maximum storage used for each customer per month (they can have multiple storage volumes). With the volumes, if they're using 4TB on the 1st of the month and then drop it to 2TB for the rest of that month, I'm only interested in SUM-ing the highest value of each datastore for that month.

>> The following query achieves this BUT, I want to be able to display multiple months side by side. <<

Why do you want to use SQL to print a denormalized report? We never lmeant SQL to be used this way.

SELECT c._customer_name,

SUM(x.maxsize) AS “Tier 1 GB - Jan 19”

FROM

(SELECT customer_id, MAX(size) AS maxsize, customer_name

FROM Storage_Log

WHERE timestamp BETWEEN ‘2019-01-01' AND '2019-02-01'

AND storage_tier= 1

GROUP BY id, customer) AS X, Customers c

WHERE c.customer_id = x.customer_id

GROUP BY c.customer_ name;

>> Below is the table structure and some test data. <<

Thank you for trying to post DDL. But you got everything wrong. We need keys and you have none. There is no such crap as a generic “id” that changes data types and meaning from table to table; that is how non-SQL programmers fake non-relational record numbers. I guess that you meant the customer identifier.

An identifier cannot be a numeric since it is a nominal scale. There is no such crap as a generic “name” in RDBMS. We do not use assembly language flags. Columns are scalars, so “aliases” is violation of ISO-11179 rules; you cannot put multiple values in a column. Also, TIMESTAMP is both too vague and a reserved word in Standard SQL.

I would love to see the careful research that showed a fixed length fifty character Chinese string is a valid customer name. Prefixes do not float around by themselves-- what does it prefix?

These are problems you created with your local tables. My guess is that you needed something like this:

CREATE TABLE Customers

(customer_id CHAR(5) NOT NULL PRIMARY KEY, --- required, not optional

customer_name VARCHAR(50) NOT NULL,

customer_alias VARCHAR(10) NOT NULL) ;

Why did you use the old Sybase table construction syntax? We have had ANSI/ISO table constructors for years.

INSERT INTO Customers

VALUES

(‘00001’, 'Inner City Unit', 'ICU'),

(‘00002’, 'Acme Ltd', 'ACM'),

(‘00003’, 'My Test Company', 'MTC');

Why are you adding redundancy to your schema? Where are the REFERENCES clauses that preserve data integrity and relates the storage to the customers? I cannot figure out your data in terms of a valid schema. Here is what I came up with:

CREATE TABLE Storage_Log

(customer_id CHAR(5) NOT NULL

REFERENCES Customers, --- this is what the R in RDBMS means!

storage_tier SMALLINT NOT NULL,

storage_date DATE NOT NULL

DEFAULT CURRENT_TIMESTAMP,

PRIMARY KEY (customer_id, storage_tier, storage_date)

);

Can you correct this? Proper column names, constraints, etc. please.

>> Running the original query gives me this : <<

Posting pictures is consider very rude.

Please post DDL and follow ANSI/ISO standards when asking for help.

-

June 7, 2019 at 2:38 pm

I think you've forgotten about the difference between Sample data and Production data, Joe. The OP is clearly using Temporary tables here, not persisted tables, and their attempts should be applauded not told they're (completely) "wrong".

The images at the end at to show what results they got, not sample data. Please don't demotivate the users to ask questions on the SSC community when this question was very well formed. The only person being rude here is you.

Also, it seems you forgot to format your code in the code snippet Joe, making it impossible to read; please do use the formatting tools when posting as otherwise it makes your replies much harder for everyone to use.

Thanks.

Thom~

Excuse my typos and sometimes awful grammar. My fingers work faster than my brain does.

Larnu.uk -

June 7, 2019 at 3:13 pm

Joe,

In addition to what Thom said, the OP also stated that he is stuck with what he has at this time. Sometimes you don't have the option to fix things that are wrong and have to deal with what you are dealt.

You really need to learn what tact is and stop beating people over the head because you think you are always right and that we should bow down to your superior knowledge and wisdom. To be honest, just go away.

-

June 7, 2019 at 3:19 pm

I have been doing this for over 30 years. I found that people who post bad SQL on forums also write bad code in production. As one guy told me ~25 years ago, "Nobody ever told me that everything isn't VARCHAR(50) and NULL-able!" I am the one who tells the kids when they are screwing up, so they can learn in a forum and not a production system. I started my career in Defense Systems; if you screw up, you kill the wrong people.

I do not expect all of the constraints to be in place, but we need a key in each table. Not an option. The data types need to be correct (recently I saw someone using FLOAT without any idea how floating point math works). Normalization to just 3NF is easy with sample data. Nothing fancy, just a skeleton is fine.

Please post DDL and follow ANSI/ISO standards when asking for help.

-

June 7, 2019 at 3:30 pm

jcelko212 32090 wrote:I have been doing this for over 30 years. I found that people who post bad SQL on forums also write bad code in production. As one guy told me ~25 years ago, "Nobody ever told me that everything isn't VARCHAR(50) and NULL-able!" I am the one who tells the kids when they are screwing up, so they can learn in a forum and not a production system. I started my career in Defense Systems; if you screw up, you kill the wrong people. I do not expect all of the constraints to be in place, but we need a key in each table. Not an option. The data types need to be correct (recently I saw someone using FLOAT without any idea how floating point math works). Normalization to just 3NF is easy with sample data. Nothing fancy, just a skeleton is fine.

You can teach, actually mentor, without being a bully. You are just a bully, plain and simple.

I really don't care where you started your career, it doesn't excuse how you treat people.

-

June 7, 2019 at 3:32 pm

jcelko212 32090 wrote:I have been doing this for over 30 years.

So does this mean the system I use at the office is exempt as it was designed (and is still running) on an operating system created in 1978?

Yes, poor design choices exist. Yes, they should be avoided when designing, or when you can change them, but not everyone has that control (or luxury) some of us are forced to use poorly designed systems; perhaps due to regulatory and compliance reasons.

Knowing how to do things correctly is important, but knowing what to do and how to when it what we have doesn't follow even 1NF is just as important.

Either way, there's a good way to educate people, and a bad way; what you posted wasn't education is was just insulted the poor OP's integrity and attempts. You won't teach anyone anything by insulted them because they won't listen/read what you say. So please, take the time to listen/read this and change the way you write your posts. Make them friendlier and constructive, not insulting and negative; you'll find that the people you are trying to educate will be far more willing to read/listen to what you say.

Thom~

Excuse my typos and sometimes awful grammar. My fingers work faster than my brain does.

Larnu.uk -

June 7, 2019 at 5:15 pm

The poster can control the @ and # tables he creates, can't he? Data element names, etc. are also under their control. Yes, you can get stuck with crap DDL and not be able to replace it. But at least know it is crap DDL and learn how to do it right, even if you cannot fix it. I hate hearing "Nobody ever told me that.." from someone when get I on a forum. I also found that trying to be "friendlier and constructive" does not work decades ago. All you get is "But it works with a kludge, so it is okay, right?" and they post the same errors again and again.

Please post DDL and follow ANSI/ISO standards when asking for help.

-

June 9, 2019 at 7:37 pm

jcelko212 32090 wrote:The poster can control the @ and # tables he creates, can't he? Data element names, etc. are also under their control. Yes, you can get stuck with crap DDL and not be able to replace it. But at least know it is crap DDL and learn how to do it right, even if you cannot fix it. I hate hearing "Nobody ever told me that.." from someone when get I on a forum. I also found that trying to be "friendlier and constructive" does not work decades ago. All you get is "But it works with a kludge, so it is okay, right?" and they post the same errors again and again.

It's good that you've slid down to their level, Joe. Think about it.

--Jeff Moden

RBAR is pronounced "ree-bar" and is a "Modenism" for Row-By-Agonizing-Row.

First step towards the paradigm shift of writing Set Based code:

________Stop thinking about what you want to do to a ROW... think, instead, of what you want to do to a COLUMN.Change is inevitable... Change for the better is not.

Helpful Links:

How to post code problems

How to Post Performance Problems

Create a Tally Function (fnTally) -

June 12, 2019 at 1:59 pm

jcelko212 32090 wrote:An identifier cannot be a numeric since it is a nominal scale.

Heh... you changed your form of rubbish. And, yes, you CAN do some very necessary math with it not to mention that it's usually the only thing available for an Employee, Customer, or Person table and you have yet to prove otherwise. 😉

--Jeff Moden

RBAR is pronounced "ree-bar" and is a "Modenism" for Row-By-Agonizing-Row.

First step towards the paradigm shift of writing Set Based code:

________Stop thinking about what you want to do to a ROW... think, instead, of what you want to do to a COLUMN.Change is inevitable... Change for the better is not.

Helpful Links:

How to post code problems

How to Post Performance Problems

Create a Tally Function (fnTally) -

August 5, 2019 at 5:53 pm

Try this

Declare @cols nvarchar(Max);

Declare @query nvarchar(Max);

Declare @tier tinyint;

Set @cols =

STUFF

(

(select ',' + Quotename(a.lbl)

from

(

select distinct DATEADD(DAY, -DAY(timestamp)+1, timestamp) ord

, 'Tier 1 GB - '+ cast(DATENAME(month,timestamp) as NCHAR(3)) + ' ' + Right(cast(Year(timestamp) as char(4)),2) lbl

from #storage a

) a

order by a.ord asc

FOR XML PATH(''), TYPE).value('.', 'NVARCHAR(MAX)') ,1,1, '');

set @query =N'

select p.name

, ' + @cols + '

from

(

select a.name, a.size

,''Tier 1 GB - '' + cast(DATENAME(month,timestamp) as NCHAR(3)) + '' '' + Right(cast(Year(timestamp) as char(4)),2) lbl

from #storage a

, #customer b

where a.customer = b.id

and tier = @tier

) a

pivot

(

max(size)

For lbl in (' + @cols + ')

)p ;'

set @tier = 1;

exec sp_executesql @query , N'@tier tinyint', @tier;Let me know if you have any questions.

-

August 5, 2019 at 8:59 pm

No, Jeff, an identifier is by its very nature measured on a nominal scale. It is not an interval scale. It is not a ratio scale. It is not a ranking or categorical scale. It names a single entity. It is not a quantity because to quote "The North American Arithmetic; part the third (1834)" numbers are the expression of quantity. This quantity can be either a multitude or a magnitude. If an identifier was a numeric value, it could be diminished or increased. We would not care how it is displayed.

What three times your credit card "number" means? Don't be fooled just because it's a string of digits; that doesn't make it a number. Likewise, representing a number and another system of digits doesn't make it into an identifier.

Can you give me that makes sense numeric value? Do all the arithmetic operations make sense on it? Notice that this does not include check digits and hashing. These algorithms have to pull individual digits out from the string that holds the identifier to do the math on them after they been cast to a numeric data type.

Please post DDL and follow ANSI/ISO standards when asking for help.

Viewing 15 posts - 1 through 15 (of 29 total)

You must be logged in to reply to this topic. Login to reply