SQL Server 2017 has introduced several changes to the way that query plans work in SQL Server. This article is the second in a series that will cover these changes. The other articles in the series are:

- Automatic Plan Correction

- Adaptive Query Processing – Batch Mode Memory Grant Feedback (this article)

- Adaptive Query Processing – Batch Mode Adaptive Join

- Adaptive Query Processing – Interleaved Execution

Adaptive Query Processing

Adaptive Query Processing deals with means to improve query performance. Introduced in SQL Server 2017, Adaptive Query Processing currently includes these features:

- Batch Mode Memory Grant Feedback

- Batch Mode Adaptive Join

- Interleaved Execution

Generally speaking, query optimization generates a set of query plans, during which various plan options are considered and the plan with the lowest cost is used. The query then runs the plan generated by the optimization process. Sometimes, the generated plan just isn’t an optimal plan for the query being run – a different number of rows being returned can drastically change the best plan for a query.

Memory Grants (background)

When a query is being run, it acquires memory necessary to perform its work – in particular to store temporary row data in sorting and join operations. When calculating the memory required, there are two memory grants that are calculated: Required Memory and Additional Memory.

The required memory is the minimum amount of memory needed to run sort and hash join operations. The additional memory is the amount of memory needed to temporarily store all of the rows in memory. The additional memory is calculated by the size of the row and the number of rows, as determined by the cardinality estimate. If parallelism is in play, there is additional required memory that is required.

For more detailed background information about memory grants, see https://blogs.msdn.microsoft.com/sqlqueryprocessing/2010/02/16/understanding-sql-server-memory-grant/

When the memory grant is wrong

We see from the above section that the additional memory is determined in part by the estimated number of rows to be returned. For a parameterized query, the plan is compiled with the parameter supplied to the query. If this parameter happens to be atypical for your workload, then the memory grant in the plan may not be appropriate. Subsequent runs of the cached plan, with different parameters, may have different memory grant needs.

If the memory grant in the cached plan is too small, this will result in spills to tempdb, or in other words, the query will spill to disk. Even if tempdb is on a SSD, this is still an expensive operation.

If the memory grant in the cached plan is too large, then concurrency can be impacted while the query waits in the queue until enough memory is available to run.

In either case, having an inadequate memory grant is not desired.

Batch Mode Memory Grant Feedback

Enter the Batch Mode Memory Grant Feedback, introduced in SQL Server 2017. This process provides a feedback mechanism to the cached plan for the actual amount of memory used, and updates the cached plan for future use. The first thing to talk about is “Batch Mode”. In Batch Mode, operators process the data in groups of rows. This is a complicated way of saying that the query needs to use a ColumnStore index somewhere. Without a ColumnStore index, the query will be processed in Row Mode.

The feedback mechanism is triggered when one of the following occurs:

- A spill to disk has occurred

- The memory grant is at least two times greater than the actual memory used.

When one of these conditions occurs, the memory grant is recalculated, and the cached plan is updated with the new memory grant values. Since this updates the cached plan, this feature is designed for workloads that repeat the SQL statement, usually with different parameter values.

Seeing Batch Mode Memory Grant Feedback in action

For this demo, we will be using the WideWorldImportersDW database, available from Microsoft’s github for SQL Server samples. In this database, we’re going to create a new stored procedure.

ALTER DATABASE [WideWorldImportersDW] SET COMPATIBILITY_LEVEL = 140;

GO

USE WideWorldImportersDW;

GO

ALTER DATABASE SCOPED CONFIGURATION CLEAR PROCEDURE_CACHE;

GO

--DROP PROCEDURE IF EXISTS [FactOrderByLineageKey]

-- Intentionally forcing a row underestimate

CREATE OR ALTER PROCEDURE [FactOrderByLineageKey]

@LineageKey INT

AS

SELECT

[fo].[Order Key], [fo].[Description]

FROM [Fact].[Order] AS [fo]

INNER HASH JOIN [Dimension].[Stock Item] AS [si]

ON [fo].[Stock Item Key] = [si].[Stock Item Key]

WHERE [fo].[Lineage Key] = @LineageKey

AND [si].[Lead Time Days] > 0

ORDER BY [fo].[Stock Item Key], [fo].[Order Date Key] DESC

OPTION (MAXDOP 1);

GONow, turn on the actual execution plan (Ctrl+M), and run the following query. This is for a lineage key that doesn’t have any rows:

EXEC [FactOrderByLineageKey] 8;

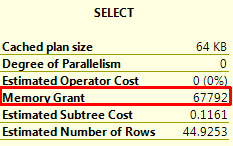

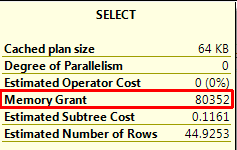

Note the execution plan and the memory grant for the query:

Since there are no records that match the parameter, the query returns immediately. Now, run the following query a few times, looking at the plan to see the impact on spills, memory grant size, and run time:

EXEC [FactOrderByLineageKey] 9;

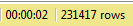

When this query is run with this parameter, it returns 231,417 rows, and takes five seconds (all database files are on SSD drives).

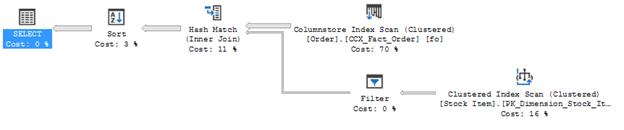

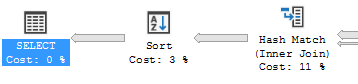

The query plan looks like this:

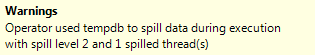

Let’s look at those warnings. For the Hash Match operator:

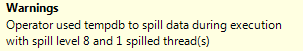

The Sort operator has this warning:

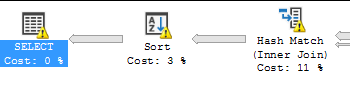

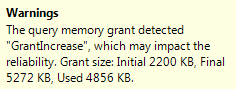

And the Select operator has this warning:

We definitely have a problem that hopefully the Batch Mode Memory Grant Feedback can help us with. It should have updated the cached plan with the new memory grant, so let’s run this query again and look at the execution plan. The first thing to note is the time required to run this query:

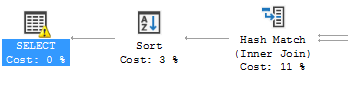

Just in duration, a 60% improvement. Let’s look at the query plan:

No warnings anywhere. Which means that the memory was adequate to prevent the spills to disk. The final memory grant is:

In conclusion, it can be seen that the Batched Mode Memory Grant Feedback updated the cached plan with a better sized memory grant, which prevents the spills to disk that were occurring with the Hash Match and Sort operators.

Extended Events for Batch Mode Memory Grant Feedback

As with all new things being added to SQL Server, Extended Events (XE) are used to monitor the new features. The XE events added for Batch Mode Memory Grant Feedback are spilling_report_to_memory_grant_feedback, memory_grant_feedback_loop_disabled and memory_grant_updated_by_feedback.

The entire query plan

Above, I've only shown the relevant part of the query plan. I'm sure that there are some that want to see the entire query plan. Just for those folks, here it is: