(2020-Dec-21) While working with Azure Functions, which provide a serverless environment to run my code, I’m still struggling to understand how it all actually works. Yes, I admit, there is no bravado in my conversation about Function Apps: I really don’t understand what happens behind the scenes when a front-end application submits a request to execute my function code in a cloud environment, or how this request is processed by the durable function framework (starter => orchestrator => activity).

Azure Data Factory provides an interface to execute your Azure Function, and if you wish, the output of your function code can be further processed in your Data Factory workflow. The more I work with this couple, the more I have to trust that a function app can behave differently under the various Azure service plans available to me. The more parallel Azure Function requests I submit from my Data Factory, the more trust I put into my Azure Function App that it will properly and gracefully scale out from “Always Ready instances”, to “Pre-warmed instances”, and to “Maximum instances” available for my Function App. The supported runtime version for PowerShell durable functions, along with the data exchange possibilities between an orchestrator function and an activity function, requires a lot of trust too, because the latter is still not well documented.

My current journey of using Azure Functions in Data Factory has been marked with two milestones so far:

- Initial overview of what is possible - http://datanrg.blogspot.com/2020/04/using-azure-functions-in-azure-data.html

- Further advancement to enable long-running function processes and keep data factory from failing - http://datanrg.blogspot.com/2020/10/using-durable-functions-in-azure-data.html

Photo by Jesse Dodds on Unsplash

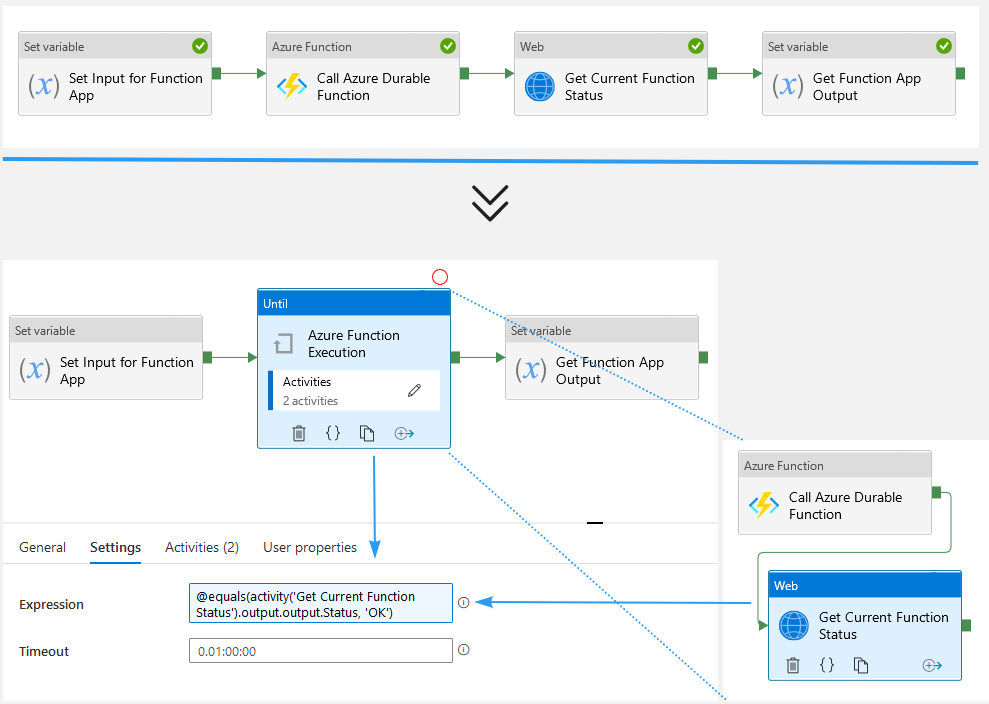

Recently I realized that the initially proposed HTTP polling of a long-running function process in a data factory can be simplified even further.

An early version (please check the 2nd blog post listed above) suggested that I execute a durable function orchestrator, which would eventually execute a function activity. Then I would check the status of my function app execution by polling the statusQueryGetUri URI from my data factory pipeline; if its status was not Completed, I would poll it again.

In reality, the combination of an Until loop container with Wait and Web call activities can be replaced by a single Web call activity. The reason is simple: when you initially execute your durable Azure Function (even if it will take minutes, hours, or days to finish), it almost instantly responds with HTTP status code 202 (Accepted). The Azure Data Factory Web activity will then poll the statusQueryGetUri URI of your Azure Function on its own until the HTTP status code becomes 200 (OK). The Web activity will keep running this step for as long as necessary, or until the Azure Function timeout is reached; this timeout varies between pricing tiers - https://docs.microsoft.com/en-us/azure/azure-functions/functions-scale#timeout
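The 202-then-200 behaviour described above can be illustrated with a small Python sketch. This is not how the Data Factory Web activity is implemented; it only shows the general "accepted, then poll" pattern. The `fetch` callable, the `poll_until_ok` name, the interval, and the simulated responses are all assumptions made for illustration, so the example runs without a real function app.

```python
import time
from typing import Callable, Tuple


def poll_until_ok(
    fetch: Callable[[str], Tuple[int, dict]],
    status_uri: str,
    interval_seconds: float = 5.0,
    max_attempts: int = 100,
) -> dict:
    """Poll status_uri until it answers 200 (OK); 202 means 'still running'."""
    for _ in range(max_attempts):
        status_code, body = fetch(status_uri)
        if status_code == 200:
            return body
        if status_code != 202:
            raise RuntimeError(f"Unexpected HTTP status {status_code}")
        time.sleep(interval_seconds)
    raise TimeoutError(f"No 200 (OK) after {max_attempts} attempts")


# Simulated status endpoint (hypothetical): two 202 answers, then a 200.
_responses = iter([
    (202, {"runtimeStatus": "Running"}),
    (202, {"runtimeStatus": "Running"}),
    (200, {"runtimeStatus": "Completed", "output": "done"}),
])


def fake_fetch(uri: str) -> Tuple[int, dict]:
    return next(_responses)


result = poll_until_ok(fake_fetch, "https://example.invalid/status", interval_seconds=0)
print(result["runtimeStatus"])
```

In a real pipeline none of this code is needed: the single Web activity does the waiting for you.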

The structure of the statusQueryGetUri URI is simple: it has a reference to your Azure Function App along with the execution instance GUID. How exactly Azure Data Factory polls this URI is unknown to me; it's all about trust, please see the beginning of this blog post 🙂

https://<your-function-app>.azurewebsites.net/runtime/webhooks/durabletask/instances/<GUID>?taskHub=DurableFunctionsHub&connection=Storage&code=<code-value>
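To make the parts of this URI easier to see, here is a minimal Python sketch that splits it with the standard library. The function name `describe_status_uri`, the sample host, the instance id, and the `PLACEHOLDER` code value are made up for illustration; only the overall shape of the URI comes from the example above.

```python
from urllib.parse import urlsplit, parse_qs


def describe_status_uri(uri: str) -> dict:
    """Break a statusQueryGetUri-shaped URI into its recognisable parts."""
    parts = urlsplit(uri)
    query = parse_qs(parts.query)
    return {
        "function_app_host": parts.netloc,
        # The last path segment is the execution instance id (a GUID).
        "instance_id": parts.path.rstrip("/").split("/")[-1],
        "task_hub": query["taskHub"][0],
        "connection": query["connection"][0],
        "has_code": "code" in query,
    }


# Hypothetical sample values, not a real function app or key.
sample_uri = (
    "https://my-function-app.azurewebsites.net/runtime/webhooks/durabletask/"
    "instances/0123abcd?taskHub=DurableFunctionsHub&connection=Storage&code=PLACEHOLDER"
)
info = describe_status_uri(sample_uri)
print(info["function_app_host"], info["instance_id"])
```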

That was the introduction; now the real blog post begins. Naturally, you can execute multiple instances of your Azure Function at the same time (event-driven processes or front-end parallel execution steps), and the Azure Function App will handle them. A requirement in my recent work project was that, even when parallel execution happens, a certain operation still needed to be throttled and artificially sequenced. Again, it was a special use case, and it may not come up in your projects.

I tried to put such throttling logic inside my durable Azure Function activity. However, with many concurrent requests to execute this one particular operation, my function app used up all of the available instances, and while those instances were active and running, my function became unavailable to the existing data factory workflows.

There is a good wiki page about Writing Tasks Orchestrators that states, “Code should be non-blocking i.e. no thread sleep or Task.WaitXXX() methods.” So, that was my aha moment to move the throttling logic out of my Azure Function activity and into the data factory.

Now, when an instance of my Azure Function finds that it can’t proceed further because another operation is running, it completes with HTTP status code 200 (OK), releases the Azure Function instance, and also provides an additional execution output status saying that it’s not really “OK” and needs to be re-executed.
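The "return right away instead of waiting" idea can be sketched in Python as follows. This is an illustration only, not my actual function code: the in-process `threading.Lock` is a hypothetical stand-in for whatever check tells the function that the operation is already running (across several function instances you would need some shared state instead), and the `"Busy"` status value and the `run_throttled_operation` name are invented for the example.

```python
import threading
from typing import Any, Callable

# Hypothetical stand-in for "is the throttled operation already running?".
_operation_lock = threading.Lock()


def run_throttled_operation(work: Callable[[], Any]) -> dict:
    """Run work() if the operation is free; otherwise return at once."""
    # Non-blocking attempt: never sleep or wait inside the function.
    if not _operation_lock.acquire(blocking=False):
        # The call itself succeeded, but the custom status says "try again".
        return {"Status": "Busy", "Output": None}
    try:
        return {"Status": "OK", "Output": work()}
    finally:
        _operation_lock.release()


# Simulate another execution holding the operation.
_operation_lock.acquire()
busy_result = run_throttled_operation(lambda: 42)
_operation_lock.release()

# Now the operation is free again.
ok_result = run_throttled_operation(lambda: 42)
print(busy_result["Status"], ok_result["Status"])
```

The key point is that the busy call finishes immediately and frees its instance, so the waiting happens in the data factory rather than in the function app.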

The Until loop container now handles two scenarios:

- HTTP Status Code 200 (OK) and custom output Status "OK", then it exits the loop container and proceeds further with the "Get Function App Output" activity.

- HTTP Status Code 200 (OK) and custom output Status is not "OK" (you can provide more descriptive info of what your not OK scenario might be), then execution continues with another round of "Call Durable Azure Function" & "Get Current Function Status".
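The two scenarios above amount to a simple retry loop, which can be sketched in Python like this. In the data factory this logic lives in the Until container, not in code; the `call_function` callable, the wait time, the round limit, and the simulated outputs are assumptions for illustration.

```python
import time
from typing import Callable


def until_status_ok(
    call_function: Callable[[], dict],
    wait_seconds: float = 30.0,
    max_rounds: int = 20,
) -> dict:
    """Repeat the call until the custom output Status is "OK"."""
    for _ in range(max_rounds):
        # Stands for "Call Durable Azure Function" + "Get Current Function Status".
        output = call_function()
        if output.get("Status") == "OK":
            return output  # exit the loop, continue with "Get Function App Output"
        time.sleep(wait_seconds)  # not OK yet: wait, then go for another round
    raise TimeoutError(f"Status was never OK after {max_rounds} rounds")


# Simulated function outputs (hypothetical): busy twice, then OK.
_outputs = iter([
    {"Status": "Busy"},
    {"Status": "Busy"},
    {"Status": "OK", "Output": "finished"},
])

final_output = until_status_ok(lambda: next(_outputs), wait_seconds=0)
print(final_output["Output"])
```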