I found myself in a situation where I needed to modify temporal tables. We do this outside business hours because we don’t want clients changing data while versioning is off. Who wants to wait until off-hours to run scripts if you could devise a way to do it during work hours? This post walks you through how to change temporal tables without losing track of what is changing.

What are Temporal Tables?

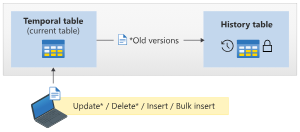

According to Microsoft, temporal tables are designed to keep a full history of data changes, allowing easy point-in-time analysis. The current table contains the current value for each row. The history table contains each previous value (the old version) for each row, if any, and the start time and end time for the period for which it was valid.

Use cases for temporal tables include:

- Auditing all data changes and performing data forensics when necessary

- Reconstructing the state of the data as of any time in the past

- Calculating trends over time

- Maintaining a slowly changing dimension for decision support applications

- Recovering from accidental data changes and application errors

My Situation

My first message to you is to never be in this situation. What is this situation? Using temporal tables for reporting. If you use the temporal table for reporting, you may have to clean up erroneous data in the temporal table. We are building a data warehouse to move reporting out of OLTP databases.

I would never use a temporal table for reporting. Now that we have to regularly fix data in temporal tables, I can see why I would never do that. You don’t want to miss data changing in the current table while table versioning is off. However, you must turn off versioning to change temporal table data.

You can block changes to the current table while changing the temporal table to avoid missing changes to the current table. This means no one else can change any data while you run the transaction to make your data changes because you are blocking on purpose. This does not scale. We have a very small environment. Also, turning off temporal tables is as easy as dropping an index. However, it takes time to turn it back on, just like adding an index takes time.

Setup The Scenario

Let’s set up a temporal table with the following script:

-- Create the WebsiteUserInfo table with auto-incrementing ID

CREATE TABLE WebsiteUserInfo

(

[ID] INT IDENTITY(1,1) NOT NULL PRIMARY KEY,

[UserID] INT NOT NULL,

[UserName] NVARCHAR(100) NOT NULL,

[PagesVisited] INT NOT NULL,

[ValidFrom] DATETIME2 (0) GENERATED ALWAYS AS ROW START,

[ValidTo] DATETIME2 (0) GENERATED ALWAYS AS ROW END,

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

)

WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = dbo.WebsiteUserInfoHistory));

-- Generate 100,000 rows for the table

DECLARE @Counter INT = 1;

WHILE @Counter <= 100000

BEGIN

INSERT INTO WebsiteUserInfo (UserID, UserName, PagesVisited)

VALUES (@Counter, 'User ' + CAST(@Counter AS NVARCHAR), @Counter);

SET @Counter = @Counter + 1;

ENDNow we have the table and some data in place. Depending on your SQL Server/Azure SQL database specs, that data might take a while to load. I ran this on Azure SQL Basic, and it took a while. I wanted to add enough rows to make this a somewhat sizable table, not just a few rows.

Modify Your Current Table and Its History Table

We will step through the entire transaction block statement by statement to test whether this works. You could run it all at once, but we want to see the lock blocking the insert to prove this blocks it. Start by executing the following query in one query window:

-- Assuming you want to delete rows with ID values 1 to 10

BEGIN TRANSACTION

DELETE FROM WebsiteUserInfo WITH (TABLOCK)

WHERE ID IN (1, 2, 3, 4, 5, 6, 7, 8, 9, 10);

After you execute the begin transaction and first delete statement above, you will want to run your insert statement on the current table. Execute the following query in another query window:

-- Generate 100,000 rows for the table

DECLARE @Counter INT = 1;

WHILE @Counter <= 100000

BEGIN

INSERT INTO WebsiteUserInfo (UserID, UserName, PagesVisited)

VALUES (@Counter, 'User ' + CAST(@Counter AS NVARCHAR), @Counter);

SET @Counter = @Counter + 1;

ENDNext, we can run the rest of the block in the first query window with the BEGIN TRANSACTION statement. The rest of the query block is included below:

ALTER TABLE [dbo].[WebsiteUserInfo]

SET (SYSTEM_VERSIONING = OFF)

GO

DELETE FROM WebsiteUserInfoHistory

WHERE ID IN (1, 2, 3, 4, 5, 6, 7, 8, 9, 10);

ALTER TABLE [dbo].[WebsiteUserInfo]

SET

(SYSTEM_VERSIONING = ON (HISTORY_TABLE = [dbo].[WebsiteUserInfoHistory]));

COMMIT TRANSACTION Once you commit the transaction, that will free up the TABLOCK on the current table and allow inserts in your other query window. With the current table and its history table all in the same transaction, you will prevent other users from changing data in the current table. This way, you won’t miss changes logged to the history table. This will cause blocking, though, so keep that in mind if you are worried about excessive blocking during business hours. Our tables are small, so it’s not an issue for us, and it’s less than 10 seconds.

For the Future

In the future, you could run the entire transaction without stepping through each statement. Note: You need the GO after the ALTER TABLE statement to turn versioning off. You will get an error without that GO when executing the transaction without stepping through each statement. The entire statement is included below:

-- Assuming you want to delete rows with ID values 1 to 10

BEGIN TRANSACTION

DELETE FROM WebsiteUserInfo WITH (TABLOCK)

WHERE ID IN (1, 2, 3, 4, 5, 6, 7, 8, 9, 10);

ALTER TABLE [dbo].[WebsiteUserInfo]

SET (SYSTEM_VERSIONING = OFF)

GO

DELETE FROM WebsiteUserInfoHistory

WHERE ID IN (1, 2, 3, 4, 5, 6, 7, 8, 9, 10);

ALTER TABLE [dbo].[WebsiteUserInfo]

SET

(SYSTEM_VERSIONING = ON (HISTORY_TABLE = [dbo].[WebsiteUserInfoHistory]));

COMMIT TRANSACTION Conclusion

As I mentioned, if your temporal table is large, this will be a terrible plan for you. However, this code will work if you have small temporal tables. Also, I recommend not reporting on temporal tables because they aren’t meant to be modified if reporting data needs to change to reflect an accurate portrayal of the data.

The post Temporal Table Data Fixes appeared first on sqlkitty.