(2022-Jan-27) When Hogarth, a nine-year-old boy, a character from “The Iron Giant” movie, met a 50-foot tall alien robot, whom he eventually befriended, he didn't know about a very special characteristic of that robot. If anything was broken inside then this mechanical machine could fix and reassemble itself back to the original state. I don’t think this is the main reason for the high IMDb rating of this movie, but the robot’s feature to repair himself has been demonstrated a few times during that movie.

Photo by Castorly Stock from Pexels

There are many metaphorical “giants” when it comes to a SQL server development practice, starting from the Microsoft data platform itself, both cloud and on-prem, along with automation to deploy your SQL Server projects.

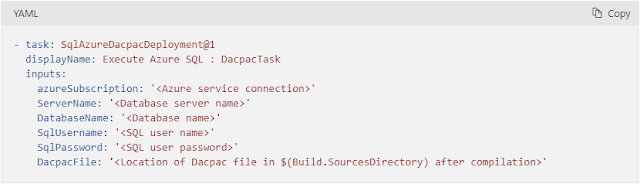

A very simple step to deploy your Azure SQL database can be executed with the help of [SqlAzureDacpacDeployment@1] Azure DevOps task. This task works with a packaged version of your database solution, formally called DACPAC file, that holds the definition of all tables, views, stored procedures, and other database objects.

Behind a simplicity to define all required and optional parameters to execute a deployment task of your DACPAC-wrapped SQL Server project, lies a massive but very calculated effort to bring your SQL code changes incrementally. If it’s a brand new deployment, then your whole DACPAC solution will materialize in a destination SQL Server environment; and further deployment would only bring incremental changes: DACPAC content will be compared with the target SQL Server environment and only delta changes will be selected for a next deployment automatically.

I’ve seen the [SqlAzureDacpacDeployment@1] Azure DevOps task performing well by simply adding database objects or altering existing tables as well as very complex table index or constrain alterations. This task works really well! Here is more information about it - https://docs.microsoft.com/en-us/azure/devops/pipelines/tasks/deploy/sql-azure-dacpac-deployment?view=azure-devops

The other gem of SQL Server development is temporal tables, a feature introduced in SQL Server 2016 and it helps to automatically collect all data changes from your 'main' table and preserve them in a separate “historical” table. I would describe this as a combination of Type 1 & Type 2 of Slowly Changing Dimensions (SCD), basically turning it into Type 4 SCD. However, with the SQL Server temporal tables, historical table data is updated automatically and doesn’t require any manual data modifications.

Yes, some limitations can be faced while working with temporal tables in a SQL Server database: https://docs.microsoft.com/en-us/sql/relational-databases/tables/temporal-table-considerations-and-limitations?view=sql-server-ver15

However, use cases scenarios and additional benefits may attract more adoption of these system-versioned temporal tables: https://docs.microsoft.com/en-us/sql/relational-databases/tables/temporal-table-usage-scenarios?view=sql-server-ver15

- Data audit

- Point-in-time analysis

- Anomaly detection

- Slowly-changing dimensions

- Repairing row-level data corruption

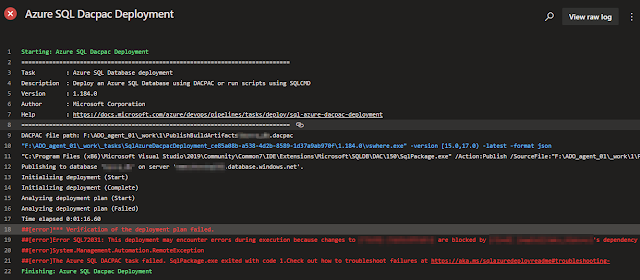

Here is one thing that still doesn’t work well when you try to alter an existing temporal table and run this change through the [SqlAzureDacpacDeployment@1] DevOps task, whether this change is to add a new column or modify existing attributes within the table. Your deployment will fail with the “This deployment may encounter errors during execution because changes to ... are blocked by ...'s dependency in the target database” error message.

This is the built-in functionality of the SQL Server temporal tables and before altering any table columns, the history tracking option needs be to turned off by setting the SYSTEM_VERSIONING to OFF. More details can be found here: https://docs.microsoft.com/en-us/sql/relational-databases/tables/changing-the-schema-of-a-system-versioned-temporal-table?view=sql-server-ver15#important-remarks

In general, a temporal table can be updated in the following 3 steps:

- Turn off table system versioning

- Make table changes

- Turn on table system versioning

- Manually perform Step 1 in your target database environment for a table that you need to change and then re-run failed DACPAC deployment task

- Include an additional deployment SQL step to alter all temporal tables and turn off their system versioning, then run DACPAC deployment step and turn on system versioning for all temporal tables in your database after this.

- Be more diligent with the previous step and try to collect a list of temporal tables that might be changed and included in your latest build, then dynamically turn on and off system versioning for those tables only.

The last possible workaround leads me to a thought that all these multiple sub-steps could be included in the next version of the [SqlAzureDacpacDeployment@1] Azure DevOps task.

Most definitely, this Azure DevOps “giant” won’t be able to fix itself, and my only hope is that Microsoft could find time to improve it.