(2021-Jun-30) One of my university professors liked to tell us a quote, “The Sleep of Reason Produces Monsters”, in a way to help us, his students, to stay active in our thinking process. I’m not sure if Francisco Goya, had a similar aspiration when he was creating his artwork with the same name.

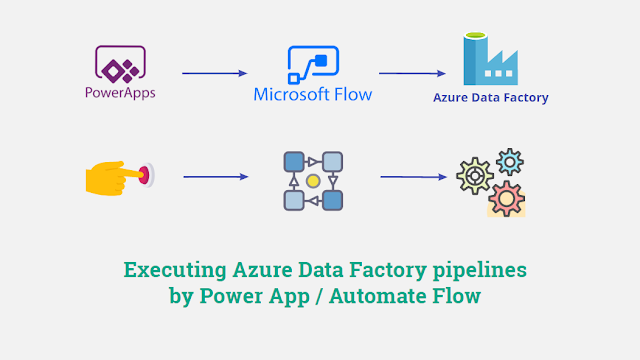

So, let me explain my reasons to create a solution to trigger Azure Data Factory (ADF) pipelines from a Power App and why it shouldn't be considered as a monster 🙂

- Schedule trigger: a trigger that invokes a pipeline on a wall-clock schedule.

- Tumbling window trigger: a trigger that operates on a periodic interval, while also retaining state.

- Event-based trigger: a trigger that responds to an event.

- Custom event trigger: a trigger that requires integration with Azure Event Grid (preview)

You can also manually run (execute) your ADF pipelines by using one of the following methods:

- .NET SDK

- Azure PowerShell module

- REST API

- Python SDK

Another option to manually trigger an ADF pipeline is to access the ADF instance itself and visually select a pipeline to run along with passing all necessary parameters. However, this will require elevated permissions, and you wouldn't want to give this access away.

Alternatively, Azure Data Factory exists as a connector in Logic Apps, Power Apps and Power Automate Flows and can support three different actions:

- Cancel a pipeline run: This operation cancels a pipeline run by its run ID.

- Create a pipeline run: This operation creates a new pipeline run in your factory

- Get a pipeline run: Get a particular pipeline run execution

Why Power App & Automate Flow?

As flexible as Azure Data Factory can be, it still doesn’t allow regular business users to start a necessary data transformation process whenever they need to, i.e. outside of scheduled time frames. Our business users just wanted to have “their” magic button to press and wait for the results.

Developing a sophisticated web application for business users with Rest API calls to trigger ADF pipelines is a very time-consuming exercise. Power App in contrast provides an easy way to develop an instrument that business users can use and operate, so this choice is settled.

Then I need to create a logical workflow to execute Data Factory pipelines in Azure, and I intuitively think about Azure Logic App. Yes, this is possible and Power App allows connection to Logic App in Azure, but even if Power Platform and Azure are both Microsoft ecosystems, they exist in two separate worlds. So, Power Automate Flow (which is a sibling to Azure Logic App) is another obvious choice for my development.

Power App development

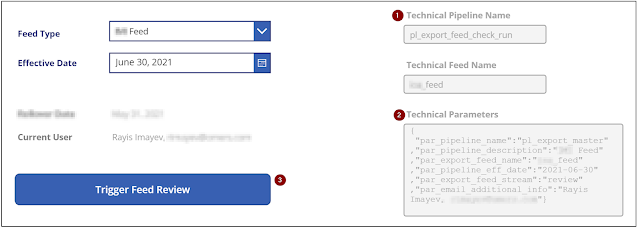

I left some of the "technical" greyed-out fields visible for validation purposes. This is just an example and your case might be different.

- Technical Pipeline Name text field defines an explicit name of my Data Factory pipeline that I want to execute. This field value is derived based on user interaction with my Power App.

- Technical Parameters text field defines JSON elements with all the parameter values, and those values are derived from the Power App user controls too. Again, your case with the pipeline name and parameters will be different.

- Magic button that will pass Technical Pipeline Name and Technical Parameters values to my Power Automate Flow which then will execute my ADF process.

eFront_ADF_Pipeline_Execute_Only is the actual name of my Power Automate Flow.

Power Automate Flow development

My Power Automate Flow needed to be as generic as possible. It should only have a reference (connection) to my Data Factory; the pipeline name with its parameters to execute should also come from the incoming payload.

(1) First, I extract a payload that comes from my Power App and then I save it in the "var_power_app_payload" variable:

(2) Then I transform "var_power_app_payload" variable into a JSON structure:

(3) Save the 'pipeline_name' JSON element into "var_adf_pipeline_name" variable comes next:

(4) Save the 'parameters' JSON element into "var_adf_pipeline_parameters" variable follows the previous step:

(5) Final, but not the last step is to execute the "Create a pipeline run" step and pass "var_adf_pipeline_name" and "var_adf_pipeline_parameters" variables along with selecting my Azure Subscription, Resource Group and Data Factory:

(6) After submitting a request to trigger an ADF pipeline we then just return a status of the previous step execution back to the Power App.

As a result, our business users can now interact with their Power App and initiate a data transformation process whenever they want to which will then trigger the Power Automate Flow and execute an actual Data Factory pipeline.

Closing Notes

There is a risk that business users may press their “magic” Power App button multiple times and this will then initiate multiple Data Factory pipeline executions that will run simultaneously. Azure Data Factory allows Concurrency setting on a pipeline level, let’s say, one at a time and other requests to execute the same pipeline will be queued.

However, we didn't need this queued process. Imagine seeing a queue of hundreds of pipelines in Data Factory if someone pressed that Power App button a hundred times.

Paul Andrew created a very elegant solution to check if an ADF pipeline is already running, then gracefully stop the new run - https://mrpaulandrew.com/2020/11/12/get-data-factory-to-check-itself-for-a-running-pipeline-via-the-azure-management-api/.

Another, thanks to Kenwyn Warner, who showed me how to interact with Power Automate Flows from a Power App!