Without a doubt, the most versatile data flow tool in the SSIS arsenal is the script component. This control grants ETL developers complete control over the flow of data, leverages the full power of the .NET Framework namespaces, and can be used as a source, destination, or transformation in data flow operations. Within the script logic, one can perform complex data manipulation not easily accomplished using other tools in SSIS. When used as a transformation, the developer must make the design decision to use the script component in either synchronous or asynchronous mode.

In this post, I’ll describe what synchronous script transformation processing is, and will show an example of how to use it in an SSIS package.

Synchronous Processing, Defined

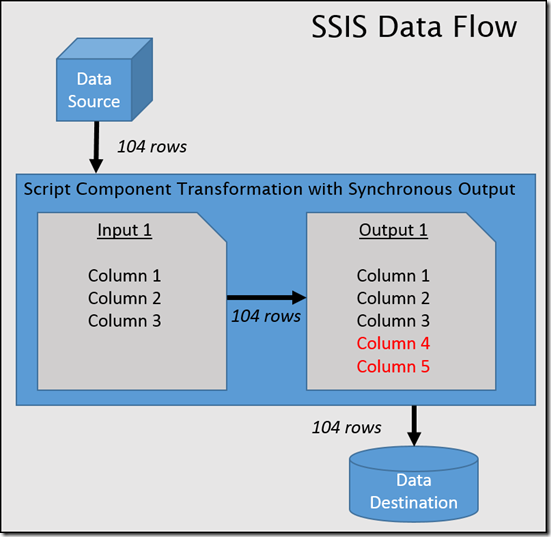

Simply put, using the script transformation synchronously means that for every row of data received by the input, exactly one row of data will be sent to the synchronized output. The output will contain the same metadata as the input, although it is possible to add more columns to the output. The SSIS developer does not have to explicitly add input rows to the output; when configured to run synchronously, this process occurs automatically. As shown in the graphic below, the number of input and output rows will always match when using synchronous outputs, and the input metadata will be passed along to the output (with new output columns shown in red).

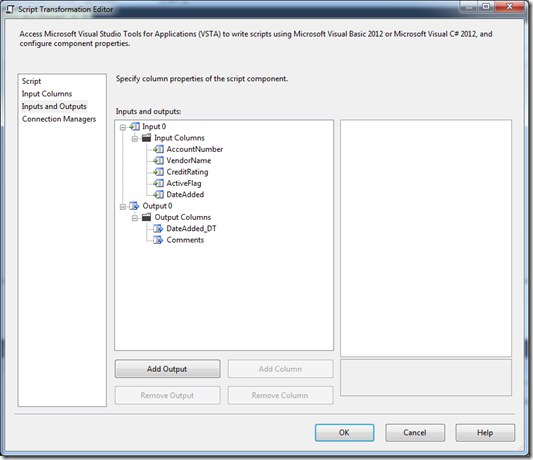

Using a synchronous output is the most common use of the script component transformation. It is also the default setting. You’ll notice when you create a new script component and configure it as a transformation, it will create one input and one output for you automatically. As shown in the example below, I’ve configured a sample package with some vendor data from a flat file that will be sent to my script component, and all of the metadata from the source can be seen in the input metadata.

Note the highlighted section, which sets the SynchronousInputID for the selected output. This was set by default. As mentioned previously, unless otherwise specified, the default output will be configured to run synchronously with the default input. It is worth noting that, when using multiple outputs, you can have more than just one output set to run synchronously with the input, but when explicitly adding new outputs this does not occur automatically. I’ll discuss multiple outputs more extensively when I cover asynchronous script processing in a future blog post.

You’ll notice that the default output ([Output 0]) does not appear to have any metadata associated with it. This is by design – it is inheriting all of the metadata from the input. Although I can’t change or remove any of the existing column metadata, I can add more columns to the output by selecting the output and clicking the Add Column button. As shown below, I’ve added a couple of extra columns to this output to store values I will be generating in the logic of the script.

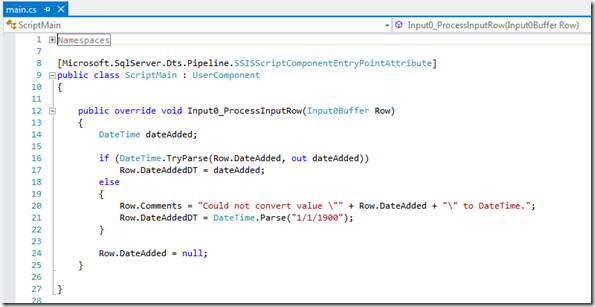

With the inputs and outputs configured, I can now write some code. Using the script component transformation synchronously is by far the simplest use of this tool, because much of the behavior is configured automatically. As shown below, I’ll focus my attention on the Input0_ProcessInputRow function, which was automatically created and named according to the name of the output. The Input0_ProcessInput row function is where all of the transformation will take place. (Please note that I have removed a lot of noncritical automatically-generated extra code to keep things simple.)

This is about as simple as SSIS coding gets – the above snippet is a fully functioning script transformation, even though it doesn’t yet really do anything. To make any changes to the data inline, I can address the existing columns (either those inherited from the input, or those I explicitly created in the output) by using the syntax Row.. In the snippet below, you can see that I’m doing some data type checking, and am assigning to my new output variable the date value parsed from the string input. If the input value cannot be parsed into a valid DateTime, I set it to a default value and write the original string value into a Comments column.

As shown in the data viewer output below, the original metadata, as well as the additional columns added to the output, are included in the output of the script component. Note the anomaly about 10 rows down, which reverts to the default values for DateAdded_DT and Comments as defined in the script transformation logic.

Potential Uses

Even though this design pattern using synchronized inputs and outputs is fairly simple, it also has a broad set of uses in ETL. In particular, here are a few cases where doing synchronized in-line operations works well:

- Data cleansing operations

- Complex string manipulation (such as detecting date format, or dissecting first, middle, last name from a Full Name field)

- Removing control characters

- Token replacement

- Data type checking and substitution

- Applying complex business rules

Conclusion

As shown in this post, using the script component synchronously is the simplest way to do inline data transformation within code. Because it does not change the source metadata or manipulate the number of output rows, there is a minimal amount of logistical code required.

At the beginning of the post, I also mentioned asynchronous processing, which is much more complex but also vastly more flexible. I will discuss asynchronous processing in the script component transformation in a future post.

The post Synchronous Processing in the SSIS Script Component appeared first on Tim Mitchell.