A Data Flow Task created with the SSIS Import/Export Wizard reads an entire data file into a single destination table. You cannot use the import/export wizard alone if you wish to direct records in a data file to different tables based upon the values of fields in the record, or to filter out some records entirely. You can either modify a wizard-generated data flow task, or create one from scratch.

To create a conditional data flow task, manually add a data flow task to the control flow container of an empty SSIS package, then click the data flow tab to add source, transformation, and destination elements to the data flow container.

Overview

In this article, we will create an SSIS package that reads a stock market sales data file using a flat file data source into a multicast data flow transformation that is connected by three data flow paths to an OLE DB destination element, a conditional split element, and a data conversion element.

The first data flow path from the multicast transformation sends all the records into a single table named S&P500.

The second data flow path sends the multicast records to a conditional split transformation that connects to three data flow paths that each connect to a table. Case 1 of the conditional split sends records containing ticker symbol "AMD" to a table named "AMD." Case 2 sends records with ticker symbol "INTC" to the "INTC" table. The default output (all the records minus the records diverted to other tables by the case statements) is sent to the "Leftovers" table.

The third path sends the records to a Data Conversion transformation, which casts the text value contained by the "Volume" field to an integer so it can be used in a mathematical logic statement. The output of the data converion element is sent to a conditional split element, which selects for records with a volume greater than or equal to 1,000,000, which are then sent to a table named "HighVolume."

Create SQL Server tables

Open a SQL Server Management Studio query window and execute the following SQL code.

BEGIN TRY DROP DATABASE DemoDB END TRY BEGIN CATCH END CATCH GO CREATE DATABASE DemoDB GO USE DemoDB GO CREATE TABLE [dbo].( [Date] [varchar](50) NULL, [Ticker] [varchar](50) NULL, [Open] [varchar](50) NULL, [High] [varchar](50) NULL, [Low] [varchar](50) NULL, [Close] [varchar](50) NULL, [Volume] [varchar](50) NULL ) ON [PRIMARY] CREATE TABLE [dbo].[AMD]( [Date] [varchar](50) NULL, [Ticker] [varchar](50) NULL, [Open] [varchar](50) NULL, [High] [varchar](50) NULL, [Low] [varchar](50) NULL, [Close] [varchar](50) NULL, [Volume] [varchar](50) NULL ) ON [PRIMARY] CREATE TABLE [dbo].[INTC]( [Date] [varchar](50) NULL, [Ticker] [varchar](50) NULL, [Open] [varchar](50) NULL, [High] [varchar](50) NULL, [Low] [varchar](50) NULL, [Close] [varchar](50) NULL, [Volume] [varchar](50) NULL ) ON [PRIMARY] CREATE TABLE [dbo].[HighVolume]( [Date] [varchar](50) NULL, [Ticker] [varchar](50) NULL, [Open] [varchar](50) NULL, [High] [varchar](50) NULL, [Low] [varchar](50) NULL, [Close] [varchar](50) NULL, [Volume] [varchar](50) NULL ) ON [PRIMARY] CREATE TABLE [dbo].[Leftovers]( [Date] [varchar](50) NULL, [Ticker] [varchar](50) NULL, [Open] [varchar](50) NULL, [High] [varchar](50) NULL, [Low] [varchar](50) NULL, [Close] [varchar](50) NULL, [Volume] [varchar](50) NULL ) ON [PRIMARY]

The SQL code creates a database named DemoDB and adds four tables named "S&P500," "AMD," "INTC" and "Leftovers" to it.

Save and extract resource file

Download the attached sp500hst.csv.zip resource file and extract it to the C:\demo_files\ folder.

Open the sp500hst.csv file to observe that field names are contained in the first record.

Create the SSIS package

Open an SSIS project in Business Intelligence Development Studio, create an empty SSIS package, then add a data flow task to the control flow container.

Click on the data flow tab and add a flat file source to the data flow container.

Right-click on the connection manager container and select "New Flat File Connection" from the popup menu list.

Browse to and select the "C:\demo_files\sp500hst.csv" file, add a connection manager name, then click the "Columns" node to define the columns.

Click the "OK" button...

...to add the sp_500hst_csv connection manager to the connection Mmanager container.

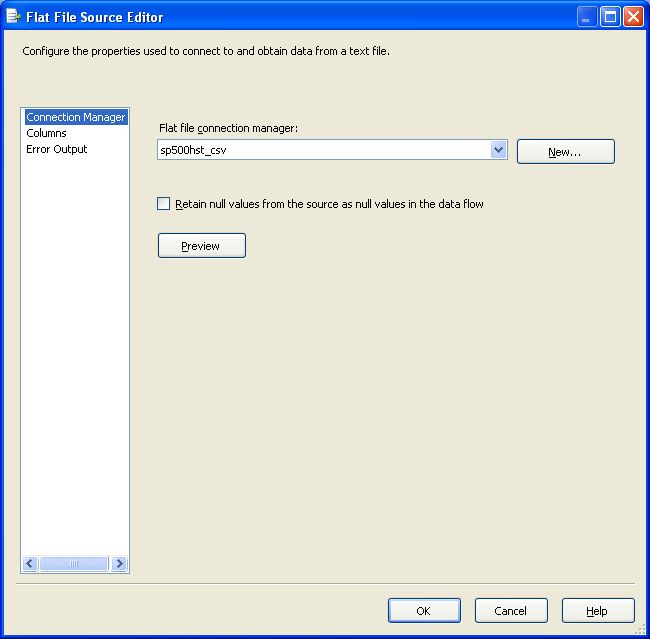

Double-click on the flat file source to bring up the editor. Select the sp500hst_csv connection manager, then click the "OK" button.

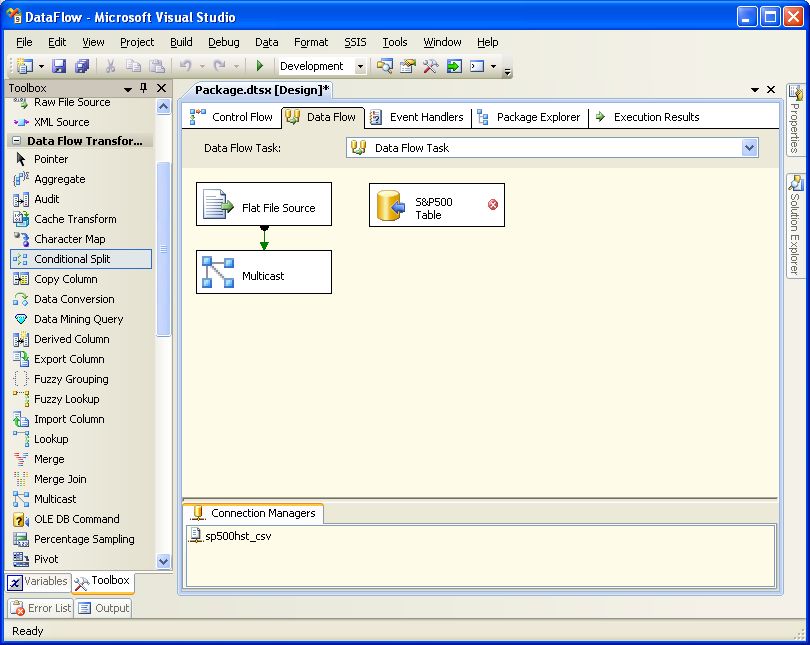

Drag-and-drop a multicast transformation into the data flow container.

Add a path from the flat file source to the multicast transformation.

Select "Flat File Source Output" from the drop-down menu and click the "OK" button.

Drag-and-drop an OLE DB destination to the data flow container.

Change the name of the OLE DB Destination transformation to "S&P500 Table."

Add a data flow path from the Multicast Transformation to the OLE DB Destination.

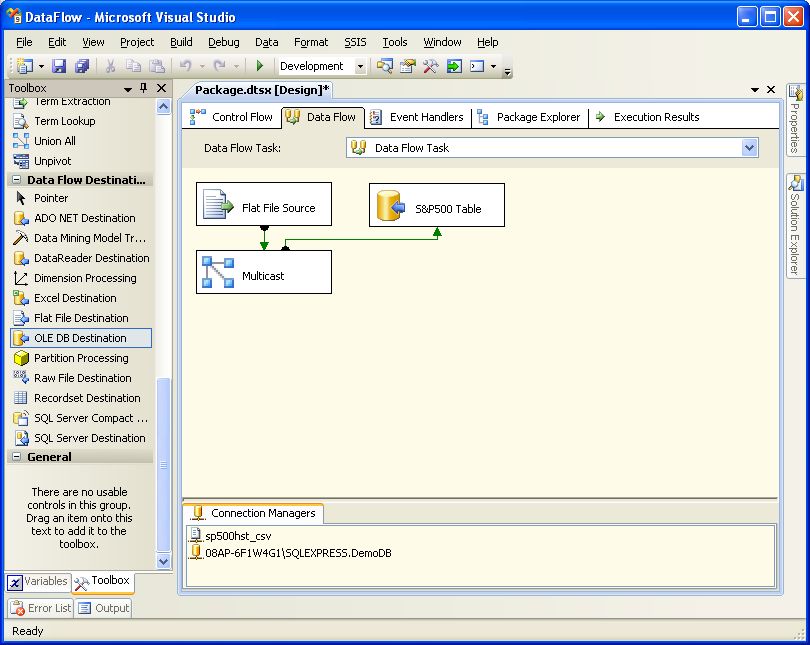

Right-click the Connection Manager container and select "New OLE DB Connection" from the popup menu.

Click the "New" button to create a new connection manager.

Select the server and database names from the drop-down menu lists and click the "OK" button.

Click the "OK" button again to complete creation of the connection manager.

Note that the OLE DB connection manager has been added to the connection manager container.

Double-click the OLE DB destination to bring up its editor, choose the connection manager and table from the dropdown menus, then click on the mappings node to map the columns.

Because the first line of the data file contains the field names, the source and destination columns are automatically mapped.

After clicking the "OK" button, you can see that the OLE DB destination error flag has disappeared.

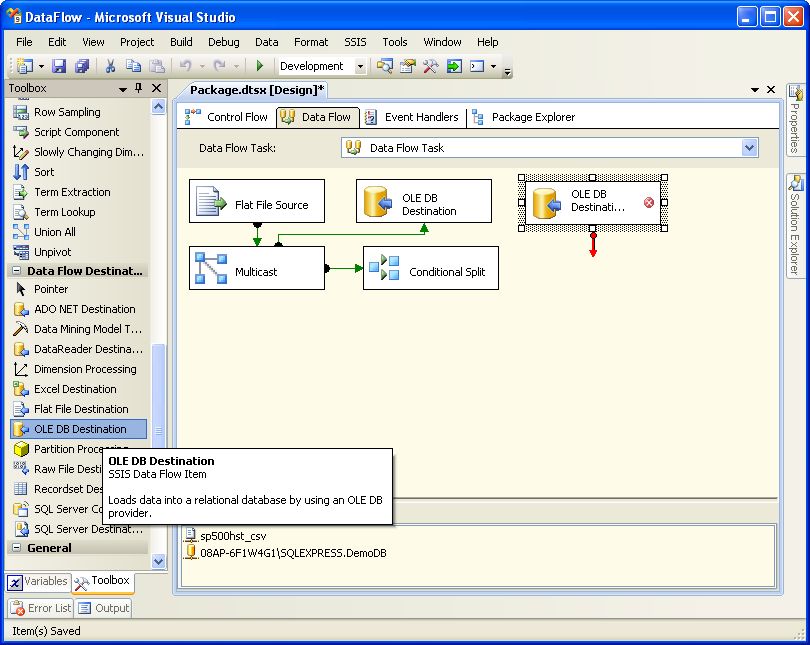

Add a conditional split element to the data flow container.

Add a data flow path from the multicast element to the conditional split element.

Double-click on the conditional split element...

...to bring up the conditional split transformation editor.

Expand the "Columns" folder and drag the "Ticker" field down to the "Condition" text box to create conditional case number 1.

Complete the condtion statement to display [Ticker] == "AMD"

Add a second case statement for "INTC" and click the"OK" button.

Add an OLE DB destination element to the data flow container.

Change the name of the OLE DB Destination to "AMD Table."

Add a path between the Conditional Split Transformation and the AMD Table OLE DB Destination.

Select "Case 1" form the output dropdown menu list.

Double-click on the AMD Table destination...

...to bring up the OLE DB Destination Editor. Configure the Connection Manager and Table or View dropdown menu lists, then click the "Mappings" node...

...to see that the input and destination columns are automatically mapped. Click the "OK" button.

The first data flow path from the conditional split element is now configured.

Add OLE DB destinations and paths for the INTC and Leftovers table in the same way we added the AMD table destination and path.

Now add a data conversion transformation element to the data flow container.

Add a path from the multicast transformation element to the data conversion transformation element.

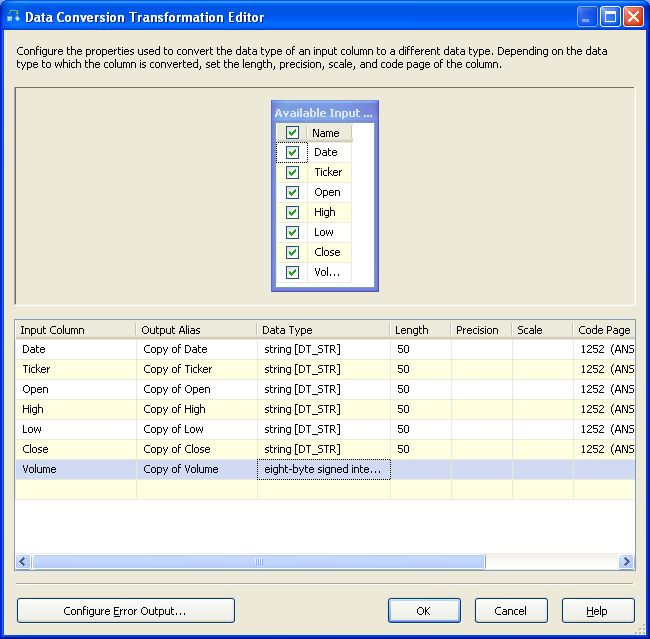

Double-click on the data conversion element...

...to bring up the editor. Select all of the available input checkboxes, change the "Copy of Volume" output alias data type value to "eight-byte signed integer," then click the "OK" button.

Add a conditional split transformation element to the data flow container.

Add a path betwen the data conversion transformation element and the new conditional split transformation element.

Select "Data Conversion Output" from the dropdown menu list.

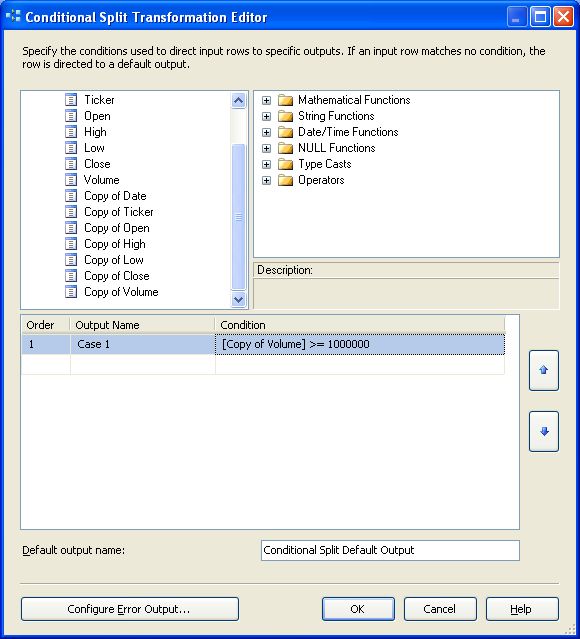

Double-click the conditional split transformation element...

...to bring up the editor. Create and edit case 1 to display [Copy of Volume] >= 1000000.

Add an OLE DB destination transformation to the data flow container.

Change the name of the OLE DB destination transformation to "HighVolume Table."

Add a path between the conditional split transformation to the HighVolume OLE DB destination.

Select Case 1 from the output dropdown list.

Double-click the HighVolume OLE DB destination...

...to bring up the eidtor. Configure the transformation to the HighVolume table and click the "Mappings" node...

...to automatically map the fields, then click the OK button.

Click the "Start Debugging" button  to execute the completed SSIS package.

to execute the completed SSIS package.

The package directs records to multiple tables.

Query the tables to confirm the record counts reported by the SSIS package.

That's it and hopefully you now know how to create a conditional data flow.

| Looking to get started with Integration Services? You might like our Stairway to Integration Services, designed to help you get started with the technology and learn how to build your own packages. |